Results for “evidence-based” 37 found

Is there Hope for Evidence-Based Policy?

Vital City magazine and the Niskanen Center’s Hypertext have a special issue on the prospects for “evidence-based policymaking.” The issue takes as its starting point Megan Stevenson’s Cause, effect, and the structure of the social world, a survey of RCTs in criminology which concludes that the vast majority of interventions “have little to no lasting effect.” The issue features responses from John Arnold, Jonathan Rauch, Anna Harvey, Aaron Chalfin, Jennifer Doleac, myself, and others. It’s an excellent issue.

My contribution focuses on the difference between changing preferences versus constraints. Here’s one bit:

Some other programs that Stevenson mentions elsewhere are also not predominantly constraint- or incentive-changing. Take, for example, the many papers estimating the effect of imprisonment on the post-release behavior of criminal defendants via the random selection of less and more lenient judges. At first, it may seem absurd to say that imprisonment is not about incentives. Isn’t deterrence the ne plus ultra of incentives? Yes, but the economic theory of deterrence, so-called general deterrence, is rooted in the anticipation of consequences — the odds before the crime. By the sentencing stage, we’re merely observing where the roulette wheel stopped. Criminals factor in the likelihood of capture as just another cost of doing business. Thus, the economic theory of deterrence predicts high rates of recidivism, as the calculus that justified the initial crime remains unchanged after punishment. To be sure, imprisonment might change behavior for all kinds of reasons. Maybe inmates learn that they underestimated the unpleasantness of prison, but perhaps they improve their criminal skills while in prison or join a gang, or perhaps the stain of a criminal record reduces the prospect of legitimate employment. Thus, the study of imprisonment’s effects on criminal defendants is intriguing, but it’s not testing deterrence or incapacitation, on which we have built a body of work with clear predictions.

Indeed, on Stevenson’s list only hot-spot policing is a clear example of changing constraints. It is perhaps not coincidental that hot-spot policing is one of the few interventions that Stevenson acknowledges “leads to a small but statistically significant decrease in reported crime in the areas with increased policing.” While I do not begrudge Stevenson her interpretation, other people shade the total evidence differently. Here, for example, is the Center for Evidence-Based Crime Policy, in my experience a rather tough-minded and empirically rigorous organization not easily swayed by compelling narratives:

As the National Research Council review of police effectiveness noted, “studies that focused police resources on crime hot spots provided the strongest collective evidence of police effectiveness that is now available.” A Campbell systematic review by Braga et al. comes to a similar conclusion; although not every hot spots study has shown statistically significant findings, the vast majority of such studies have (20 of 25 tests from 19 experimental or quasi-experimental evaluations reported noteworthy crime or disorder reductions), suggesting that when police focus in on crime hot spots, they can have a significant beneficial impact on crime in these areas. As Braga concluded, “extant evaluation research seems to provide fairly robust evidence that hot spots policing is an effective crime prevention strategy.”

Indeed, I argue that most of the programs that Stevenson shows failed tried to change preferences, while those that succeeded tended to focus on changing constraints. There are lessons for future policy and funding. Read the whole thing.

Shruti Rajagopalan and Janhavi Nilekani podcast

In this episode, Shruti speaks with [the excellent] Janhavi Nilekani about India’s high rate of C-sections compared with vaginal births, problems with maternal healthcare, the present and future of Indian midwifery and much more. Nilekani is the founder and chair of the Aastrika Foundation, which seeks to promote a future in which every woman is treated with respect and dignity during childbirth, and the right treatment is provided at the right time. She is a development economist by training and now works in the field of maternal health. She obtained her Ph.D. in public policy from Harvard and holds a 2010 B.A., cum laude, in economics and international studies from Yale.

Here is the link.

Regulatory quality is declining

One example of such evidence-free regulation in recent years comes from the Department of Health and Human Services (HHS). In 2021, HHS repealed a rule enacted by the Trump administration that would have required the agency to periodically review its regulations for their impact on small businesses. The measure was known as the SUNSET rule because it would attach sunset provisions, or expiration dates, to department rules. If the agency failed to conduct a review, the regulation expired.

Ironically, in proposing to rescind the SUNSET rule, HHS argued that it would be too time consuming and burdensome for the agency to review all of its regulations. Citing almost no academic work in support of its proposed repeal — a reflection of the anti-consequentialism that animates so much contemporary regulatory policy — the agency effectively asserted that assessing the real-world consequences of its existing rules was far less pressing an issue than addressing the perceived problems of the day (by, of course, issuing more regulations).

Through its actions, HHS has rejected the very notion of having to review its own rules and assess whether they work. In fact, the suggestion that agencies review their regulations is an almost inexplicably divisive issue in Washington today. “Retrospective review” has become a dirty term, while cost-benefit analysis has morphed into a tool to judge intentions rather than predict real-world consequences. The shift highlights how far the modern administrative state has drifted from the rational, evidence-based system envisioned by the law-and-economics movement just a few decades ago.

Here is more from James Broughel at Mercatus.

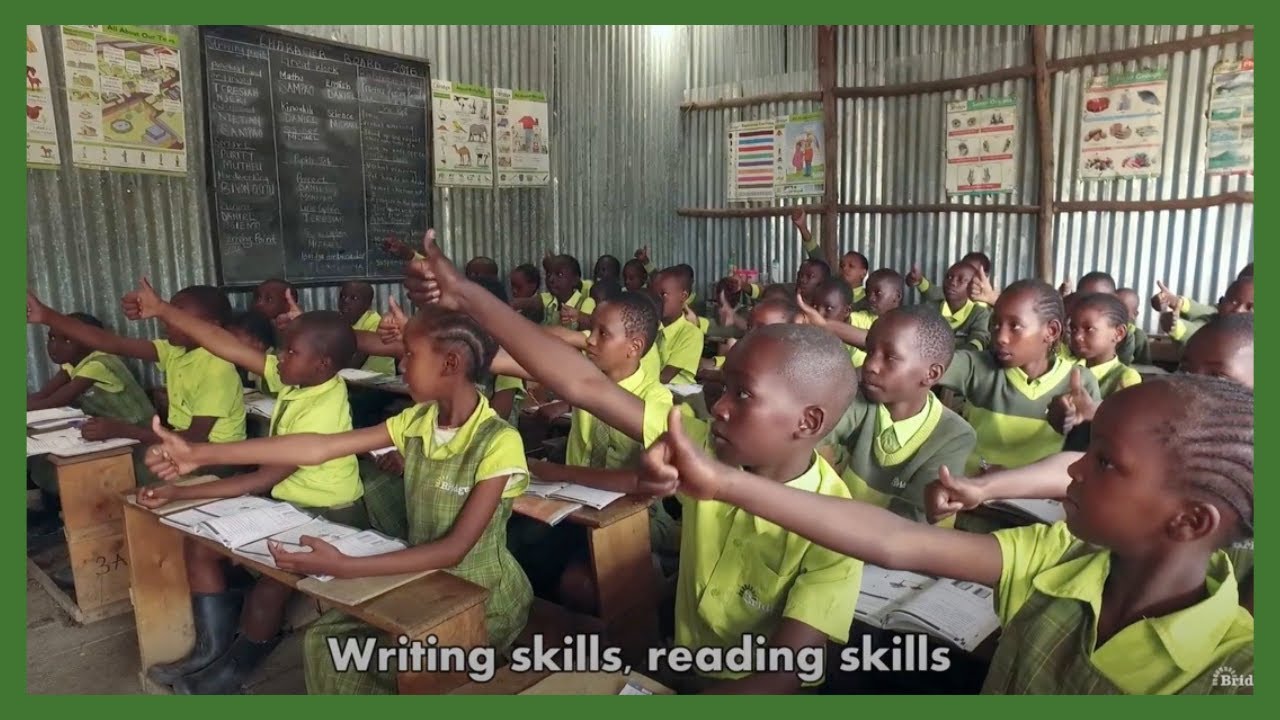

Direct Instruction Produces Large Gains in Learning, Kenya Edition

In an important new paper, Can Education be Standardized? Evidence from Kenya, Guthrie Gray-Lobe, Anthony Keats, Michael Kremer, Isaac Mbiti and Owen Ozier evaluate Bridge International schools using a large randomized experiment. Twenty-five thousand Kenyan students applied for 10,000 scholarships to Bridge International and the scholarships were given out by lottery.

Kenyan pupils who won a lottery for two-year scholarships to attend schools employing a highly-structured and standardized approach to pedagogy and school management learned more than students who applied for, but did not win, scholarships.

After being enrolled at these schools for two years, primary-school pupils gained approximately the equivalent of 0.89 extra years of schooling (0.81 standard deviations), while in pre-primary grades, pupils gained the equivalent of 1.48 additional years of schooling (1.35 standard deviations).
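As a quick check on the arithmetic behind these figures, here is a minimal Python sketch. The function names are hypothetical, the only inputs are the numbers quoted above, and it assumes that the reported gains come on top of two ordinary years of progress over the two-year enrollment period.

```python
def implied_years_per_sd(extra_years, effect_sd):
    """Years of schooling implied by one standard deviation of test scores."""
    return extra_years / effect_sd


def total_years_learned(years_enrolled, extra_years):
    """Total learning, assuming one ordinary year of progress per year enrolled."""
    return years_enrolled + extra_years


# Figures quoted in the research brief (two years of enrollment).
primary_total = total_years_learned(2, 0.89)
pre_primary_total = total_years_learned(2, 1.48)

# Both grade bands imply roughly the same conversion from SDs to years.
primary_rate = implied_years_per_sd(0.89, 0.81)
pre_primary_rate = implied_years_per_sd(1.48, 1.35)

print(f"primary: {primary_total:.2f} years of material in 2 years")
print(f"pre-primary: {pre_primary_total:.2f} years of material in 2 years")
print(f"years per SD: {primary_rate:.2f} (primary), {pre_primary_rate:.2f} (pre-primary)")
```

Under that assumption, primary pupils cover a little under three years of material in two years, and both grade bands imply about 1.1 years of schooling per standard deviation.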

These are very large gains. Put simply, children in the Bridge programs learnt approximately three years worth of material in just two years! Now, I know what you are thinking. We have all seen examples of high-quality, expensive educational interventions that don’t scale–that was the point of my post Heroes are Not Replicable and see also my recent discussion of the Perry Preschool project–but it’s important to understand the backstory of the Bridge study. Bridge Academy uses Direct Instruction and Direct Instruction scales! We know this from hundreds of studies. In 2018 I wrote (no indent):

What if I told you that there is a method of education which significantly raises achievement, has been shown to work for students of a wide range of abilities, races, and socio-economic levels and has been shown to be superior to other methods of instruction in hundreds of tests?….I am reminded of this by the just-published, The Effectiveness of Direct Instruction Curricula: A Meta-Analysis of a Half Century of Research which, based on an analysis of 328 studies using 413 study designs examining outcomes in reading, math, language, other academic subjects, and affective measures (such as self-esteem), concludes:

…Our results support earlier reviews of the DI effectiveness literature. The estimated effects were consistently positive. Most estimates would be considered medium to large using the criteria generally used in the psychological literature and substantially larger than the criterion of .25 typically used in education research (Tallmadge, 1977). Using the criteria recently suggested by Lipsey et al. (2012), 6 of the 10 baseline estimates and 8 of the 10 adjusted estimates in the reduced models would be considered huge. All but one of the remaining six estimates would be considered large. Only 1 of the 20 estimates, although positive, might be seen as educationally insignificant.

…The strong positive results were similar across the 50 years of data; in articles, dissertations, and gray literature; across different types of research designs, assessments, outcome measures, and methods of calculating effects; across different types of samples and locales, student poverty status, race-ethnicity, at-risk status, and grade; across subjects and programs; after the intervention ceased; with researchers or teachers delivering the intervention; with experimental or usual comparison programs; and when other analytic methods, a broader sample, or other control variables were used.

Indeed, in 2015 I pointed to Bridge International as an important, large, and growing set of schools that use Direct Instruction to create low-cost, high quality private schools in the developing world. The Bridge schools, which have been backed by Mark Zuckerberg and Bill Gates, have been controversial which is one reason the Kenyan results are important.

One source of controversy is that Bridge teachers have less formal education and training than public school teachers. But Bridge teachers need less formal education because they are following a script and are closely monitored. DI isn’t designed for heroes, it’s designed for ordinary mortals motivated by ordinary incentives.

School heads are trained to observe teachers twice daily, recording information on adherence to the detailed teaching plans and interaction with pupils. School heads are given their own detailed scripts for teacher observation, including guidance for preparing for the observation, what teacher behaviors to watch for while observing, and how to provide feedback. School heads are instructed to additionally conduct a 15 minute follow up on the same day to check whether teachers incorporated the feedback and enter their scores through a digital system. The presence of the scripts thus transforms and simplifies the task of classroom observation and provision of feedback to teachers. Bridge also standardizes a range of other processes from school construction to financial management.

Teachers are observed twice daily! The model is thus education as a factory with extensive quality control–which is why teachers don’t like DI–but standardization, scale, and factory production make civilization possible. How many bespoke products do you buy? The idea that education should be bespoke gets things entirely backward because that means that you can’t apply what you learn about what works at scale–Heroes are Not Replicable–and thus you don’t get the benefits of refinement, evolution, and continuous improvement that the factory model provides. I quoted Ian Ayres in 2007:

“The education establishment is wedded to its pet theories regardless of what the evidence says.” As a result they have fought it tooth and nail so that “Direct Instruction, the oldest and most validated program, has captured only a little more than 1 percent of the grade-school market.”

Direct Instruction is evidence-based instruction that is formalized, codified, and implemented at scale. There is a big opportunity in the developing world to apply the lessons of Direct Instruction and accelerate achievement. Many schools in the developed world would also be improved by DI methods.

Addendum 1: The research brief to the paper, from which I have quoted, is a short but very good introduction to the results of the paper and also to Direct Instruction more generally.

Addendum 2: A surprising number of people over the years have thanked me for recommending DI co-founder Siegfried Engelmann’s Teach Your Child to Read in 100 Easy Lessons.

Is the scolding equilibrium shifting, and if so why?

As the pandemic evolves, so does the tendency of people to take moral positions they would not normally endorse. Most notably, many left-wing commentators are becoming moral scolds, stressing ideals of individual responsibility.

Consider these words:

“So it’s time to stop being diffident and call out destructive behavior for what it is. Doing so may make some people feel that they’re being looked down on. But you know what? Your feelings don’t give you the right to ruin other people’s lives.”

If I had read that paragraph two years ago, I might have thought it was a conservative columnist lamenting inner-city crime, or perhaps complaining about the behavior of homeless people in San Francisco. But no: It is Paul Krugman discussing those who will not get vaccinated or wear masks. He calls it “the rage of the responsible,” and it is emblematic of a broader set of current left-wing attitudes, most of all toward the red state responses to the pandemic.

To be clear, I agree with Krugman’s point, and I frequently express similar sentiments. All the same, I wonder about the rules here. When exactly are “the responsible” allowed to express their quiet rage, on which issues and on which terms?

The alternative to this rage is the language of victimhood. For example, many on the left tend to portray the homeless as hostages to circumstances largely beyond their control: the high cost of housing, unjust eviction policies, a tattered social welfare state, perhaps mental illness or drug addiction.

There is some truth in all those hypotheses. Still, when it comes to the homeless, am I also allowed to express the quiet rage of the responsible? Or is only the rhetoric of victimhood allowed?

There is no doubt that homeless people suffer very real injustices. But it could be argued that allowing oneself to become homeless is a greater abdication of responsibility than refusing to be vaccinated. It is also worse for your health and bad for the community, as anyone from San Francisco can tell you.

One rejoinder might be that a pandemic is different. Maybe so, but if this were the 1980s, during the peak of the HIV-AIDS epidemic, one could imagine a Moral Majority advocate expressing sentiments similar to Krugman’s about gay men who engage in unsafe sex. Today such a view would be considered uncouth, at least in the mainstream media, and that’s not only because there are now effective treatments against HIV-AIDS. This kind of scolding has mostly gone out of fashion, especially when the recipients have been victims of prior or current social discrimination.

Or consider the question of suicide. There was a time in America when it was common to view suicide as a violation of Christian doctrine. Now there is largely sympathy for those who have killed themselves. Is this change for the better? Maybe, but it’s not clear that this issue has been given serious evidence-based consideration. Scolding sometimes helps to limit the number of wrong deeds, and everyone does it to some degree, even when it is sometimes not appropriate.

Then there are alcohol and drug abuse, which have some features of epidemics in that they exhibit social contagion. Your drunkenness, for example, on average encourages some of your friends to experiment with the same. But scolding alcoholics also is out of fashion, even though the social costs of alcohol abuse are extremely high, especially when considered cumulatively. As a teetotaler, I sometimes express my own quiet rage of the responsible, and my reaction is mostly considered a strange curiosity.

It is not only left-wing thinkers who have ended up in strange ideological positions. Governor Ron DeSantis of Florida, a conservative Republican and one of America’s leading right-wing politicians, has essentially expanded public health-care coverage in his state by setting up mobile units to administer monoclonal antibodies to Covid-19 sufferers. I’m all for that. At the same time, I notice he continues to oppose Medicaid expansion in Florida.

What explains the attitudinal shifts we are seeing? One possibility is that left-wing thinkers are getting more puritanical and are more comfortable in their new role as scolds, including with respect to sex and vaccination and mask-wearing. That would leave Trumpist Republicans as the defenders of medical choice and the sexual libertinism of the 1960s and 1970s.

Another possibility, not mutually exclusive, is that few of us are intellectually consistent, and so our scolding is increasingly shaped by affective political polarization. The left will scold the practices of Trump supporters, while the right will scold the woke, and views on any particular issue will be adjusted to fit into this broader pattern. If an issue is not very partisan, such as alcohol abuse or suicide, scolding simply will decline.

Here is an article on the movement to treat vaccinated patients first. Fine by me! But what exactly are the egalitarians supposed to say? Is meritocracy now allowed to rear its ugly head? Or do no other social outcomes have anything to do with your merit? Only this one? Really?

Emergent Ventures winners, 15th cohort

Emily Oster, Brown University, in support of her COVID-19 School Response Dashboard and the related “Data Hub” proposal, to ease and improve school reopenings, project here.

Kathleen Harward, to write and market a series of children’s books based on classical liberal values.

William Zhang, a high school junior on Long Island, NY, for general career development and to popularize machine learning and computation.

Kyle Schiller, to study possibilities for nuclear fusion.

Aaryan Harshith, 15 year old in Ontario, for general career development and “LightIR is the world’s first device that can instantly detect cancer cells during cancer surgery, preventing the disease from coming back and keeping patients healthier for longer.”

Anna Harvey, New York University and Social Science Research Council, to bring evidence-based law and economics research to practitioners in police departments and legal systems.

EconomistsWritingEveryDay blog, here is one recent good Michael Makowsky post.

Richard Hanania, Center for the Study of Partisanship and Ideology, to pursue their new mission.

Jeremy Horpedahl, for his work on social media to combat misinformation, including (but not only) Covid misinformation.

Congratulations! Here are previous Emergent Ventures winners.

What I’ve been reading

1. Matthew Hongoltz-Hetling, A Libertarian Walks Into a Bear: The Utopian Plot to Liberate an American Town (And Some Bears). A fun look at the Free Town project as applied to Grafton, New Hampshire: “During a television interview, a Grafton resident accused the Free Towners of “trying to cram freedom down our throats.””

2. Cass R. Sunstein and Adrian Vermeule, Law & Leviathan: Redeeming the Administrative State. Self-recommending from the pairing alone, and there is a great deal of interesting content in the 145 pp. of text. It is furthermore an interesting feature of this book that it was written at all on the chosen topic. Perhaps the administrative state is under more fire than I realize. And might you consider this book a centrist version of…maybe call it “state capacity not quite libertarianism”?

3. Michael D. Gordin, The Pseudo-Science Wars: Immanuel Velikovsky and the Birth of the Modern Fringe. A somewhat forgotten but still fascinating episode in the history of science, extra-interesting for those interested in Venus. I had not known that Velikovsky pushed a weird version of a eugenicist theory stating that Israel was too hot for its own long-term good, and that its inhabitants needed to find ways of cooling it down.

4. History, Metaphor, Fables: A Hans Blumenberg Reader, edited by Bajohr, Fuchs, and Kroll. I love Blumenberg, but the selection here didn’t quite sell me. Better to start with his The Legitimacy of the Modern Age, noting that book is a tough climb for just about anyone and it requires your full attention for some number of weeks. Might Blumenberg be the best 20th-century thinker who isn’t discussed much in the Anglo-American world? And yes it is Progress Studies too.

5. Laura Tunbridge, Beethoven: A Life in Nine Pieces. Smart books on Beethoven are like potato chips, plus you can listen to his music while reading (heard Op.33 Bagatelles lately?). In addition to some of the classics, this book covers some lesser known pieces such as the Septet, An die Ferne Geliebte, and the Choral Fantasy, and how they fit into Beethoven’s broader life and career. Intelligent throughout.

6. Sean Scully, The Shape of Ideas, edited and written by Timothy Rub and Amanda Sroka. Is Scully Ireland’s greatest living artist? He has been remarkably consistent over more than five decades of creation. This is likely the best Scully picture book available, and the text is useful too. Since it is abstract color and texture painting, he is harder than most to cancel — will we see the visual arts shift in that direction?

Jonathan E. Hillman, The Emperor’s New Road: China and the Project of the Century, is a good introduction to its chosen topic.

Robert Litan, Resolved: Debate Can Revolutionize Education and Help Save Our Democracy: “…incorporate debate or evidence-based argumentation in school as early as the late elementary grades, clearly in high school, and even in college.”

I am closer to the economics than the politics of Casey B. Mulligan, You’re Hired! Untold Successes and Failures of a Populist President, but nonetheless it is an interesting and contrarian book, again here is the excellent John Cochrane review.

There is also Harriet Pattison, Our Days are Like Full Years: A Memoir with Letters from Louis Kahn, a lovely romance with nice photos, sketches, and images as well, very nice integration of text and visuals.

Wednesday assorted links

1. Russian billionaire wants to buy cancelled Confederate statues.

3. Where are the missing right-wing firms? And Arnold.

6. An evidence-based return to work plan.

7. The nasal spray, which will be entering clinical trials.

Sunday assorted links

1. John Cleese on PC and wokeness. I think the first comment is satire rather than serious, but one can’t be entirely sure these days. The best-known Monty Python episodes these days are entirely acceptable, but some of the now lesser-known works are pretty…out there.

2. “Meanwhile, for-profit companies charge schools thousands of dollars for the training, making the active shooter drill industry worth an estimated $2.7 billion — “all in pursuit of a practice that, to date, is not evidence-based,” according to the researchers.” Link here.

3. Ross Douthat on how many lives a more competent president would have saved (NYT).

4. Why don’t coaches/managers adjust more? A parable from the NBA, but with much broader applicability. Note that sometimes the star player is the problem too.

Friday assorted links

1. “The evening’s entertainment harshly criticizes capitalism, and at $2,000 a seat…” (NYT)

2. “Pledges to Notre Dame by rich stir resentment…” (NYT) More information here.

3. Has TikTok learned how to censor the internet?

4. Me on The Gist, Slate podcast with Mike Pesca. And Ryan Bourne reviews *Big Business* in the Daily Telegraph.

5. Have we finally figured out how general anesthesia works?

6. Emily Oster on evidence-based parenting and breast-feeding (NYT).

Is Dentistry Safe and Effective?

The FDA may be too conservative but it does subject new pharmaceuticals to real scientific tests for efficacy. In contrast, many medical and surgical procedures have not been tested in randomized controlled trials. Moreover, dental care is far behind medical care in demanding scientific evidence of efficacy. A long-read in The Atlantic spends far too much time on a single case of egregious dental fraud but its larger point is correct:

Common dental procedures are not always as safe, effective, or durable as we are meant to believe. As a profession, dentistry has not yet applied the same level of self-scrutiny as medicine, or embraced as sweeping an emphasis on scientific evidence.

…Consider the maxim that everyone should visit the dentist twice a year for cleanings. We hear it so often, and from such a young age, that we’ve internalized it as truth. But this supposed commandment of oral health has no scientific grounding. Scholars have traced its origins to a few potential sources, including a toothpaste advertisement from the 1930s and an illustrated pamphlet from 1849 that follows the travails of a man with a severe toothache. Today, an increasing number of dentists acknowledge that adults with good oral hygiene need to see a dentist only once every 12 to 16 months.

The joke, of course, is that there’s no evidence for the 12 to 16 month rule either. Still, give credit to Ferris Jabr for mentioning that the case for fluoridation is also weak by modern standards–questioning fluoridation has been a taboo in American society since anti-fluoridation activists were branded as far-right conspiracy theorists in the 1950s.

The Cochrane organization, a highly respected arbiter of evidence-based medicine, has conducted systematic reviews of oral-health studies since 1999….most of the Cochrane reviews reach one of two disheartening conclusions: Either the available evidence fails to confirm the purported benefits of a given dental intervention, or there is simply not enough research to say anything substantive one way or another.

Fluoridation of drinking water seems to help reduce tooth decay in children, but there is insufficient evidence that it does the same for adults. Some data suggest that regular flossing, in addition to brushing, mitigates gum disease, but there is only “weak, very unreliable” evidence that it combats plaque. As for common but invasive dental procedures, an increasing number of dentists question the tradition of prophylactic wisdom-teeth removal; often, the safer choice is to monitor unproblematic teeth for any worrying developments. Little medical evidence justifies the substitution of tooth-colored resins for typical metal amalgams to fill cavities. And what limited data we have don’t clearly indicate whether it’s better to repair a root-canaled tooth with a crown or a filling. When Cochrane researchers tried to determine whether faulty metal fillings should be repaired or replaced, they could not find a single study that met their standards.

The third cohort of Emergent Ventures recipients

As always, note that the descriptions are mine and reflect my priorities, as the self-descriptions of the applicants may be broader or slightly different. Here goes:

Jordan Schneider, for newsletter and podcast and writing work “explaining the rise of Chinese tech and its global ramifications.”

Michelle Rorich, for her work in economic development and Africa, to be furthered by a bike trip from Cairo to Cape Town.

Craig Palsson, Market Power, a new YouTube channel for economics.

Jeffrey C. Huber, to write a book on tech and economic progress from a Christian point of view.

Mayowa Osibodu, building AI programs to preserve endangered languages.

David Forscey, travel grant to look into issues and careers surrounding protection against election fraud.

Jennifer Doleac, Texas A&M, to develop an evidence-based law and economics, crime and punishment podcast.

Fergus McCullough, University of St. Andrews, travel grant to help build a career in law/history/politics/public affairs.

Justin Zheng, a high school student working on biometrics for cryptocurrency.

Matthew Teichman at the University of Chicago, for his work in philosophy podcasting.

Kyle Eschen, comedian and magician and entertainer, to work on an initiative for the concept of “steelmanning” arguments.

Here is the first cohort of winners, and here is the second cohort. Here is the underlying philosophy behind Emergent Ventures. Note by the way, if you received an award very recently, you have not been forgotten but rather will show up in the fourth cohort.

Tuesday assorted links

1. Markets in everything: UNESCO World Heritage Sites.

2. Venture Research.

3. Purely evidence-based policy does not exist.

5. The diffusion of military surrender.

6. South Korean cyberfunerals, the ultimate right to be forgotten.

Is NIH funding seeing diminishing returns?

Scientific output is not a linear function of amounts of federal grant support to individual investigators. As funding per investigator increases beyond a certain point, productivity decreases. This study reports that such diminishing marginal returns also apply for National Institutes of Health (NIH) research project grant funding to institutions. Analyses of data (2006-2015) for a representative cross-section of institutions, whose amounts of funding ranged from $3 million to $440 million per year, revealed robust inverse correlations between funding (per institution, per award, per investigator) and scientific output (publication productivity and citation impact productivity). Interestingly, prestigious institutions had on average 65% higher grant application success rates and 50% larger award sizes, whereas less-prestigious institutions produced 65% more publications and had a 35% higher citation impact per dollar of funding. These findings suggest that implicit biases and social prestige mechanisms (e.g., the Matthew effect) have a powerful impact on where NIH grant dollars go and the net return on taxpayers investments. They support evidence-based changes in funding policy geared towards a more equitable, more diverse and more productive distribution of federal support for scientific research. Success rate/productivity metrics developed for this study provide an impartial, empirically based mechanism to do so.

That is by Wayne P. Wahls, via Michelle Dawson.

The seven forbidden words?

Policy analysts at the Centers for Disease Control and Prevention in Atlanta were told of the list of forbidden words at a meeting Thursday with senior CDC officials who oversee the budget, according to an analyst who took part in the 90-minute briefing. The forbidden words are “vulnerable,” “entitlement,” “diversity,” “transgender,” “fetus,” “evidence-based” and “science-based.”

That’s the WaPo piece everyone is abuzz about. A few observations:

1. This story may well be true, but I’d like more than “…according to an analyst who took part in the 90-minute briefing.” Here is another account of what exactly is known. Wasn’t “not publishing the article until it is better sourced” the evidence-based thing to do?

2. I don’t have a great fondness for the terms “evidence-based” or “science-based.” When they are used on MR, it is often as a form of third-person reference or with a slight mock or ironic touch. When I see the words used by others, my immediate reaction is to think someone is deploying it selectively, without complete self-awareness, or as a bullying tactic, in lieu of an actual argument, or as a way of denying how much their own argument depends on values rather than science. I wouldn’t ban the words for anyone working for me, but seeing them often prompts my editor’s red pen, so to speak. The most er…evidence-based people I know don’t use the term so much, least of all with reference to themselves.

3. In any case, the suggested replacement phrase — “CDC bases its recommendations on science in consideration with community standards and wishes” — I do not find offensive or anti-science, and I can imagine a plausible case that it is an actual improvement. Science is (ought to be) value-free, yet CDC and more broadly federal policy should embody values too. It should not think of itself as “the handmaiden of science.”

4. There is a fine line between “censorship” and “a bureaucratic organization which can be badly damaged by individual freelancing deciding to adopt uniform terminologies.” I don’t doubt both might be going on here, but I’d like to see the extant Twitter takes show a little more subtlety on the broader point. Don’t forget that the executive branch of government reports to the…executive, it is not a freestanding committee for debate, however much it might sometimes like to imagine otherwise.

5. The word “diversity” usually isn’t specific enough, or is channeling unstated preconceptions about how diversity should be interpreted. We should improve our use of this word. I have similar feelings about “vulnerable.”

6. People react to changes rather than levels.

7. “Fetus” — look, it is fine to disagree with the “pro-life view” (I’m not even sure what is the most neutral way of labeling it). But is banning the use of the word “fetus” in institutional documents censorship? What if an employee, during the Obama years, in an official CDC release had referred to a “fetus” as a “child”? Would that have been changed back to fetus? I am inclined to say yes. Is it censorship in only one direction, or are both decisions censorship? Or is this better seen as a disagreement over matters of fact? A disagreement over values? I am genuinely unsure, and I am unsure what a majority of the American public would think. But I would say this is sooner worth a ponder than a rant.

8. If nothing else, Sam Altman can show up in China, post “here is my vulnerable entitlement diversity transgender fetus, who is evidence-based and science-based” on his Weibo account, and then go order some Chairman Mao’s braised pork belly.

9. What are the forbidden words in other parts of the federal government, whether de jure or de facto? Will anyone be showing us a list? Or is that list censored too?