A Very Depressing Paper on the Great Stagnation

Are Ideas Getting Harder to Find? Yes, say Bloom, Jones, Van Reenen, and Webb. A well-known fact about US economic growth is that it has been relatively constant for a hundred years or more. Yet we also know that the number of researchers has increased greatly over the same period. More researchers and the same growth rate suggest a declining productivity of ideas. Jones made this point in a much earlier paper that has long nagged at me. With just one country and rising world growth rates, however, I wondered whether the US had somehow had offsetting factors. Bloom, Jones, Van Reenen, and Webb now return to the issue with a more detailed investigation of specific industries, and the picture isn’t pretty.

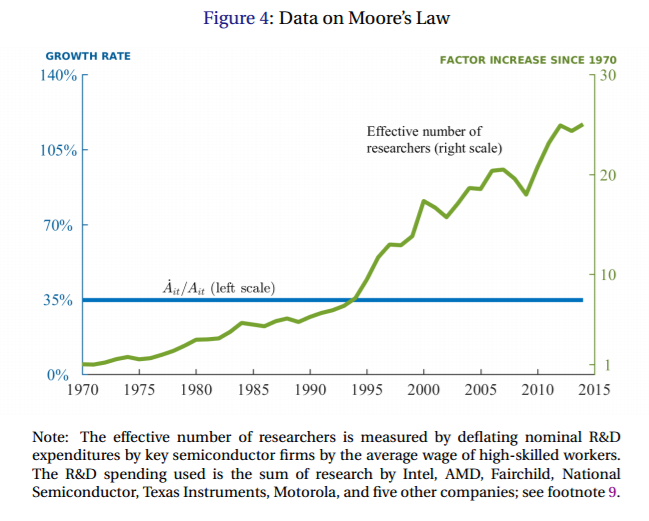

Moore’s law (increasing transistors per CPU) is often trotted out as the stock example of an amazing increase in productivity, and it is one when measured on the output side. But when you look at Moore’s law from the perspective of inputs, what we see is a tremendous decline in idea productivity.

The striking fact, shown in Figure 4, is that research effort has risen by a factor of 25 since 1970. This massive increase occurs while the growth rate of chip density is more or less stable: the constant exponential growth implied by Moore’s Law has been achieved only by a staggering increase in the amount of resources devoted to pushing the frontier forward.
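To make the input-side arithmetic concrete, here is a minimal sketch assuming research productivity is measured as the growth rate of the output metric divided by research effort. If chip-density growth stays constant while effort rises 25-fold, productivity falls to 1/25 of its 1970 level. The 35% annual growth figure and the normalization of 1970 effort to 1 are illustrative assumptions; the ratio does not depend on either.

```python
def research_productivity(growth_rate: float, research_effort: float) -> float:
    """Idea productivity: growth rate of the output metric per unit of research effort."""
    return growth_rate / research_effort

# Illustrative assumptions: constant chip-density growth of ~35% per year,
# with 1970 research effort normalized to 1.
growth = 0.35
effort_1970 = 1.0
effort_today = 25.0 * effort_1970  # the factor-of-25 rise in research effort

ratio = (research_productivity(growth, effort_today)
         / research_productivity(growth, effort_1970))
print(f"Research productivity relative to 1970: {ratio:.3f}")  # 0.040
```

Because growth appears in both numerator and denominator, any constant growth rate gives the same answer: a 25-fold rise in inputs with flat output growth means each researcher is producing 1/25 as much progress.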

In some ways Moore’s law is the least disturbing trend because massive increases in researchers have at least kept growth constant. In other areas, growth is slowing despite many more researchers.

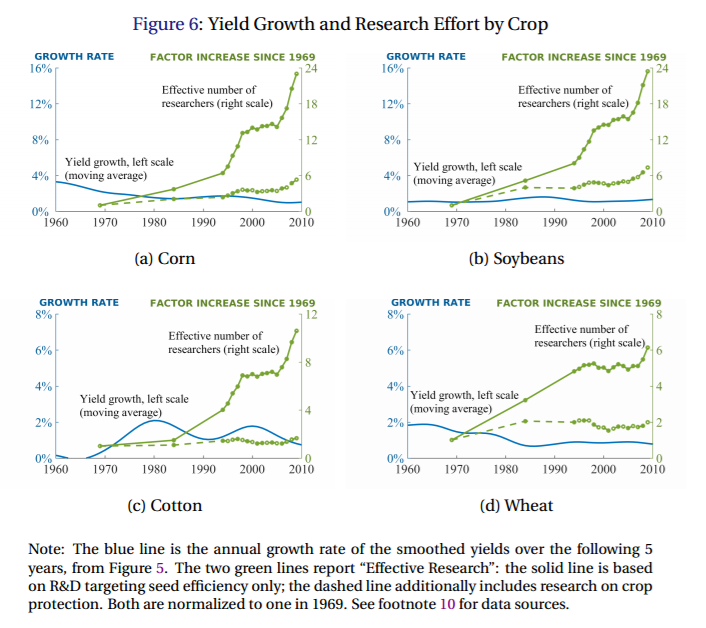

Agricultural yields, for example, are increasing but the rate is constant or declining despite big increases in the number of researchers.

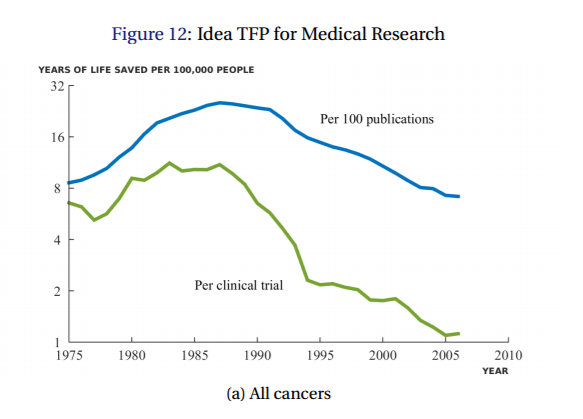

Since 1950, life expectancy at birth has been growing at a remarkably steady rate of about 1.8 years per decade, but that growth has been bought only by an ever-increasing number of researchers. Here, for example, is cancer mortality as a function of the number of publications or clinical trials. Each clinical trial used to be associated with ~8 lives saved per 100,000 people, but today a new clinical trial is associated with only ~1 life saved per 100,000.

And how is this for a depressing summary sentence:

…the economy has to double its research efforts every 13 years just to maintain the same overall rate of economic growth.
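It helps to translate "double every 13 years" into an annual rate. The sketch below is simple compounding arithmetic on the quoted figure; the 52-year horizon is an arbitrary illustrative choice (four doubling periods), and the helper name `effort_multiple` is hypothetical.

```python
import math

DOUBLING_YEARS = 13  # from the quoted summary sentence

# Annual growth in research effort implied by doubling every 13 years.
annual_growth = 2 ** (1 / DOUBLING_YEARS) - 1    # compound annual rate
continuous_rate = math.log(2) / DOUBLING_YEARS   # continuously compounded rate

def effort_multiple(years: float) -> float:
    """Hypothetical helper: how many times larger research effort must be after `years`."""
    return 2 ** (years / DOUBLING_YEARS)

print(f"annual growth in research effort: {annual_growth:.1%}")  # 5.5%
print(f"continuous rate: {continuous_rate:.2%}")                 # 5.33%
print(f"multiple needed after 52 years: {effort_multiple(52):.0f}x")  # 16x
```

In other words, research effort has to grow by roughly 5.5% a year, forever, just to keep the overall growth rate from falling.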

In my TED talk and in Launching I pointed to increased market size and increased wealth in developing countries as two factors which increase the number of researchers and therefore increase the global flow of ideas. That remains true. Indeed, if Bloom et al. are correct, then, even more than before, we can’t afford to waste minds. To maximize growth we need to draw on all the world’s brain power, and that means we need a world of peace, trade and the free flow of ideas.

Nevertheless, the Bloom et al. findings cut against optimism. The idea of the Singularity, for example, comes from projecting constant or increasing growth rates into the future, but if it takes ever more researchers just to keep growth rates from falling, then growth must slow as we run out of researchers. As China and India become wealthy the number of researchers will increase, but better institutions can only postpone lower growth rates, not prevent them. Most frighteningly, can we sustain a world of peace, trade and the free flow of ideas with lower growth rates?

Just because idea production has become more difficult in the past, however, doesn’t mean it must remain so forever. We could be in a slump. Breakthroughs in ideas for improving idea production could raise growth rates. Genetic engineering to increase IQ could radically increase growth. Artificial intelligence or brain emulations could greatly increase ideas and growth, especially since we could create AIs or EMs faster and at far lower cost than we can create more natural intelligences. That sounds great, but if computers and the Internet haven’t already had such an effect, one wonders how long we will have to wait to break the slump.

I told you the paper was depressing.