Category: Science

My excellent Conversation with Toby Wilkinson

Here is the audio, video, and transcript. Most of all, we cover Ptolemaic Egypt. Here is part of the episode summary:

Tyler and Toby cover how Alexander took over the empire almost without a fight, why Alexandria became the Manhattan of the ancient world, whether the era was as philosophically fertile as it was scientifically, whether your ancient doctor’s visit had positive expected value, what Egypt was actually exporting and selling, whether living standards rose above subsistence or stayed Malthusian, how the ethnic divide between Greek rulers and Egyptian subjects shaped society, what constrained the Ptolemaic Empire from becoming the next Rome, whether Cleopatra has been overhyped, what Julius Caesar was really thinking when he sided with her over her brother, the new frontiers in archeology, whether Herodotus can be trusted, what ancient Egypt knew about Israel and India, when Egyptian jewelry peaked and why, what triggered the sudden emergence of civilization across the ancient world, why a six-year-old Tyler knew King Tut better than Napoleon, and much more.

Excerpt:

COWEN: Either technologically or institutionally, what is it that the Persians had that the Egyptians did not?

WILKINSON: The Persians had a pretty formidable army. Their military technology was certainly superior to the Egyptians at the time that they conquered Egypt originally in the 6th century BC. Like many empires, I suppose, throughout history, they overreached themselves. They overextended themselves, and they found it increasingly hard to hold together this empire stretching all the way from the Aegean to the borders of India. Bits of the empire started to fragment and pull away. Egypt had always had this very strong sense of its own identity. When it had a chance to throw off the Persian yoke, it took it.

COWEN: Let’s think about some of the achievements of Ptolemaic Egypt as an era. Infrastructure. What did they do that was most impressive?

WILKINSON: Build Alexandria. Alexandria the city was a new foundation established by Alexander the Great to bear his name. Unlike all previous ancient Egyptian cities, it was a city built from the outset for commerce. It was a city built on the Mediterranean coast with a great natural harbor, with facilities for loading and offloading ships. It had a great lighthouse guarding the entrance to its harbor, which became one of the wonders of the world. The whole city was really designed from the get-go as a great commercial center looking outwards to the Mediterranean, rather than inwards to the rest of Egypt.

COWEN: Canals, artificial lake. What else did they do?

WILKINSON: They built a city quite unlike anything previously seen in the valley of the river Nile. In fact, any inhabitant today of a modern city would recognize the gridiron pattern of streets. Streets intersecting at right angles, with vast public buildings: that was something completely unheard of in Egypt until this point. This was the Manhattan of the ancient world, if you like, in scale, in grandeur, and in the level of commercial activity.

And:

COWEN: What were the main exports of the Alexandria region? What are they selling, making?

WILKINSON: Oh, the two big exports that account for the lion’s share of Egypt’s wealth at the time are gold and grain. Gold has been mined in Egypt for millennia up to this point, but it’s still the place in the ancient world that produces large quantities of gold. Of course, gold has always been a great currency of international commerce.

Then Egypt is famed as the breadbasket of the ancient world. It produces a superabundance of grain thanks to the fertility of the Nile and the benign climate. It produces more than it needs for its own consumption, by comparison with poorer agricultural regions in Greece and Asia Minor, which struggled to produce enough food. Yes, gold and grain were the absolute engine of Egyptian prosperity.

COWEN: There’s metalwork, there’s glass. What else is there, manufacturing, as we would call it today?

WILKINSON: Oh, yes. There’s a big ceramics industry, so producing not just pots, but terracotta statues and votive objects. There’s glassmaking, as you’ve said. There’s advanced metallurgy, goldsmithing, ironworking, copper and bronze foundries. There’s what we might call the decorative arts, so sculpture, painting. All of these things thrived in ancient Alexandria.

COWEN: Do they have living standards sustainably above subsistence, or is this a Malthusian equilibrium, where they get some wealth and then more people survive and the wage falls again, and it doesn’t get much above what is required to keep people alive?
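Cowen's question describes the standard Malthusian mechanism. As a minimal sketch, assuming a Cobb-Douglas economy with a fixed amount of land (every parameter value below is hypothetical and not from the conversation), a one-time productivity gain first lifts income per person, population then grows while income exceeds subsistence, and income drifts back to subsistence with a larger population:

```python
def simulate_malthus(periods=300, shock_at=10, tfp_before=1.0, tfp_after=1.5,
                     land=100.0, pop0=100.0, alpha=0.5,
                     subsistence=1.0, sensitivity=0.05):
    """Toy Malthusian economy (all parameters hypothetical).

    Income per person is tfp * (land / pop) ** alpha, i.e. Cobb-Douglas output
    with fixed land divided by population. Population grows when income is above
    subsistence and shrinks when it is below.
    """
    pop = pop0
    incomes = []
    for t in range(periods):
        tfp = tfp_after if t >= shock_at else tfp_before
        income = tfp * (land / pop) ** alpha  # output per person this period
        incomes.append(income)
        # Proportional population response to the income gap over subsistence
        pop *= 1 + sensitivity * (income - subsistence) / subsistence
    return incomes, pop


incomes, final_pop = simulate_malthus()
print(f"income before shock: {incomes[0]:.2f}")
print(f"income at shock:     {incomes[10]:.2f}")
print(f"income at the end:   {incomes[-1]:.3f}, population: {final_pop:.1f}")
```

With these illustrative numbers, income jumps to 1.5 times subsistence at the shock and then decays back toward 1.0, while population rises toward 225, the level at which 1.5 * (100 / N) ** 0.5 equals 1. In this toy model, living standards stay above subsistence only if productivity keeps rising faster than population can catch up.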

Recommended, informative and interesting throughout. And I am very happy to recommend all of Toby’s books, including his latest

Seven ways to avoid losing your job to AI

That is the theme of my latest Free Press column, here is one excerpt:

Principle five: Run experiments.

This is a more general version of the healthcare point. AI will generate so many new ideas and hypotheses, including for drugs and medical devices, but not only. Become a tester. Test new battery designs, new educational techniques, or new methods of conserving valued wildlife.

The demand for experiments will rise sharply, and most of those cannot be done by robots, at least not anytime soon.

Principle six: Gather data.

AI is a marvelous tool, but it relies on knowing lots about the world. That can stem from reading the internet, watching videos of people folding clothes, and hearing recordings of voices, among many other ways of absorbing information.

The more powerful the AI, the higher the returns from feeding it data, because it will make smart and useful inferences from those data. But most data in our world have never been put into AI models. Just consider corporate records, historical archives, referee reports for failed scientific papers, accounts of lab procedures, and much more. Most of that remains virgin territory.

The next few decades will bring an immense investment in feeding more data into the AIs. So there will be new jobs in gathering environmental data, job safety data, construction site data, corporate and management data, public health data, agricultural data, education data, and much more. Those jobs could be yours.

Recommended.

The new tranche of UAP videos

You can find them here: https://x.com/theblackvault/status/2057800997012197428?s=61

The AIs are “One of Us”

A general purpose AI model from OpenAI has produced a (dis)proof of an important conjecture. Tim Gowers writes:

AI has now solved a major open problem — one of the best known Erdos problems called the unit distance problem, one of Erdos’s favourite questions and one that many mathematicians had tried.

A number of prominent mathematicians comment. I enjoyed Thomas Bloom’s comments:

This was one of Erdős’ favourite problems – he first asked it in 1946 [14] and returned to it many times. (The site www.erdosproblems.com, on which it is Problem #90, currently lists 14 separate references, and there are no doubt more.) The influential collection of ‘Research Problems in Discrete Geometry’ by Brass, Moser, and Pach [8] describes it as ‘possibly the best known (and simplest to explain) problem in combinatorial geometry’. For an AI to produce a solution to a problem of this calibre is both surprising and impressive.

…On examining the construction, it becomes more clear how people had missed this before – it requires the confluence of several different unlikely events: that a good mathematician is

(1) spending significant time in thinking about the unit distance conjecture in the first place;

(2) seriously trying to disprove it, despite the oft-repeated belief of Erdős that it is true;

(3) believes that there is mileage in generalising the original construction to other number fields, and so is willing to expend significant time in exploring such constructions; and

(4) sufficiently familiar with the relevant parts of class field theory to recognise that the appropriately phrased question about infinite towers of number fields with appropriate parameters can be solved using existing theory.

The AI met all of these criteria, and its success here echoes previous achievements: it often produces the most surprising results by persevering down paths that a human may have dismissed as not worth their time to explore, combining superhuman levels of patience with familiarity with a vast array of technical machinery.

…perhaps some in the area will be a little disappointed with how little this tells us: it does not introduce any powerful new geometric tools, or hitherto unsuspected structural results, that a proof of the unit distance conjecture would likely have called for. Still, while perhaps not the proof of a conjecture that we had hoped for, no doubt this construction and the ideas involved will have a major impact in discrete geometry.

One aspect of this proof should not be overlooked: while the original proof produced by AI was completely valid, it was significantly improved by the human researchers at OpenAI and the many other mathematicians involved in the present paper. The human still plays a vital role in discussing, digesting, and improving this proof, and exploring its consequences.

The frontiers of knowledge are very spiky, and no doubt the coming months and years will see similar successes in many other areas of mathematics, where long-standing open problems are resolved by an AI revealing unexpected connections and pushing the existing technical machinery to its limit. AI is helping us to more fully explore the cathedral of mathematics we have built over the centuries; what other unseen wonders are waiting in the wings?

One way of putting this is that the mathematicians are now acknowledging that the AIs are “one of us”. Gooble Gobble! Read the AI’s chain of thought to understand why. I asked Claude how many people in the world could understand the proof:

A rough tiered estimate, treating “understand” as “could read the 42-page note and follow the argument without needing to learn new machinery from scratch”:

Tier 1 — could referee it cold (real working knowledge of class field towers + the Ellenberg–Venkatesh circle): roughly 150–400 people worldwide. This is essentially the active algebraic number theory community working near arithmetic statistics, plus a handful of arithmetic-geometry-adjacent combinatorialists. The author list itself is a decent proxy for the upper crust of this group.

Tier 2 — could understand it with a week or two of focused effort and some Wikipedia/textbook chasing (strong number theorists or combinatorialists outside the immediate subfield, plus sharp grad students past quals at top programs): roughly 2,000–5,000. Think most tenure-track number theorists, the top tier of extremal combinatorics, and arithmetic geometers generally.

Tier 3 — could grasp the structure of the argument from a Quanta-style exposition without verifying the steps: 50,000–200,000+, i.e., most working mathematicians and a chunk of physicists/CS theorists. This is not what you asked, but it’s where most of the public “understanding” will sit.

Robin (it’s happening)

Scientific discovery is driven by the iterative process of observation, hypothesis generation, experimentation, and data analysis. Despite recent advancements in applying artificial intelligence to biology, no system has yet automated all these stages [1, 2, 3]. Here, we introduce Robin, the first multi-agent system capable of fully automating both hypothesis generation and data analysis for experimental biology. By integrating literature search agents with data analysis agents, Robin can generate hypotheses, propose experiments, interpret experimental results, and generate updated hypotheses, achieving a semi-autonomous approach to scientific discovery. By applying this system, we were able to identify promising therapeutic candidates for dry age-related macular degeneration (dAMD), the major cause of blindness in the developed world [4, 5]. Robin proposed enhancing retinal pigment epithelium phagocytosis as a therapeutic strategy, and identified and confirmed in vitro efficacy for ripasudil and KL001. Ripasudil is a clinically-used Rho kinase (ROCK) inhibitor that has never previously been proposed for treating dAMD. To elucidate the mechanism of ripasudil-induced upregulation of phagocytosis, Robin then proposed and analyzed a follow-up RNA-seq experiment, which revealed upregulation of ABCA1, a lipid efflux pump and possible novel target. All hypotheses, experimental directions, data analyses, and data figures in the main text of this report were produced by Robin. As the first AI system to autonomously discover and validate novel therapeutic candidates within an iterative lab-in-the-loop framework, Robin establishes a new paradigm for AI-driven scientific discovery.

Here is the full article from Nature. And here are two other new Nature pieces on related topics.

“Is the scientific enterprise too risk-averse?”

I participated in an Open to Debate debate at Johns Hopkins not too long ago, argued yes, and my side saw a twelve-point shift in our favor. Here are some links:

Links to the full debate:


YouTube: https://youtu.be/AuPz09dpLSc

Also to be broadcast over NPR.

Meta-papers in science (from my email)

From Brennan Plaetzer:

Hi Tyler,

Your post yesterday argued AI will replace papers with meta-papers that synthesize, re-run, and extend prior work. I built one in oncology last month, before reading your post.

I ran my friend Omar Abdel-Wahab’s (MSK) last ten papers through an AI synthesis layer. This came out on top: an integrated, falsifiable hypothesis bridging two of his 2025 papers, one in Cancer Cell on a refractory MEK1 mutation, one in Cell on splicing-derived neoantigens. It comes with seven testable experiments his lab can run today. The move generalizes to any field: surface the questions hidden in plain view, the ones the source papers could answer with their own data but never asked.

https://page56capital.com/writings/cross-paper-synthesis

The “box” you described already exists in biology. It just doesn’t have a name there yet.

Brennan

Note that if you, in the future, do not do this kind of thing yourself, someone else, or their AI agent, will do it for you. Solve for the equilibrium!

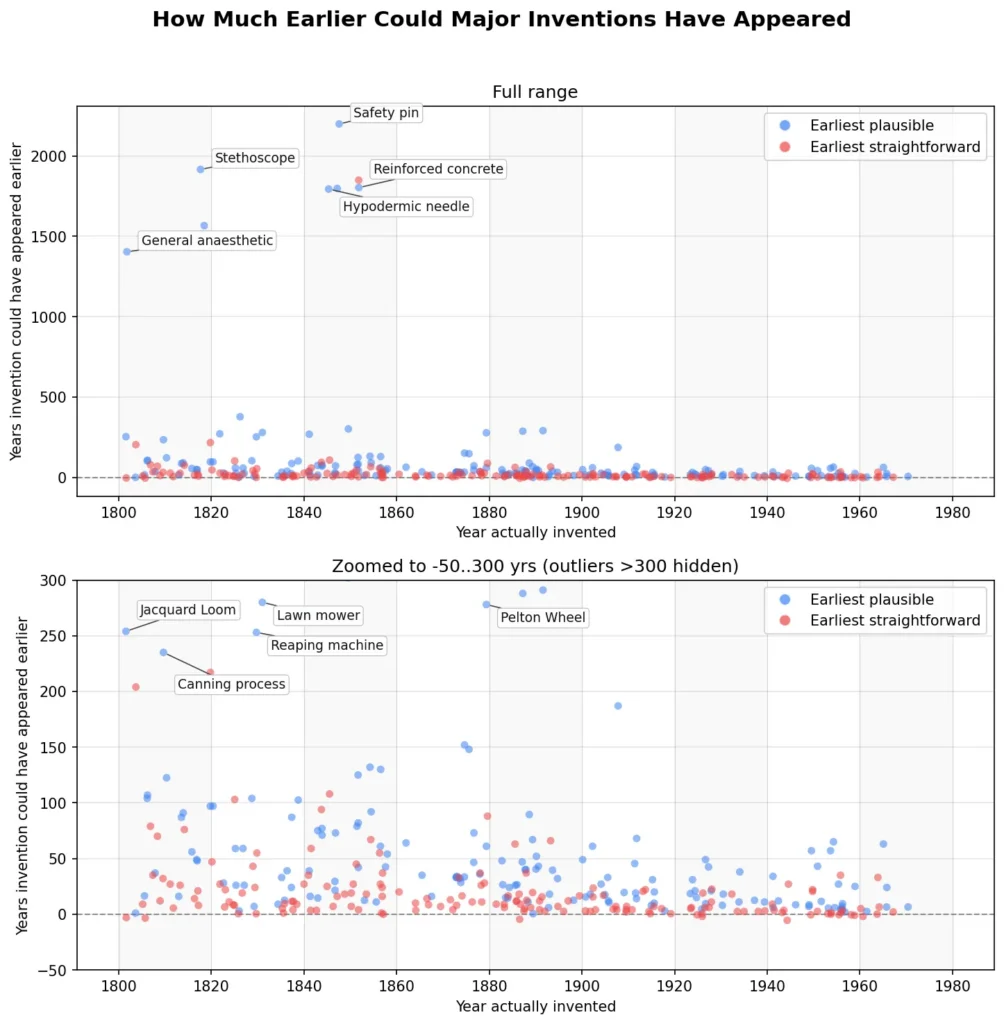

Ideas Behind Their Time: Part Two

In 2010 I wrote about Ideas Behind Their Time:

We are all familiar with ideas said to be ahead of their time; Babbage’s analytical engine and da Vinci’s helicopter are classic examples. We are also familiar with ideas “of their time,” ideas that were “in the air” and thus were often simultaneously discovered, such as the telephone, calculus, evolution, and color photography. What is less commented on is the third possibility, ideas that could have been discovered much earlier but which were not, ideas behind their time.

I gave experimental economics, randomized clinical trials and view morphing (“bullet time”) as examples. Jason Crawford has a list discussing the wheel, the steam engine and bicycles, among other possibilities. In some cases, further exploration indicates that an idea required precursors and so was not as far behind its time as first suspected; in rare cases, however, good ideas really could have been invented much earlier.

Using Claude, Brian Potter has significantly expanded the list by looking systematically across a wide range of inventions and asking whether each could have been invented earlier. Most could not. Put the other way, most useful technologies tend to be invented quite quickly once they are possible–this is reassuring. The airplane, for example, could not have been invented before a high power-to-weight engine, which arrived circa 1880, making the late 1880s the earliest feasible date for powered flight. Thus, the Wright Brothers (1903) were only just behind the earliest feasible date–and that is true for many inventions.

The ideas very far behind their time include the stethoscope, general anesthesia, and reinforced concrete; quite far behind are the Jacquard loom and canning. Is there a pattern here?

Addendum: Brian’s GitHub with the full prompt and output for each invention is here.

Using agents to build economic datasets

Constructing datasets from primary sources is one of the costliest tasks in empirical economics. We propose Deep Research on a Loop (DRIL), a methodology that uses AI agents to assemble datasets from publicly available sources. DRIL applies a fixed research instrument across a mapped unit space (e.g., countries by years), with a two-stage architecture separating design from implementation. The instrument specifies variables and coding rules, an evidence policy governs sources and citations, and data quality mechanisms track gaps and uncertainty explicitly. We exercise DRIL on a 2025 update of the Global Tax Expenditures Database for eight Latin American and Caribbean countries. The run produces 129 sources and 136 evidence records, covering 22 qualitative fields fully and 6 quantitative estimate types with documented gaps, at the cost of a standard LLM subscription comparable to a few hours of research-assistant work. We argue that even partial automation of dataset construction can shift the production function of empirical economics.

That is from a new NBER working paper by
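The abstract's structure (a fixed instrument, a mapped unit space such as countries by years, an evidence policy, explicit gap tracking) can be illustrated with a small loop. This is a minimal sketch under stated assumptions, not the authors' code: the class and function names are hypothetical, and `stub_research` is a canned stand-in for the AI research agent that would actually search sources and cite them.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Optional, Tuple

Unit = Tuple[str, int]  # (country, year): one cell of the mapped unit space


@dataclass(frozen=True)
class Instrument:
    """Design stage: variables fixed before any data are collected (hypothetical)."""
    variables: Tuple[str, ...]


@dataclass
class EvidenceRecord:
    unit: Unit
    variable: str
    value: Optional[str]
    source: Optional[str]  # citation demanded by the evidence policy


def run_loop(instrument: Instrument, countries, years,
             research_fn: Callable[[Unit, str], Tuple[Optional[str], Optional[str]]]):
    """Implementation stage: apply the same instrument to every unit, logging gaps."""
    records, gaps = [], []
    for unit in product(countries, years):
        for variable in instrument.variables:
            value, source = research_fn(unit, variable)
            # Assumed evidence policy: an uncited value is treated as missing
            if value is None or source is None:
                gaps.append((unit, variable))
                value = None
            records.append(EvidenceRecord(unit, variable, value, source))
    return records, gaps


def stub_research(unit: Unit, variable: str):
    """Hypothetical stand-in for an AI research agent; returns canned answers."""
    country, year = unit
    if variable == "revenue_forgone" and country == "Peru":
        return None, None  # pretend no source could be found
    return f"{variable} for {country} {year}", f"placeholder citation for {country}"


instrument = Instrument(variables=("legal_basis", "revenue_forgone"))
records, gaps = run_loop(instrument, ["Chile", "Peru"], [2023, 2024], stub_research)
print(len(records), "records,", len(gaps), "gaps:", gaps)
```

Freezing the `Instrument` is one way to keep design separate from implementation: the variables cannot drift while the loop runs, and every missing cell shows up in `gaps` rather than being silently filled.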

Another use of AI in research (from my email)

“Another thing we (John [Horton] and I) have thought about is having a swarm of AIs “fight” over a literature. They could take the cumulative datasets available and continuously argue until they understand the question. One line of thinking says they reach a stalemate (as scientists currently do). But we think not. More likely, they push evidentiary understanding to the limit and coalesce around what’s most probable — if not definitive!”

That is from Benjamin Manning.

Will AI kill the research paper?

Imagine taking a macroeconomics paper and adding a little button at the end “Press this button to update this paper with the latest macro data.”

All of a sudden you have multiple papers rather than one, and no single canonical version. It is the latter versions, not created directly by the authors, that people will look at.

Imagine adding another button, to either micro or macro papers “Please rerun these results using what the AI thinks might be five other different yet still plausible specifications.”

Then you have more papers yet.
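The second button amounts to what is sometimes called a specification curve or multiverse analysis: rerun the same estimate under several defensible choices and report the whole set. A minimal sketch, assuming a single regressor and five hand-picked transformations (the specification names and data are hypothetical, and nothing here is chosen by an AI):

```python
import math
from statistics import mean


def ols_slope(xs, ys):
    """Slope from a one-regressor OLS fit of ys on xs."""
    mx, my = mean(xs), mean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


# Hypothetical alternative specifications: each maps the raw data to the data actually fit
SPECIFICATIONS = {
    "levels": lambda xs, ys: (xs, ys),
    "logs": lambda xs, ys: ([math.log(x) for x in xs], [math.log(y) for y in ys]),
    "drop_first_obs": lambda xs, ys: (xs[1:], ys[1:]),
    "drop_last_obs": lambda xs, ys: (xs[:-1], ys[:-1]),
    "first_differences": lambda xs, ys: (
        [b - a for a, b in zip(xs, xs[1:])],
        [b - a for a, b in zip(ys, ys[1:])],
    ),
}


def rerun_under_specifications(xs, ys, specs=SPECIFICATIONS):
    """Re-estimate the same slope under every specification and return all results."""
    return {name: ols_slope(*transform(xs, ys)) for name, transform in specs.items()}


xs = [1, 2, 4, 7, 11]
ys = [3.1, 4.8, 9.2, 14.9, 23.1]
for name, slope in rerun_under_specifications(xs, ys).items():
    print(f"{name:>18}: {slope:.3f}")
```

Each entry in the returned dictionary is, in effect, one more "paper," which is the proliferation the post describes.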

Ultimately, why not just build a “meta-paper,” using AI, to answer any possible question about the subject area under consideration. This meta-paper would allow the reader, using AI, to make many sorts of modifications and additions to the basic work. The meta-paper also would allow the reader to add new data, to run additional robustness checks, and to do whatever else you might think of. Once again, the canonical version of the paper evolves away.

A researcher might spend a significant part of his or her career building such a meta-paper. Imagine a meta-paper, or sometimes I call it a “box,” devoted to answering questions about, say, fiscal policy, minimum wage hikes, or maybe the Industrial Revolution. Fed researchers would spend their entire careers, not writing papers, but improving the Fed’s “box” that answers questions about monetary policy and also prudential supervision.

Who will be good at doing such things? Is it the people today who become the top economists, or not? Will it be a highly decentralized endeavor, or, given the compute and team work requirements, a highly centralized one?

Economics is going to change a lot, as will many of the other sciences.

It is funny, and tragic, how much some of you are still obsessed with writing and publishing “papers.”

Which are the most common everyday phenomena that we don’t properly understand?

Off the top of my head:

• Lightning (how does it happen?)

• Sleep; dreams (why do they exist?)

• Glass (thermodynamics of formation)

• Turbulence (when does it start?)

• Morphogenesis (how does a creature know what should go where?)

• Rain (it seems to start faster than models would predict)

• Ice (dynamics of slipperiness)

• Static electricity (which material will donate electrons?)

• General anaesthetic. (And the mechanism of a lot of drugs, e.g. paracetamol.)

That is from Patrick Collison. It is a further interesting question how many of those questions will be answered by what is sometimes called AGI. Perhaps none of them? In at least some of those cases, what is scarce is experimental data, not reasoning per se.

The UAP report so far

I will stick with my earlier Free Press predictions:

The fact remains that, if you talk with insiders, they will confirm that the federal government faces some big mysteries. It seems that we have data on what appear to be craft that move very fast, have no visible means of propulsion, and can accelerate in a surprising manner. Radar, infrared, and other forms of data are cited to varying degrees, plus there are eyewitness pilot reports, broadly consistent with what our instruments are telling us.

And this:

Assuming a reasonable chunk of the data are declassified, I think we will simply see more of the same kind of material we’ve seen in the past: more data on entities that appear to move very quickly and in mysterious ways, but with no real explanations. We will see, as I’ve argued before, that the government itself does not know what is going on, and has been afraid to admit that. That may be the real “conspiracy” and why the veil of secrecy has been relatively difficult to pierce.

As of yesterday, there are plenty of additional videos of what seem to be glowing orbs moving fast and in unpredictable ways. Or try this one. Here is another weird one. Or try this. And another one, near military craft. And what is this?

One thing we can conclude is that the debunkers, who have been suggesting this is all camera tricks, parallax issues, or people not understanding how videos work, are proven wrong in general, even though they are right about some particular cases. On that point we can move on, as I have been arguing for some while. Mick West is not your proper guide here.

Nonetheless we still do not know what it all means, and I do not see proof of anything in particular.

I also will stress my earlier point that we are not going to see alien bodies or alien technologies, or anything meaningful connected to Roswell. That is sheer fantasy, or sometimes locos.

340 million hits in the first twelve hours? More people will be believing in aliens in any case, I suspect. Or will it be demons?

It is fashionable in the comments sections of blogs to call this topic a waste of time, but the serious people in the military and national security — most of whom do not cite alien presence — do not see it that way.

And they will be releasing more materials. These materials are being released because some subsection of “the Deep State” wants to know what is going on. As do I.

William Stanley Jevons as polymath

In the 1860s Jevons built a Logical Abacus, sometimes called a logical piano, a kind of early computer that could perform (some kinds of) logical operations faster than humans could. It is held in the Museum of the History of Science at Oxford University, and you can think of its structure and operation as broadly akin to a player piano in music. It was limited in its powers, geared mainly toward replicating Boolean logic, but extreme in its ultimate ambitions. Jevons understood the potential. In his written presentation of the project, Jevons cites the work of Charles Babbage and notes that “material machinery is capable, in theory at least, of rivalling the labours of the most practiced mathematicians in all branches of their science. Mind thus seems able to impress some of its highest attributes upon matter, and to create its own rival in the wheels and levers of an insensible machine.” Jevons understood that science would be able to tackle some of the most difficult projects, and he wanted to be on as many of those frontiers as possible. He understood that his own work was a mere beginning, and he wanted to press forward as much as possible.[25]

[25] See Jevons (1870, the quotation from p. 498), and also Maas (2005, chapter six). For a general background on Boolean logic, see Hailperin (1986).

Jevons also studied molecular motion in liquids and developed the concept of “pedesis,” a precursor of what we now call Brownian motion. That said, Jevons thought his pedesis was an electrical phenomenon related to osmosis, and so he turned out to be incorrect in his fundamental hypotheses. Nonetheless, this topic, like the others, showed that he had an observant mind and was obsessed with developing theories to explain anything and everything. He wasn’t just a pedant; rather, he made real contributions to a number of scientific fields above and beyond economics.[26]

[26] On Jevons on pedesis and Brownian motion, see Brush (1968).

Jevons also was a “born collector,” in the words of Keynes, and an extreme bibliomaniac. He accumulated thousands of books, lining the walls of his house and attic with them and storing still more in piles in the attic, which became a problem for his wife upon his passing.

That is from my recent generative book The Marginal Revolution: Rise and Decline, and the Pending AI Revolution.
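To give a feel for the Boolean work a logic machine like Jevons's was meant to mechanize, here is a rough software illustration of inference by elimination: list every combination of four terms and their negations (what Jevons called the "logical alphabet"), strike out those that contradict the premises, and read conclusions off the survivors. This is a sketch of the general logical procedure under my own assumptions, not a model of the machine's keys and levers; the function names are hypothetical, and the lowercase-for-negation display follows Jevons-style notation.

```python
from itertools import product

TERMS = ("A", "B", "C", "D")


def logical_alphabet(terms=TERMS):
    """Every combination of the terms and their negations: 2**4 = 16 for four terms."""
    return [dict(zip(terms, values))
            for values in product((True, False), repeat=len(terms))]


def all_are(subject, predicate):
    """Premise 'every S is P': rules out combinations where S holds but P does not."""
    return lambda combo: (not combo[subject]) or combo[predicate]


def eliminate(combinations, premises):
    """Strike out every combination that contradicts at least one premise."""
    return [c for c in combinations if all(premise(c) for premise in premises)]


def show(combo):
    """Display a combination, with a lowercase letter standing for a negated term."""
    return "".join(t if combo[t] else t.lower() for t in combo)


premises = [all_are("A", "B"), all_are("B", "C")]
survivors = eliminate(logical_alphabet(), premises)
print(len(survivors), "of 16 combinations survive:", " ".join(show(c) for c in survivors))

# Reading off a conclusion: every surviving combination containing A also contains C
print("All A are C:", all(c["C"] for c in survivors if c["A"]))
```

From "All A are B" and "All B are C," eight of the sixteen combinations survive, and every survivor containing A also contains C, so the syllogism's conclusion falls out mechanically.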

Growth is getting harder to find, not ideas

Relatively flat US output growth versus rising numbers of US researchers is often interpreted as evidence that ideas are getting harder to find. We build a new 45-year panel tracking the universe of US firms’ patenting to investigate the micro underpinnings of this claim, separately examining the relationships between research inputs and ideas (patents) versus ideas and growth. We find that average patents per R&D input are increasing, the elasticity of patents to R&D inputs is flat or rising, and there is no systematic evidence of a secular decline in patenting after controlling for research inputs. We then document a positive, significant, and fairly steady relationship between firms’ growth in ideas (patents) and labor productivity. Average firm growth after controlling for idea growth, however, declines. Together, these results suggest that innovative efforts play a key role in sustaining growth that has not diminished over the last four decades.

Here is the paper by Teresa C. Fort, Nathan Goldschlag, Jack Liang, Peter K. Schott, and Nikolas Zolas.
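A key quantity in the abstract is the elasticity of patents with respect to R&D inputs. A minimal sketch, assuming a constant-elasticity relationship and a plain log-log regression on made-up numbers (the paper's actual estimation uses a 45-year firm panel and is not reproduced here):

```python
import math


def elasticity(inputs, outputs):
    """OLS slope of log(outputs) on log(inputs).

    If outputs = k * inputs ** beta, this slope recovers beta: the percentage change
    in outputs associated with a one percent change in inputs.
    """
    lx = [math.log(x) for x in inputs]
    ly = [math.log(y) for y in outputs]
    mx, my = sum(lx) / len(lx), sum(ly) / len(ly)
    sxy = sum((a - mx) * (b - my) for a, b in zip(lx, ly))
    sxx = sum((a - mx) ** 2 for a in lx)
    return sxy / sxx


# Hypothetical firms: R&D spending and patents generated with a true elasticity of 0.8
rd_spending = [10, 20, 40, 80, 160]
patents = [3.0 * r ** 0.8 for r in rd_spending]

beta = elasticity(rd_spending, patents)
print(f"estimated elasticity: {beta:.3f}")
print(f"patents per unit of R&D: {[round(p / r, 3) for p, r in zip(patents, rd_spending)]}")
```

An elasticity of 0.8 means a 10 percent increase in R&D goes with roughly 8 percent more patents. The paper's claim concerns how this slope evolves across four decades, which a single cross-section like this one cannot show.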