Friday assorted links

1. Guess why Utah has so few restaurants.

2. Invisible College, August, for 18-22 year olds, Cambridge UK.

3. How did Kerala become relatively wealthy?

4. Sharks make clicking sounds? (NYT)

5. Digital Delacroix (NYT).

6. Bari Weiss interviews Leonard Leo.

7. Speculative.

8. Anthropic on how the AIs think. Blog post here.

Stripe economics of AI fellowship

The economics of AI remains surprisingly understudied, even as technical progress in artificial intelligence continues rapidly. The Stripe Economics of AI Fellowship aims to help fill that gap by supporting foundational academic research in the area.

We invite graduate students and early-career researchers who are interested in studying the economics of AI to apply, regardless of prior experience. Fellows receive a grant of at least $10k, participate in conferences with leading economists and technologists, and have the potential to access unique data via Stripe and its customers. Our initial cohort will include 15-20 fellows, and will form the foundation of a community at the bleeding edge of economic research.

The link has further information, and here is the tweet thread from the excellent Basil Halperin.

Is a VAT an export subsidy?

Here is a good post by Brian Albrecht, who works through the logic. A VAT, properly understood, does not favor, say, European producers over American producers. Work through his example if you don’t see this right away. In this sense the pro-tariff “fairness” arguments are quite wrong. Yet I am not entirely convinced by the rebuttals. If you compare “the European exporter market position” to “the American exporter market position” it is correct that parity of treatment holds.

But there is another way to pose the question and that is “should the resources in the EU be allocated toward export, or not?” And then exports are VAT-free, and within-EU sales generally are not VAT-free. So there is an encouragement to exports here. America has sales taxes, but VAT rates usually are higher. Thus you can say that Europe does more to encourage exporters than does the United States. Of course you can say the same about many other European government interventions. Germany’s notorious Sunday closing laws also encourage more exports. Send it to the US, and let it be sold on a Sunday, bitte! (Just not in Paramus, NJ.)
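Here is a minimal numeric sketch of the two framings, assuming a destination-based tax. The rates (20% VAT in the EU, 7% sales tax in the US), the pre-tax price of 100, and the function names are all illustrative assumptions, not figures from Albrecht's post.

```python
# Illustrative, assumed tax rates in percent; not taken from the linked post.
TAX_RATE_PCT = {"EU": 20, "US": 7}
PRE_TAX_PRICE = 100  # assumed identical for every producer


def consumer_price(pre_tax_price, destination):
    """Price paid by the buyer when the tax depends only on where the good is sold."""
    return pre_tax_price * (100 + TAX_RATE_PCT[destination]) / 100


def price_table():
    """Consumer price for every (producer origin, destination market) pair."""
    return {
        (origin, destination): consumer_price(PRE_TAX_PRICE, destination)
        for origin in TAX_RATE_PCT
        for destination in TAX_RATE_PCT
    }


def tax_wedge_gap(origin):
    """Tax rate on home sales minus tax rate on export sales, in percentage points."""
    export_market = "US" if origin == "EU" else "EU"
    return TAX_RATE_PCT[origin] - TAX_RATE_PCT[export_market]


if __name__ == "__main__":
    for (origin, destination), price in price_table().items():
        print(f"{origin} producer selling in {destination}: {price:.2f}")
    print("EU home-vs-export tax gap (pp):", tax_wedge_gap("EU"))
```

Within each market the two producers end up with the same consumer price, which is the parity point. The second framing shows up in `tax_wedge_gap`: under these assumed rates an EU producer faces a heavier tax on home sales than on sales to the US.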

From an American point of view, I don’t think anything is wrong with this kind of “export subsidy” (and that is not how I would describe it in a first-order sense, but we are steelmanning here). We get more German goods because they keep their shops closed on Sundays. Good for us, Alex nails the bottom line. Note that every time we liberalize and improve some part of the American economy, the “net European subsidy to exports” goes up. We want to keep on doing that, not its opposite.

It perhaps would be helpful if the people on the free trade side of the debate recognized this point a little more explicitly.

Where do stock market returns come from?

Here is a new and sure to be controversial piece from the JPE:

Why does the stock market rise and fall? From 1989 to 2017, the real per capita value of corporate equity increased at a 7.2% annual rate. We estimate that 40% of this increase was attributable to a reallocation of rewards to shareholders in a decelerating economy, primarily at the expense of labor compensation. Economic growth accounted for just 25% of the increase, followed by a lower risk price (21%) and lower interest rates (14%). The period 1952–88 experienced only one-third as much growth in market equity, but economic growth accounted for more than 100% of it.

That is by Daniel L. Greenwald, Martin Lettau, and Sydney C. Ludvigson. Of course in more recent times it is tech stocks that have done very well, and they also tend to elevate pay standards.
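To get a feel for the magnitudes in the abstract, here is a rough arithmetic sketch. It assumes 28 years of compounding (2017 minus 1989) and splits the annual rate in proportion to the reported shares. That proportional split is a simplification for illustration, not the paper's decomposition method.

```python
ANNUAL_REAL_GROWTH_PCT = 7.2  # from the abstract
YEARS = 2017 - 1989           # assumption: 28 years of compounding

# Shares of the increase reported in the abstract, in percent.
SHARES_PCT = {
    "reallocation to shareholders": 40,
    "economic growth": 25,
    "lower risk price": 21,
    "lower interest rates": 14,
}


def cumulative_growth_factor(annual_pct, years):
    """Total multiple implied by compounding annual_pct for the given number of years."""
    return (1 + annual_pct / 100) ** years


def annual_contributions(annual_pct, shares_pct):
    """Split the annual rate in proportion to each share (a simplifying assumption)."""
    return {name: annual_pct * share / 100 for name, share in shares_pct.items()}


if __name__ == "__main__":
    factor = cumulative_growth_factor(ANNUAL_REAL_GROWTH_PCT, YEARS)
    print(f"Cumulative multiple over {YEARS} years: {factor:.2f}")
    for name, pct in annual_contributions(ANNUAL_REAL_GROWTH_PCT, SHARES_PCT).items():
        print(f"{name}: {pct:.2f} percentage points per year")
```

Under these assumptions the four shares sum to 100, and 7.2% a year compounds to roughly a sevenfold increase over the period.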

Dean Ball on “how it will be”

Your daily life will feel more controllable and legible than it does now. Nearly everything will feel more personalized to you, ready for you whenever you need it, in just the way you like it. This won’t be because of one big thing, but because of unfathomable numbers of intelligent actions taken by computers that have learned how to use computers. Every product you buy, every device you use, every service you employ, will be brought to you by trillions of computers talking to themselves and to one another, making decisions, exercising judgments, pursuing goals.

At the same time, the world at large may feel more disordered and less legible. It is hard enough to predict how agents will transform individual firms. But when you start to think about what happens when every person, firm, and government has access to this technology, the possibilities boggle the mind.

You may feel as though you personally, and “society” in general, has less control over events than before. You may feel dwarfed by forces new and colossal. I suspect we have little choice but to embrace them. Americans’ sense that they have lost control will only be worsened if other countries embrace the transformation and we lag behind.

Here is the full post.

That was then, this is now

This year is likely to be remembered for the Covid-19 pandemic and for a significant presidential election, but there is a new contender for the most spectacularly newsworthy happening of 2020: the unveiling of GPT-3. As a very rough description, think of GPT-3 as giving computers a facility with words that they have had with numbers for a long time, and with images since about 2012…

The eventual uses of GPT-3 are hard to predict, but it is easy to see the potential. GPT-3 can converse at a conceptual level, translate language, answer email, perform (some) programming tasks, help with medical diagnoses and, perhaps someday, serve as a therapist. It can write poetry, dialogue and stories with a surprising degree of sophistication, and it is generally good at common sense — a typical failing for many automated response systems. You can even ask it questions about God.

…It also has the potential to outperform Google for many search queries, which could give rise to a highly profitable company.

…It is not difficult to imagine a wide variety of GPT-3 spinoffs, or companies built around auxiliary services, or industry task forces to improve the less accurate aspects of GPT-3. Unlike some innovations, it could conceivably generate an entire ecosystem.

Those are excerpts from my 2020 Bloomberg column on GPT-3.

Thursday assorted links

1. The people who still use typewriters.

2. “Vaccine skeptic hired to head federal study of immunizations and autism.”

3. David Perell on AI and writing.

4. Paralysed man stands again after receiving ‘reprogrammed’ stem cells.

5. Modeling how the military and the media interact. An underdiscussed topic.

6. How baseball analytics are keeping pitchers from true greatness (NYT).

The Madmen and the AIs

In Collaborating with AI Agents: Field Experiments on Teamwork, Productivity, and Performance, Harang Ju and Sinan Aral (both at MIT) paired humans and AIs in a set of marketing tasks to generate some 11,138 ads for a large think tank. The basic story is that working with the AIs increased productivity substantially. Important, but not surprising. But here is where it gets wild:

[W]e manipulated the Big Five personality traits for each AI, independently setting them to high or low levels using P2 prompting (Jiang et al., 2023). This allows us to systematically investigate how AI personality traits influence collaborative work and whether there is heterogeneity in their effects based on the personality traits of the human collaborators, as measured through a pre-task survey.

In other words, they created AIs which were high and low on the “Big Five” OCEAN metrics (Openness, Conscientiousness, Extraversion, Agreeableness and Neuroticism), and then they paired the different AIs with humans who were also rated on the Big Five.
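Setting five traits independently to high or low gives 2^5 = 32 possible AI personality profiles. The sketch below simply enumerates them. The `describe` helper is hypothetical and does not reproduce the P2 prompts used in the paper, and the excerpt does not say whether the study used every combination.

```python
from itertools import product

TRAITS = ("Openness", "Conscientiousness", "Extraversion", "Agreeableness", "Neuroticism")
LEVELS = ("low", "high")


def all_personality_profiles():
    """Every way of setting each Big Five trait independently to low or high."""
    return [dict(zip(TRAITS, levels)) for levels in product(LEVELS, repeat=len(TRAITS))]


def describe(profile):
    """Hypothetical one-line summary of a profile; not the paper's prompt text."""
    return ", ".join(f"{level} {trait}" for trait, level in profile.items())


if __name__ == "__main__":
    profiles = all_personality_profiles()
    print(len(profiles), "profiles")
    print(describe(profiles[0]))
```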

The results were quite amusing. For example, a neurotic AI tended to make a lot more copy edits unless paired with an agreeable human.

AI Alex: What do you think of this edit I made to the copy? Do you think it is any good?

Agreeable Alex: It’s great!

AI Alex: Really? Do you want me to try something else?

Agreeable Alex: Nah, let’s go with it!

AI Alex: Ok. 🙂

Similarly, if a highly conscientious AI and a highly conscientious human were paired together, they exchanged a lot more messages.

It’s hard to generalize from one study to know exactly which AI-human teams will work best, but we all know some teams just work better (every team needs a booster and a sceptic, for example). The fact that we can manipulate AI personalities to match them with humans, and even change the AI personalities over time, suggests that AIs can improve productivity in ways going beyond the ability of the AI to complete a task.

Hat tip: John Horton.

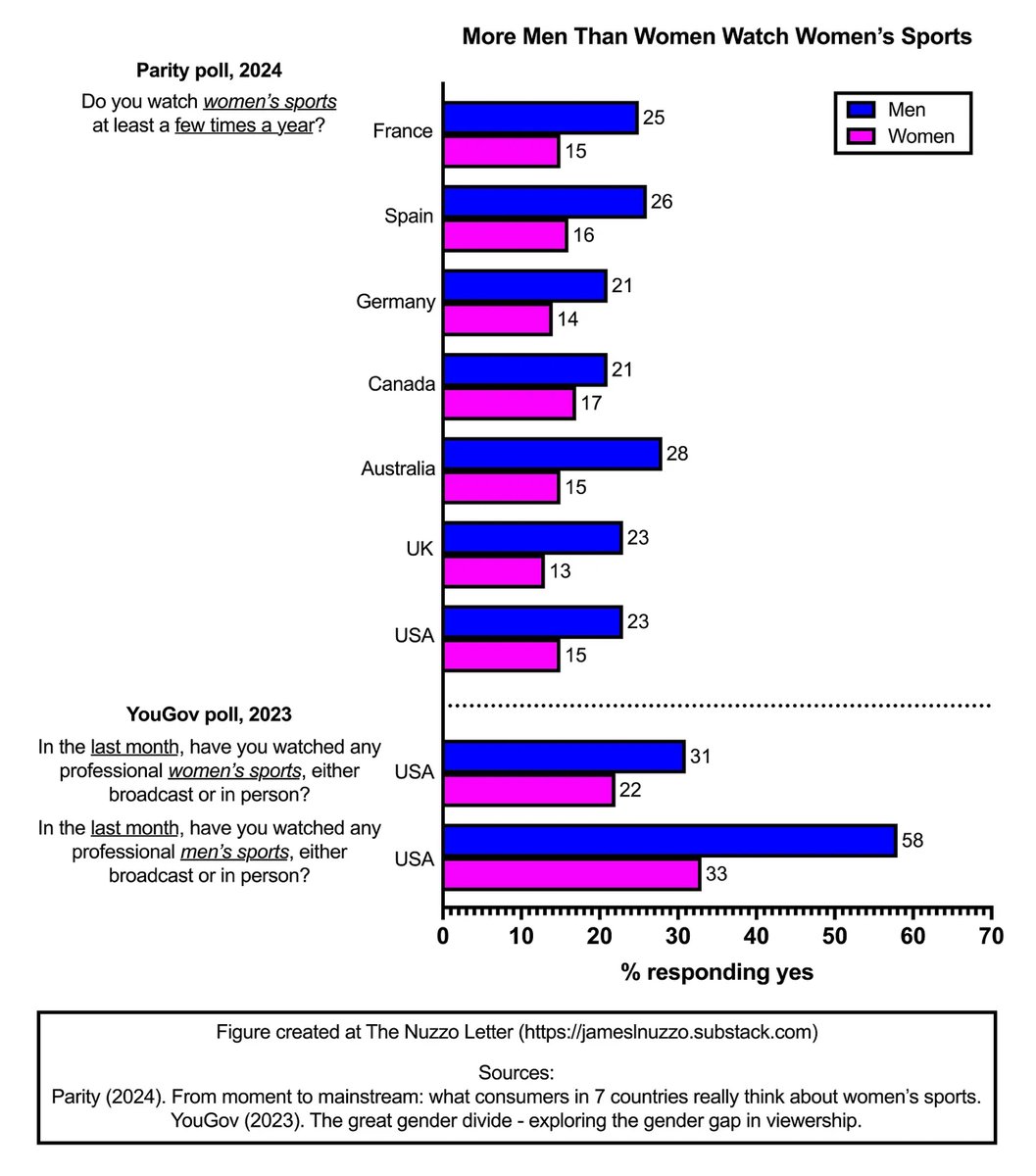

Men watch women’s sports more than women do

Here is the link, via Alex T.

Balaji on the new image release

A few thoughts on the new ChatGPT image release.

(1) This changes filters. Instagram filters required custom code; now all you need are a few keywords like “Studio Ghibli” or Dr. Seuss or South Park.

(2) This changes online ads. Much of the workflow of ad unit generation can now be automated, as per QT below.

(3) This changes memes. The baseline quality of memes should rise, because a critical threshold of reducing prompting effort to get good results has been reached.

(4) This may change books. I’d like to see someone take a public domain book from Project Gutenberg, feed it page by page into Claude, and have it turn it into comic book panels with the new ChatGPT. Old books may become more accessible this way.

(5) This changes slides. We’re now close to the point where you can generate a few reasonable AI images for any slide deck. With the right integration, there should be less bullet-point only presentations.

(6) This changes websites. You can now generate placeholder images in a site-specific style for any <img> tag, as a kind of visual Lorem Ipsum.

(7) This may change movies. We could see shot-for-shot remakes of old movies in new visual styles, with dubbing just for the artistry of it. Though these might be more interesting as clips than as full movies.

(8) This may change social networking. Once this tech is open source and/or cheap enough to widely integrate, every upload image button will have a generate image alongside it.

(9) This should change image search. A generate option will likewise pop up alongside available images.

(10) In general, visual styles have suddenly become extremely easy to copy, even easier than frontend code. Distinction will have to come in other ways.

Here is the full tweet.

Why LLMs are so good at economics

I can think of a few reasons:

At least for the time being, even very good LLMs cannot be counted on for originality. And at least for the time being, good economic reasoning does not require originality, quite the contrary.

Good chains of reasoning in economics are not too long and complicated. If they run on for very long, there is probably something wrong with the argument. The length of these effective reasoning chains is well within the abilities of the top LLMs today.

Plenty of good economics requires a synthesis of theoretical and empirical considerations. LLMs are especially good at synthesis.

In economic arguments and explanations, there are very often multiple factors. LLMs are very good at listing multiple factors, sometimes they are “too good” at it, “aargh! not another list, bitte…”

Economics journal articles are fairly high in quality and they are generally consistent with each other, being based on some common ideas such as demand curves, opportunity costs, gains from trade, and so on. Odds are that a good LLM has been trained “on the right stuff.”

A lot of core economics ideas are “hard to see from scratch,” but “easy to grasp once you see them.” This too plays to the strength of the models as strong digesters of content.

And so quality LLMs will be better at economics than many other fields of investigation.

Wednesday assorted links

1. Inaccurate beliefs about skill decay. George Loewenstein papers have been quite good historically…

2. New Dwarkesh book with Stripe Press, an oral history of AI.

3. New Alice Evans paper on the global Islamic revival.

5. 2025 color stats.

Rethinking regulatory fragmentation

Regulatory fragmentation occurs when multiple federal agencies oversee a single issue. Using the full text of the Federal Register, the government’s official daily publication, we provide the first systematic evidence on the extent and costs of regulatory fragmentation. Fragmentation increases the firm’s costs while lowering its productivity, profitability, and growth. Moreover, it deters entry into an industry and increases the propensity of small firms to exit. These effects arise from redundancy and, more prominently, from inconsistencies between government agencies. Our results uncover a new source of regulatory burden, and we show that agency costs among regulators contribute to this burden.

That is from a new paper by Joseph Kalmenovitz, Michelle Lowry, and Ekaterina Volkova, forthcoming in Journal of Finance. Via the excellent Kevin Lewis.

Tuesday assorted links

1. 23 Australians sent to the hospital by red ant stings.

2. Maurice Obstfeld on the trade deficit.

3. William Vollmann update, good piece.

4. The State Capacity crisis, and a popular version here.

Jonathan Bechtel on AI tutoring (from my email)

You recently mentioned the Alpha School and their claims about AI tutoring. I share the skepticism expressed in your comments section regarding selection bias and the lack of validated academic benchmarks.

I wanted to highlight a more rigorously evaluated project called Tutor CoPilot, conducted jointly by Stanford’s NSSA and the online tutoring firm FEVTutor (sadly they’ve since gone bankrupt). To my knowledge, it’s the first and only RCT examining AI-assisted tutoring in real K-12 school districts.

Here’s the study: https://nssa.stanford.edu/studies/tutor-copilot-human-ai-approach-scaling-real-time-expertise

Key findings:

- Immediate session-level learning outcomes improved by 4-9%.

- Remarkably, the tool impacted tutors even more than students. After six weeks, inexperienced tutors reached performance parity with seasoned tutors, and previously low-performing tutors achieved average-level results.

Having contributed directly to the implementation, I observed tutors adapting their interactions based on insights from the AI. This study did not measure its impact on more distal measures of learning like standardized tests and benchmark assessments, but this type of research is in the works at various organizations.

Given your recent writings on AI and education, I thought you’d find this compelling.