Category: Science

Sentences to ponder

In fact, it was the Obama administration that paused funding for high-risk GoF studies in 2014. The ban was lifted by none other than Donald Trump in 2017. At the time, outlets like Scientific American and Science covered the decision, in articles that quoted scientists talking about what could go wrong. Remind yourself of this the next time you see rightists trumpeting some headline showing the media being wrong about something.

That is from Richard Hanania’s Substack.

Major reforms at the NSF

The National Science Foundation (NSF), already battered by White House directives and staff reductions, is plunging into deeper turmoil. According to sources who requested anonymity for fear of retribution, staff were told today that the agency’s 37 divisions—across all eight NSF directorates—are being abolished and the number of programs within those divisions will be drastically reduced. The current directors and deputy directors will lose their titles and might be reassigned to other positions at the agency or elsewhere in the federal government.

The consolidation appears to be driven in part by President Donald Trump’s proposal to cut the agency’s $9 billion budget by 55% for the 2026 fiscal year that begins on 1 October. NSF’s decision to abolish its divisions could also be part of a larger restructuring of the agency’s grantmaking process that involves adding a new layer of review. NSF watchers fear that a smaller, restructured agency could be more vulnerable to pressure from the White House to fund research that suits its ideological bent.

As soon as this evening, NSF is also expected to send layoff notices to an unspecified number of its 1700-member staff. The remaining staff and programs will be assigned to one of the eight smaller directorates. Staff will receive a memo on Friday “with details to be finalized by the end of the fiscal year,” sources tell Science. The agency is also expected to issue another round of notices tomorrow terminating grants that have already been awarded, sources say. In the past 3 weeks, the agency has pulled the plug on almost 1400 grants worth more than $1 billion.

Here is more information, this story is still developing…

My excellent Conversation with Jack Clark

This was great fun and I learned a lot, here is the audio, video, and transcript. Here is part of the episode summary:

Jack and Tyler explore which parts of the economy AGI will affect last, where AI will encounter the strongest legal obstacles, the prospect of AI teddy bears, what AI means for the economics of journalism, how competitive the LLM sector will become, why he’s relatively bearish on AI-fueled economic growth, how AI will change American cities, what we’ll do with abundant compute, how the law should handle autonomous AI agents, whether we’re entering the age of manager nerds, AI consciousness, when we’ll be able to speak directly to dolphins, AI and national sovereignty, how the UK and Singapore might position themselves as AI hubs, what Clark hopes to learn next, and much more.

An excerpt:

COWEN: Say 10 years out, what’s your best estimate of the economic growth rate in the United States?

CLARK: The economic growth rate now is on the order of 1 percent to 2 percent.

COWEN: There’s a chance at the moment, we’re entering a recession, but at average, 2.2 percent, so let’s say it’s 2.2.

CLARK: I think my bear case on all of this is 3 percent, and my bull case is something like 5 percent. I think that you probably hear higher numbers from lots of other people.

COWEN: 20 and 30, I hear all the time. To me, it’s absurd.

CLARK: The reason that my numbers are more conservative is, I think that we will enter into a world where there will be an incredibly fast-moving, high-growth part of the economy, but it is a relatively small part of the economy. It may be growing its share over time, but it’s growing from a small base. Then there are large parts of the economy, like healthcare or other things, which are naturally slow-moving, and may be slow in adoption of this.

I think that the things that would make me wrong are if AI systems could meaningfully unlock productive capacity in the physical world at a really surprisingly high compounding growth rate, automating and building factories and things like this.

Even then, I’m skeptical because every time the AI community has tried to cross the chasm from the digital world to the real world, they’ve run into 10,000 problems that they thought were paper cuts but, in sum, add up to you losing all the blood in your body. I think we’ve seen this with self-driving cars, where very, very promising growth rate, and then an incredibly grinding slow pace at getting it to scale.

I just read a paper two days ago about trying to train human-like hands on industrial robots. Using reinforcement learning doesn’t work. The best they had was a 60 percent success rate. If I have my baby, and I give her a robot butler that has a 60 percent accuracy rate at holding things, including the baby, I’m not buying the butler. Or my wife is incredibly unhappy that I bought it and makes me send it back.

As a community, we tend to underestimate that. I may be proved to be an unrealistic pessimist here. I think that’s what many of my colleagues would say, but I think we overestimate the ease with which we get into a physical world.

COWEN: As I said in print, my best estimate is, we get half a percentage point of growth a year. Five percent would be my upper bound. What’s your scenario where there’s no growth improvement? If it’s not yours, say there’s a smart person somewhere in Anthropic — you don’t agree with them, but what would they say?

Interesting throughout, definitely recommended.

Has Clothing Declined in Quality?

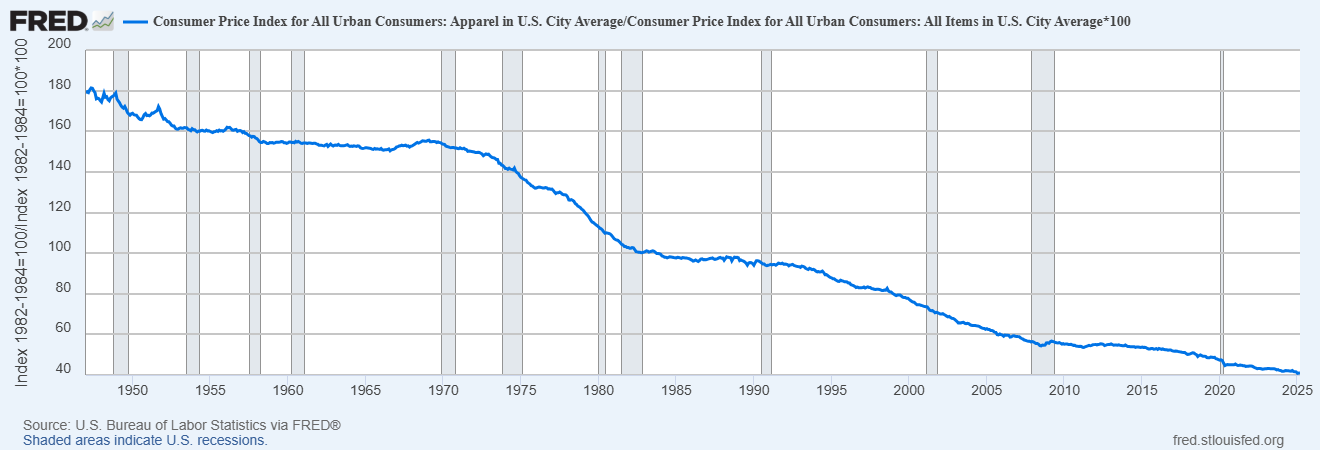

The Office of the U.S. Trade Representative (USTR) recently tweeted that they wanted to bring back apparel manufacturing to the United States. Why would anyone want more jobs with long hours and low pay, whether historically in the US or currently in places like Bangladesh? Thanks in part to international trade, the real price of clothing has fallen dramatically (see figure below). Clothing expenditure dropped from 9-10% of household budgets in the 1960s (down from 14% in 1900) to about 3% today.

Apparently, however, not everyone agrees. While some responses to my tweet revealed misunderstandings of basic economics, one interesting counter-claim emerged–the low price of imported clothing has been a false bargain, the argument goes, because the quality of clothing has fallen.

The idea that clothing has fallen in quality is very common (although it’s worth noting that this complaint was also made more than 50 years ago, suggesting a nostalgia bias, like the fact that the kids today are always going to hell). But are there reliable statistics documenting a decline in quality? In some cases, there are! Jeans from the 1960s-80s, for example, were often 13–16 oz denim, compared to 9–11 oz today. According to some sources, the average garment life is down modestly. The statistical evidence is not great but the anecdotes are widespread and I shall accept them. Most sources date the decline in quality to the fast fashion trend which took off in the 1990s and that provides a clue to what is really going on.

Fast fashion, led by firms like Zara, is a business model that focuses on rapidly transforming street style and runway trends into mass-produced, low-cost clothing—sometimes from runway to store within weeks. The model is not about timeless style but about synchronized consumption: aligning production with ephemeral cultural signals, i.e. to be fashionable, which is to say to be on trend, au-courant and of the moment.

It doesn’t make sense to criticize fast fashion for lacking durability—by design, it isn’t meant to last. Making it durable would actually be wasteful. The product isn’t just clothing; it’s fashionable clothing. And in that sense, quality has improved: fast fashion is better than ever at delivering what’s current. Critics who lament declining quality miss the point—it’s fun to buy new clothes and if consumers want to buy new clothes it doesn’t make sense to produce long lasting clothes. People do own many more pieces of clothing today than in the past but the flow is the fun.

So my argument is that the decline in “quality” clothing has little to do with the shift to importing but instead is consumer-driven and better understood as an increase in the quality of fashion. Testing my theory isn’t hard. Consider clothing where function, not just fashion, is paramount: performance sportswear and Personal Protective Equipment (PPE).

There has been a massive and obvious improvement in functional clothing. The latest Gore-Tex jackets, for example, are more than five times as water resistant (28 000 mm hydrostatic head) compared to the best waxed cotton technology of the past (~5 000 mm) and they are breathable (!) and lighter. Or consider Polartec winter jackets: originally developed for the military, these jackets have the incredible property of releasing heat when you are active but holding it in when you are inactive. (In the past, mountain climbers and workers in extreme environments had to add or strip off layers to prevent over-heating or freezing while exerting effort or resting.) Amazing new super shoes can actually help runners to run faster! Now that is high quality. Personal protective equipment has also increased in quality dramatically. Industrial workers and intense sports enthusiasts can now wear impact resistant gloves which use non-Newtonian polymers that stiffen on impact to reduce hand injuries.

Moreover, it’s not just functional clothing that has increased in quality. For those willing to look, there is in fact plenty of high-quality clothing readily available. From Iron Heart, for example, you can buy jeans made with 21oz selvedge indigo denim produced in Japan. Pair with a high-quality Ralph Lauren shirt, a Mackinaw Wool Cruiser Jacket and a nice pair of Alden boots. Experts like the excellent Derek Guy regularly highlight such high-quality options. Of course, when Derek Guy discusses clothes like this people complain about the price and accuse him of being an elitist snob. Sigh. Tradeoffs are everywhere.

Critics long for a past when goods were cheap, high quality, and Made in America—but that era never really existed. Clothing in the past was more expensive and often low quality. To the extent that some products in the past were of higher quality–heavier fabric jeans, for example–that was often because the producers of the time couldn’t produce it less expensively. Technology and trade have increased variety along many dimensions, including quality. As with fast fashion, lower quality on some dimensions can often produce a superior product. And, of course, it should be obvious but it needs saying: products made abroad can be just as good—or better—than those made domestically. Where something is made tells you little about how well it’s made.

The bottom line is that international trade has brought us more options, and if today’s household were to redirect the historical 9-10% share of income to clothing, it could absolutely buy garments that are heavier, better-constructed, and longer-lived than the typical mid-century mass-market clothing.

How are economics publications changing?

This study examines publications in three leading general economics journals from the 1960s through the 2020s, considering levels and trends in the demographics of authors, methodologies of the studies, and patterns of co-authorship. The average age of authors has increased nearly steadily; there has been a sharp increase in the fraction of female authors; the number of authors per paper has risen steadily; and there has been a pronounced shift to articles using newly generated data. All but the first of these trends have been most pronounced in the most recent decade. The study also examines the relationships among these trends.

That is from a new NBER working paper by Daniel Hamermesh.

Markets in everything

Creative powerhouse VML, genomic innovators The Organoid Company, and sustainable biotechnology firm Lab-Grown Leather have joined forces to develop the world’s first T-Rex leather made using the extinct creature’s DNA…

“This project is a remarkable example of how we can harness cutting-edge genome and protein engineering to create entirely new materials. By reconstructing and optimizing ancient protein sequences, we can design T. Rex leather, a biomaterial inspired by prehistoric biology, and clone it into a custom-engineered cell line,” said Thomas Mitchell, CEO of The Organoid Company…

While it was previously believed that dinosaur DNA wouldn’t survive for millions of years, recent discoveries have found collagen preserved in various dinosaur fossils, including an 80-million-year-old T Rex.

Last year, MIT researchers decoded how the dinosaur collagen survived for so long. Interestingly, they discovered a specific atomic mechanism that shields collagen from water’s damaging effects.

In this new work, the T-rex-based leather material creation method differs from plant-based or synthetic alternatives by focusing on growing biological structures in a lab. This bio-fabrication process directly cultivates leather-like tissue from cells.

The process of creating T-Rex leather uses fossilized dinosaur collagen as a template. Using this, the team will generate a complete collagen sequence for the T-Rex to cultivate new skin.

The collagen sequence will be translated into DNA and introduced into Lab-Grown Leather’s cells.

Here is the full story, via Mike Doherty. The actual product might be ready by the end of the year, at what price I do not know.

We need more elitism

Even though the elites themselves are highly imperfect. That is the theme of my latest FP column. Excerpt:

Very often when people complain about “the elites,” they are not looking in a sufficiently elitist direction.

A prime example: It is true during the pandemic that the CDC and other parts of the government gave us the impression that the vaccines would stop or significantly halt transmission of the coronavirus. The vaccines may have limited transmission to some partial degree by decreasing viral load, but mostly this was a misrepresentation, perhaps motivated by a desire to get everyone to take the vaccines. Yet the vaccine scientists—the real elites here—were far more qualified in their research papers and they expressed a more agnostic opinion. The real elites were not far from the truth.

You might worry, as I do, that so many scientists in the United States have affiliations with the Democratic Party. As an independent, this does induce me to take many of their policy prescriptions with a grain of salt. They might be too influenced by NPR and The New York Times, and more likely to favor government action than more decentralized or market-based solutions. Still, that does not give me reason to dismiss their more scientific conclusions. If I am going to differ from those, I need better science on my side, and I need to be able to show it.

A lot of people do not want to admit it, but when it comes to the Covid-19 pandemic the elites, by and large, actually got a lot right. Most importantly, the people who got vaccinated fared much better than the people who did not. We also got a vaccine in record time, against most expectations. Operation Warp Speed was a success. Long Covid did turn out to be a real thing. Low personal mobility levels meant that often “lockdowns” were not the real issue. Most of that economic activity was going away in any case. Most states should have ended the lockdowns sooner, but they mattered less than many critics have suggested. Furthermore, in contrast to what many were predicting, those restrictions on our liberty proved entirely temporary.

Recommended.

Subterranean sentences to ponder

But the fact that it’s commonplace is precisely why Earth’s subsurface biosphere is so compelling. Mud is everywhere, which means it is important. If you add up the total amount of mud underneath all the world’s oceans, you come up with a volume equivalent to about the entire Atlantic Ocean. And, per cubic meter, there are 100 to 100,000 times more microbial cells in mud than there are in seawater. That means that there’s so much intraterrestrial life in the subsurface that it’s hard to even fathom it. The total amount of microbial cells in the marine sediment subsurface is estimated to be 2.9 × 10^29 cells. This is about 10,000 times more than the estimated number of stars in the universe. But that’s not the whole subsurface. You’d have to at least double this number to include the microbial cells living deep underneath the land. And some of these cells may have found pockets where the food is more abundant than the average location, so more cells can live there than our models predict. For these reasons, the actual number of microbial cells in the subsurface biosphere is certain to be much higher than our current estimates.

That is from the new and interesting Intraterrestrials: Discovering the Strangest Life on Earth, by Karen G. Lloyd.

Parallels between our current time and 17th century England

That is the topic of my recent essay for The Free Press. Excerpt:

Ideologically, the English 17th century was weird above all else.

Millenarianism blossomed, and the occult and witchcraft became stronger obsessions. This was an age of religious and economic upheaval; King James I even wrote a book partly about witches called Daemonologie. The greater spread of pamphlets and books meant that witch accusations circulated more widely and more rapidly, and so the 1604 Witchcraft Act applied harsher punishments to supposed witches.

People were more likely to fear imminent transformation, and new groups sprouted up with names such as “Fifth Monarchy Men,” devoted to the idea that a new reign of Christ would usher in the end of the world. Protestantism splintered, giving rise to Puritanism and numerous sects, many of them extreme.

Meanwhile, Roger Williams brought ideas of free speech and freedom of conscience to America, founding what later became the state of Rhode Island. The development of economics as a science with an understanding of markets (credit Nicholas Barbon and Dudley North) dates from that time, as do the first libertarians, namely the Levellers, a liberty-oriented group from the time of the English Civil War.

All of these developments were supported by the falling price of printing, giving rise to an extensive use of pamphlets and broadsheets to communicate and debate ideas, often in London coffeehouses. Johannes Gutenberg had built the printing press for Europe much earlier, in the middle of the 15th century—but 17th-century England was the time and place when a commercial middle class could start to afford buying printed works.

I explore the parallels with today at the link, recommended.

A Blueprint for FDA Reform

The new FDA report from Joe Lonsdale and team is impressive. It has a lot of new material, is rich in specifics and bold in vision. Here are just a few of the recommendations which caught my eye:

From the prosaic: GMP is not necessary if you are not manufacturing:

In the U.S., anyone running a clinical trial must manufacture their product under full Good Manufacturing Practices (GMP) regardless of stage. This adds enormous cost (often $10M+) and more importantly, as much as a year’s delay to early-stage research. Beyond the cost and time, these requirements are outright irrational: for example, the FDA often requires three months of stability testing for a drug patients will receive after two weeks. Why do we care if it’s stable after we’ve already administered it? Or take AAV manufacturing—the FDA requires both a potency assay and an infectivity assay, even though potency necessarily reflects infectivity.

This change would not be unprecedented either. By contrast, countries like Australia and China permit Phase 1 trials with non-GMP drug with no evidence of increased patient harm.

The FDA carved out a limited exemption to this requirement in 2008, but its hands are tied by statute from taking further steps. Congress must act to fully exempt Phase 1 trials from statutory GMP. GMP has its place in commercial-scale production. But patients with six months to live shouldn’t be denied access to a potentially lifesaving therapy because it wasn’t made in a facility that meets commercial packaging standards.

Design data flows for AIs:

With modern AI and digital infrastructure, trials should be designed for machine-readable outputs that flow directly to FDA systems, allowing regulators to review data as it accumulates without breaking blinding. No more waiting nine months for report writing or twelve months for post-trial review. The FDA should create standard data formats (akin to GAAP in finance) and waive documentation requirements for data it already ingests. In parallel, the agency should partner with a top AI company to train an LLM on historical submissions, triaging reviewer workload so human attention is focused only where the model flags concern. The goal is simple: get to “yes” or “no” within weeks, not years.

Publish all results:

Clinical trials for drugs that are negative are frequently left unpublished. This is a problem because it slows progress and wastes resources. When negative results aren’t published, companies duplicate failed efforts, investors misallocate capital, and scientists miss opportunities to refine hypotheses. Publishing all trial outcomes — positive or negative—creates a shared base of knowledge that makes drug development faster, cheaper, and more rational. Silence benefits no one except underperforming sponsors; transparency accelerates innovation.

The FDA already has the authority to do so under section 801 of the FDAAA, but failed to adopt a more expansive rule in the past when it created clinicaltrials.gov. Every trial on clinicaltrials.gov should have a publication associated with it that is accessible to the public, to benefit from the sacrifices inherent in a patient participating in a clinical trial.

To the visionary:

We need multiple competing approval frameworks within HHS and/or FDA. Agencies like the VA, Medicare, Medicaid, or the Indian Health Service should be empowered to greenlight therapies for their unique populations. Just as the DoD uses elite Special Operations teams to pioneer new capabilities, HHS should create high-agency “SWAT teams” that experiment with novel approval models, monitor outcomes in real time using consumer tech like wearables and remote diagnostics, and publish findings transparently. Let the best frameworks rise through internal competition—not by decree, but by results.

…Clinical trials like the RECOVERY trial and manufacturing efforts like Operation Warp Speed were what actually moved the needle during COVID. That’s what must be institutionalized. Similarly, we need to pay manufacturers to compete in rapidly scaling new facilities for drugs already in shortage today. This capacity can then be flexibly retooled during a crisis.

Right now, there’s zero incentive to rapidly build new drug or device manufacturing plants because FDA reviews move far too slowly. Yet, when crisis strikes, America must pivot instantly—scaling production to hundreds of millions of doses or thousands of devices within weeks, not months or years. To build this capability at home, the Administration and FDA should launch competitive programs that reward manufacturers for rapidly scaling flexible factories—similar to the competitive, market-driven strategies pioneered in defense by the DIU. Speed, flexibility, and scale should be the benchmarks for success, not bureaucratic checklists. While the drugs selected for these competitive efforts shouldn’t be hypothetical—focus on medicines facing shortages right now. This ensures every dollar invested delivers immediate value, eliminating waste and strengthening our readiness for future crises.

To prepare for the next emergency, we need to practice now. That means running fast, focused clinical trials on today’s pressing questions—like the use of GLP-1s in non-obese patients—not just to generate insight, but to build the infrastructure and muscle memory for speed.

Read the whole thing.

Hat tip: Carl Close.

The Madmen and the AIs

In Collaborating with AI Agents: Field Experiments on Teamwork, Productivity, and Performance, Harang Ju and Sinan Aral (both at MIT) paired humans and AIs in a set of marketing tasks to generate 11,138 ads for a large think tank. The basic story is that working with the AIs increased productivity substantially. Important, but not surprising. But here is where it gets wild:

[W]e manipulated the Big Five personality traits for each AI, independently setting them to high or low levels using P2 prompting (Jiang et al., 2023). This allows us to systematically investigate how AI personality traits influence collaborative work and whether there is heterogeneity in their effects based on the personality traits of the human collaborators, as measured through a pre-task survey.

In other words, they created AIs which were high or low on each of the “Big Five” OCEAN traits (Openness, Conscientiousness, Extraversion, Agreeableness and Neuroticism) and then paired the different AIs with humans who were also rated on the Big Five.

The results were quite amusing. For example, a neurotic AI tended to make a lot more copy edits unless paired with an agreeable human.

AI Alex: What do you think of this edit I made to the copy? Do you think it is any good?

Agreeable Alex: It’s great!

AI Alex: Really? Do you want me to try something else?

Agreeable Alex: Nah, let’s go with it!

AI Alex: Ok. 🙂

Similarly, if a highly conscientious AI and a highly conscientious human were paired together they exchanged a lot more messages.

It’s hard to generalize from one study to know exactly which AI-human teams will work best, but we all know some teams just work better (every team needs a booster and a sceptic, for example). The fact that we can manipulate AI personalities to match them with humans, and even change the AI personalities over time, suggests that AIs can improve productivity in ways going beyond the ability of the AI to complete a task.

Hat tip: John Horton.

What Follows from Lab Leak?

Does it matter whether SARS-CoV-2 leaked from a lab in Wuhan or had natural zoonotic origins? I think on the margin it does matter.

First, and most importantly, the higher the probability that SARS-CoV-2 leaked from a lab the higher the probability we should expect another pandemic.* Research at Wuhan was not especially unusual or high-tech. Modifying viruses such as coronaviruses (e.g., inserting spike proteins, adapting receptor-binding domains) is common practice in virology research and gain-of-function experiments with viruses have been widely conducted. Thus, manufacturing a virus capable of killing ~20 million human beings or more is well within the capability of, say, ~500-1000 labs worldwide. The number of such labs is growing, and such research is becoming less costly and easier to conduct. Thus, lab-leak means the risks are larger than we thought and increasing.

A higher probability of a pandemic raises the value of many ideas that I and others have discussed such as worldwide wastewater surveillance, developing vaccine libraries and keeping vaccine production lines warm so that we could be ready to go with a new vaccine within 100 days. I want to focus, however, on what new ideas are suggested by lab-leak. Among these are the following.

Given the risks, a “Biological IAEA” with authority similar to that of the International Atomic Energy Agency to conduct unannounced inspections at high-containment labs does not seem outlandish. (Indeed the Bulletin of the Atomic Scientists is about the only organization to have begun to study the issue of pandemic lab risk.) Under the Biological Weapons Convention such authority already exists but it has never been used for inspections–mostly because of opposition by the United States–and because the meaning of biological weapon is unclear, as pretty much everything can be considered dual use. Notice, however, that nuclear weapons have killed ~200,000 people while accidental lab leak has probably killed tens of millions of people. (And COVID is not the only example of deadly lab leak.) Thus, we should consider revising the Biological Weapons Convention to something like a Biological Dangers Convention.

BSL3 and especially BSL4 safety procedures are very rigorous, thus the issue is not primarily that we need more regulation of these labs but rather to make sure that high-risk research isn’t conducted under weaker conditions. Gain of function research of viruses with pandemic potential (e.g. those with potential aerosol transmissibility) should be considered high-risk and only conducted when it passes a review and is done under BSL3 or BSL4 conditions. Making this credible may not be that difficult because most scientists want to publish. Thus, journals should require documentation of biosafety practices as part of manuscript submission and no journal should publish research that was done under inappropriate conditions. A coordinated approach among major journals (e.g., Nature, Science, Cell, Lancet) and funders (e.g. NIH, Wellcome Trust) can make this credible.

I’m more regulation-averse than most, and tradeoffs exist, but COVID-19’s global economic cost, estimated in the tens of trillions, so vastly outweighs the comparatively minor cost of upgrading global BSL2 labs and improving monitoring that there is clear room for making everyone safer without compromising research. Incredibly, five years after the crisis there has been no change in biosafety regulation, none. That seems crazy.

Many people convinced of lab leak instinctively gravitate toward blame and reparations, which is understandable but not necessarily productive. Blame provokes defensiveness, leading individuals and institutions to obscure evidence and reject accountability. Anesthesiologists and physicians have leaned towards a less-punitive, systems-oriented approach. Instead of assigning blame, they focus in Morbidity and Mortality Conferences on openly analyzing mistakes, sharing knowledge, and redesigning procedures to prevent future harm. This method encourages candid reporting and learning. At its best a systems approach transforms mistakes into opportunities for widespread improvement.

If we can move research up from BSL2 to BSL3 and BSL4 labs we can also do relatively simple things to decrease the risks coming from those labs. For example, let’s not put BSL4 labs in major population centers or in the middle of hurricane-prone regions. We can also, for example, investigate which biosafety procedures are most effective and increase research into safer alternatives, such as surrogate or simulation systems, to reduce reliance on replication-competent pathogens.

The good news is that improving biosafety is highly tractable. The number of labs, researchers, and institutions involved is relatively small, making targeted reforms feasible. Both the United States and China were deeply involved in research at the Wuhan Institute of Virology, suggesting at least the possibility of cooperation—however remote it may seem right now.

Shared risk could be the basis for shared responsibility.

Bayesian addendum *: A higher probability of a lab leak should also reduce the probability of zoonotic origin, but the latter is an already-known risk and COVID doesn’t add much to our prior, while the former is new, and so the net change in our estimate of total risk is positive. In other words, the discovery of a relatively new source of risk increases our estimate of total risk.
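To make the addendum concrete, here is a minimal numerical sketch, not a calibrated estimate. It assumes each pandemic source has an annual rate with a Gamma prior (a standard conjugate prior for a Poisson rate), and that one pandemic is observed and attributed to a lab leak with probability `p_lab`. Every number below (the prior pseudo-events, the prior pseudo-years, the 50-year window) is a hypothetical chosen only for illustration. The one substantive assumption is the one the addendum makes: the zoonotic prior rests on much more evidence than the lab-leak prior.

```python
def posterior_mean(prior_events, prior_years, new_events, new_years):
    """Posterior mean of a Poisson rate under a Gamma(prior_events, prior_years) prior."""
    return (prior_events + new_events) / (prior_years + new_years)


def expected_annual_risk(p_lab, window_years=50.0):
    """Expected annual pandemic rates after one pandemic of uncertain origin.

    p_lab is the probability that the observed pandemic came from a lab.
    Because the posterior mean is linear in the event count, averaging over
    the two possible origins is the same as crediting fractional events.
    All prior values are hypothetical.
    """
    # Well-studied risk: a strong prior (many pseudo-years of evidence).
    zoonotic = posterior_mean(10.0, 1000.0, 1.0 - p_lab, window_years)
    # Newly recognized risk: a weak prior (few pseudo-years of evidence).
    lab = posterior_mean(0.1, 100.0, p_lab, window_years)
    return zoonotic, lab, zoonotic + lab


if __name__ == "__main__":
    for p_lab in (0.0, 0.25, 0.5, 0.75):
        zoonotic, lab, total = expected_annual_risk(p_lab)
        print(f"p_lab={p_lab:.2f}  zoonotic={zoonotic:.5f}  lab={lab:.5f}  total={total:.5f}")
```

Under these assumptions, raising `p_lab` lowers the zoonotic estimate only slightly but raises the lab estimate substantially, so the total rises. The direction of the result depends on the lab-leak prior being the weaker of the two.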

Caleb Watney on risk and science funding

Right now, DOGE is treating efficiency as a simple cost-cutting exercise. But science isn’t a procurement process; it’s an investment portfolio. If a venture capital firm measured efficiency purely by how little money it spent, rather than by the returns it generated, it wouldn’t last long. We invest in scientific research because we want returns — in knowledge, in lifesaving drugs, in technological capability. Generating those returns sometimes requires spending money on things that don’t fit neatly into a single grant proposal.

While it’s true that indirect costs serve an important function, they can also create perverse incentives: When the government promises to cover expenses, expenses tend to go up. But instead of slashing funding indiscriminately, we should be thinking about how to get the most out of every dollar we invest in science.

That means streamlining research regulations. Universities are drowning in bureaucracy. Since 1990, there have been 270 new rules that complicate how we conduct research. Institutional Review Boards, intended to protect people from being unethically experimented on in studies, now regularly review low-risk social science surveys that pose no real ethical concerns. Researchers generate reams of paperwork in legally mandated disclosures of every foreign contract and collaboration, even for countries such as the Netherlands that present no geopolitical risk.

We must also rethink how we select scientific research to fund.

Caleb is co-CEO of the Institute for Progress; here is more from the NYT.

The importance of the chronometer

The chronometer, one of the greatest inventions of the modern era, allowed for the first time for the precise measurement of longitude at sea. We examine the impact of this innovation on navigation and urbanization. Our identification strategy leverages the fact that the navigational benefits provided by the chronometer varied across different sea regions depending on the prevailing local weather conditions. Utilizing high-resolution data on climate, ship routes, and urbanization, we argue that the chronometer significantly altered transoceanic sailing routes. This, in turn, had profound effects on the expansion of the British Empire and the global distribution of cities and populations outside Europe.

That is from a newly published paper by Martina Miotto and Luigi Pascali. Via the excellent Kevin Lewis.

What Did We Learn From Torturing Babies?

As late as the 1980s it was widely believed that babies do not feel pain. You might think that this was an absurd thing to believe given that babies cry and exhibit all the features of pain and pain avoidance. Yet, for much of the 19th and 20th centuries, the straightforward sensory evidence was dismissed as “pre-scientific” by the medical and scientific establishment. Babies were thought to be lower-evolved beings whose brains were not yet developed enough to feel pain, at least not in the way that older children and adults feel pain. Crying and pain avoidance were dismissed as simply reflexive. Indeed, babies were thought to be more like animals than reasoning beings and Descartes had told us that an animal’s cries were of no more import than the grinding of gears in a mechanical automaton. There was very little evidence for this theory beyond some gesturing towards myelin sheathing. But anyone who doubted the theory was told that there was “no evidence” that babies feel pain (the conflation of no evidence with evidence of no effect).

Most disturbingly, the theory that babies don’t feel pain wasn’t just an error of science or philosophy—it shaped medical practice. It was routine for babies undergoing medical procedures to be medically paralyzed but not anesthetized. In one now infamous 1985 case an open heart operation was performed on a baby without any anesthesia (n.b. the link is hard reading). Parents were shocked when they discovered that this was standard practice. Publicity from the case and a key review paper in 1987 led the American Academy of Pediatrics to declare it unethical to operate on newborns without anesthesia.

In short, we tortured babies under the theory that they were not conscious of pain. What can we learn from this? One lesson is humility about consciousness. Consciousness and the capacity to suffer can exist in forms once assumed to be insensate. When assessing the consciousness of a newborn, an animal, or an intelligent machine, we should weigh observable and circumstantial evidence and not just abstract theory. If we must err, let us err on the side of compassion.

Claims that X cannot feel or think because Y should be met with skepticism—especially when X is screaming and telling you different. Theory may convince you that animals or AIs are not conscious but do you want to torture more babies? Be humble.

We should be especially humble when the beings in question are very different from ourselves. If we can be wrong about animals, if we can be wrong about other people, if we can be wrong about our own babies then we can be very wrong about AIs. The burden of proof should not fall on the suffering being to prove its pain; rather, the onus is on us to justify why we would ever withhold compassion.

Hat tip: Jim Ward for discussion.