Category: Medicine

Physician Incomes and the Extreme Shortage of High IQ Workers

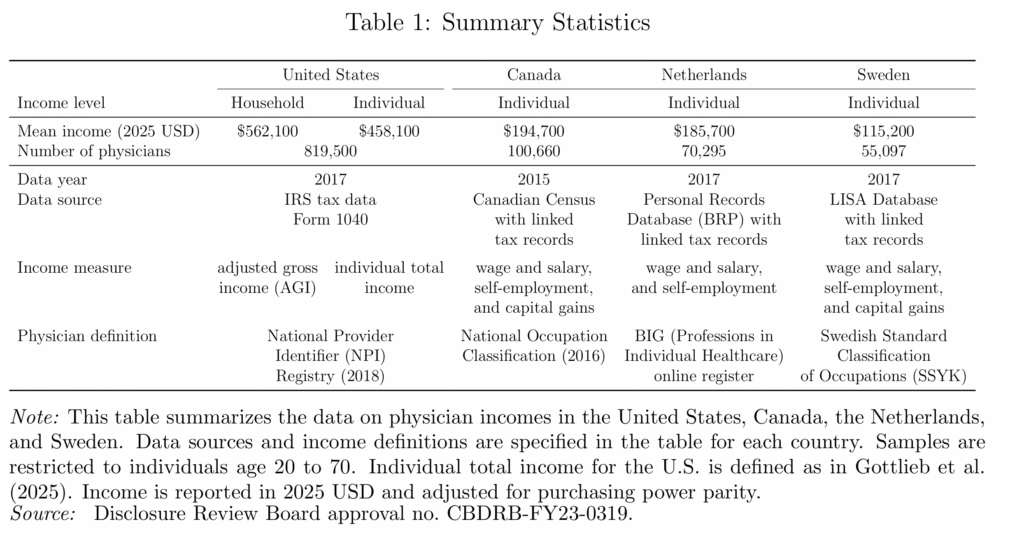

Physician incomes are extraordinarily high in the United States. A new NBER paper finds that U.S. physicians earn roughly two to four times as much as their counterparts in Canada, the Netherlands, and Sweden.

Why? Is it some feature particular to the US health care sector? Probably not. The same paper finds that physicians in the US have about the same relative income ranking as in Canada, the Netherlands, and Sweden. In other words, lots of high-skill workers in the US earn high incomes and physicians don’t look unusual relative to these other high-skill groups.

That is exactly what one would expect in an economy with an extreme shortage of high-IQ, high-skill workers. The US is a uniquely productive economy for high-skill workers, which is why U.S. demand for foreign workers and foreign demand to immigrate are so strong, especially at the high end. By one estimate, “immigrants account for 32 percent of aggregate U.S. innovation.”

Immigration of high-skill workers through programs such as H-1B and EB-1, EB-2, and EB-3, together with stronger U.S. education, is one way to reduce the shortage of high-skill workers. The alternative is simpler: make the economy less dynamic and less rewarding for talent. Then wages would fall and fewer ambitious people would bother coming. A solution, but only if your preferred cure for scarcity is decline.

The rise of China as a global innovator in pharma (incentives matter)

This paper examines China’s transition from pharmaceutical “free rider” to global innovator over the last decade. In 2010, China accounted for less than 8% of global clinical trials; by 2020, it had surpassed the US in annual registered clinical trial volume. To study this transformation, we compile a comprehensive, synchronized database spanning the pharmaceutical drug development supply chain, covering scientific publications, clinical trials, drug development milestones for China, the U.S., and Europe, alongside drug sales and government policies over the same period. We provide strong evidence that China’s rise was primarily driven by the National Reimbursement Drug List (NRDL) reform, which dramatically expanded the effective market size for innovative drugs. We document a sharp rise in both the quantity (86% increase) and novelty of drug trials post reform, with growth concentrated in reform-exposed disease categories, first- or best-in-class drugs, and among domestic firms. A decomposition exercise reveals that the NRDL reform accounts for 43% of the growth in oncology trial activity, nearly doubling the combined contribution of upstream knowledge accumulation and talent flows (24%), while other government policies play a minor role. Finally, dynamic gains from induced innovation exceed the reform’s static gains in consumer access to innovative drugs by threefold, underscoring the importance of accounting for the reform’s long-run effects on innovation incentives in addition to near-term improvements in drug affordability.

That is from a new NBER working paper by

International Comparison of Physician Incomes

We compare physician incomes using tax data from the United States, Canada, Sweden, and the Netherlands. Physicians are concentrated in the top percentiles of the income distribution in all four countries, especially in the United States and certain specialties. Physician incomes are highest in the United States, and a decomposition shows that this mainly reflects differences in overall income distributions, rather than physicians’ locations in those distributions. This suggests that broader labor market differences, and thus physicians’ outside options, drive absolute incomes. Shifting US physicians’ incomes to match relative positions in other countries’ distributions would only marginally reduce healthcare spending.

By Aidan Buehler et al., from a new NBER working paper.
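The decomposition idea can be illustrated with a toy calculation: hold the US income distribution fixed and ask what a US physician would earn at the percentile rank physicians occupy elsewhere. This is a simplified sketch, not the paper's method. The income distribution is synthetic (lognormal draws), the two percentile ranks are invented placeholders, and nearest-rank percentiles stand in for whatever estimator the authors use.

```python
import random

def percentile_value(sorted_incomes, pct):
    """Income at a given percentile (0-100), using a nearest-rank rule."""
    n = len(sorted_incomes)
    rank = max(1, min(n, round(pct / 100 * n)))
    return sorted_incomes[rank - 1]

# Hypothetical synthetic US income distribution; NOT real tax data.
rng = random.Random(42)
us_incomes = sorted(rng.lognormvariate(11.0, 0.9) for _ in range(100_000))

# Hypothetical percentile ranks for physicians; placeholders, not figures from the paper.
us_physician_pct = 98.0
other_country_physician_pct = 96.0

# Same (US) distribution, two different ranks: isolates the "location" channel.
actual = percentile_value(us_incomes, us_physician_pct)
counterfactual = percentile_value(us_incomes, other_country_physician_pct)
print(f"US physician income at own rank:          {actual:,.0f}")
print(f"Same distribution, other country's rank:  {counterfactual:,.0f}")
print(f"Implied reduction from the rank shift:    {1 - counterfactual / actual:.1%}")
```

The design choice is to change only the rank while keeping the distribution fixed; the other half of such a decomposition would keep the rank fixed and swap in another country's distribution.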

Tracing the Genetic Footprints of the UK National Health Service

The establishment of the UK National Health Service (NHS) in July 1948 was one of the most consequential health policy interventions of the twentieth century, providing universal and free access to medical care and substantially expanding maternal and infant health services. In this paper, we estimate the causal effect of the NHS introduction on early-life mortality and we test whether survival is selective. We adopt a regression discontinuity design under local randomization, comparing individuals born just before and just after July 1948. Leveraging newly digitized weekly death records, we document a significant decline in stillbirths and infant mortality following the introduction of the NHS, the latter driven primarily by reductions in deaths from congenital conditions and diarrhea. We then use polygenic indexes (PGIs), fixed at conception, to track changes in population composition, showing that cohorts born at or after the NHS introduction exhibit higher PGIs associated with contextually-adverse traits (e.g., depression, COPD, and preterm birth) and lower PGIs associated with contextually-valued traits (e.g., educational attainment, self-rated health, and pregnancy length), with effect sizes as large as 7.5% of a standard deviation. These results based on the UK Biobank data are robust to family-based designs and replicate in the English Longitudinal Study of Ageing and the UK Household Longitudinal Study. Effects are strongest in socioeconomically disadvantaged areas and among males. This novel evidence on the existence and magnitude of selective survival highlights how large-scale public policies can leave a persistent imprint on population composition and generate long-term survival biases.

Here is the link, via S.
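A regression discontinuity "under local randomization" treats births in a narrow window around the cutoff as if they were randomly assigned to the before and after groups. A minimal sketch of that idea is a difference in means inside the window with a permutation test. The function name, the window, and the weekly numbers in the usage example are all hypothetical; the paper's actual estimator and inference may differ.

```python
import random

def mean(xs):
    return sum(xs) / len(xs)

def local_randomization_test(before, after, n_perm=10_000, seed=0):
    """Difference in means (after - before) within a window around the cutoff,
    with a two-sided permutation p-value. Under local randomization the
    before/after labels are treated as exchangeable inside the window."""
    observed = mean(after) - mean(before)
    pooled = list(before) + list(after)
    n_after = len(after)
    rng = random.Random(seed)
    extreme = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)  # reassign labels at random
        diff = mean(pooled[:n_after]) - mean(pooled[n_after:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction so the p-value is never exactly zero.
    p_value = (extreme + 1) / (n_perm + 1)
    return observed, p_value

# Hypothetical weekly infant-mortality rates just before and just after a cutoff.
before = [31.0, 29.5, 30.2, 32.1, 30.8, 29.9]
after = [28.4, 27.9, 29.1, 28.0, 27.5, 28.8]
diff, p = local_randomization_test(before, after)
print(f"difference: {diff:.2f}, permutation p-value: {p:.4f}")
```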

If you have the right to die, you should have the right to try!

Ruxandra Teslo asks a good question:

I have a curiosity: why is it the case that it is easier to get MAID in Canada than it is to access experimental treatments which carry a higher risk? In the past, I used to think ppl do not like “deaths caused by the medical system”, but for MAID the prob of death is 100%…

The Canadians may be somewhat inconsistent on this point. Unfortunately, the U.S. Supreme Court has been consistent: it has rejected medical self-defense arguments for physician-assisted suicide and let stand an appeals court ruling that patients do not have a right to access drugs that have not yet been permitted for sale by the FDA (FYI, I was part of an amicus curiae brief in this case).

Hat tip for the post title to Jason Crawford.

AI Won’t Automatically Accelerate Clinical Trials

Although I’m optimistic that AI will design better drug candidates, this alone cannot ensure “therapeutic abundance,” for a few reasons. First, the history of drug development shows that even when strong preclinical models exist for a condition, like osteoporosis, the high costs needed to move a drug through trials deter investment, especially for chronic diseases requiring large cohorts. Second, there is a feedback problem between drug development and clinical trials: for AI to generate high-quality drug candidates, it must first be trained on rich human data, especially from early, small-n studies.

…Recruiting 1,000 patients across 10 sites takes time; understanding and satisfying unclear regulatory requirements is onerous and often frustrating; and shipping temperature-sensitive vials to research hospitals across multiple states takes both time and money.

…For many diseases, however, the relevant endpoints take a very long time to observe. This is especially true for chronic conditions, which develop and progress over years or decades. The outcomes that matter most — such as disability, organ failure, or death — take a long time to measure in clinical trials. Aging represents the most extreme case. Demonstrating an effect on mortality or durable healthspan would require following large numbers of patients for decades. The resulting trial sizes and durations are enormous, making studies extraordinarily expensive. This scale has been a major deterrent to investment in therapies that target aging directly.
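To see why hard endpoints such as death drive trial size, a textbook sample-size formula for comparing two event proportions helps. This is a rough sketch: the event rates are hypothetical, and real trials with long-horizon endpoints usually use time-to-event (survival) designs rather than a simple two-proportion z-test.

```python
import math
from statistics import NormalDist

def n_per_arm(p_control, p_treated, alpha=0.05, power=0.80):
    """Approximate patients per arm to detect a difference between two event
    proportions with a two-sided z-test (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treated * (1 - p_treated)
    effect = p_control - p_treated
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)

# Hypothetical event rates over the follow-up window (illustrative only).
print(n_per_arm(0.10, 0.08))   # common event, 20% relative reduction
print(n_per_arm(0.02, 0.018))  # rare event, 10% relative reduction
```

Because the required sample scales with one over the squared absolute difference, rarer events and smaller effects inflate trial size very quickly, which is the scale problem the passage describes.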

The Cassidy Report on the FDA

Senator Bill Cassidy (R-La.) released a new report on how to modernize the FDA. It has some good material.

… FDA’s process for reviewing new products can be an unpredictable “black box.” FDA teams can differ greatly in the extent to which they require testing or impose standards that are not calibrated to the relevant risks. The perceived disconnect between the forward-leaning rhetoric and thought leadership of senior FDA officials and cautious reviewer practice creates further unpredictability. This uncertainty dampens investment and increases the time it takes for patients to receive new therapies.

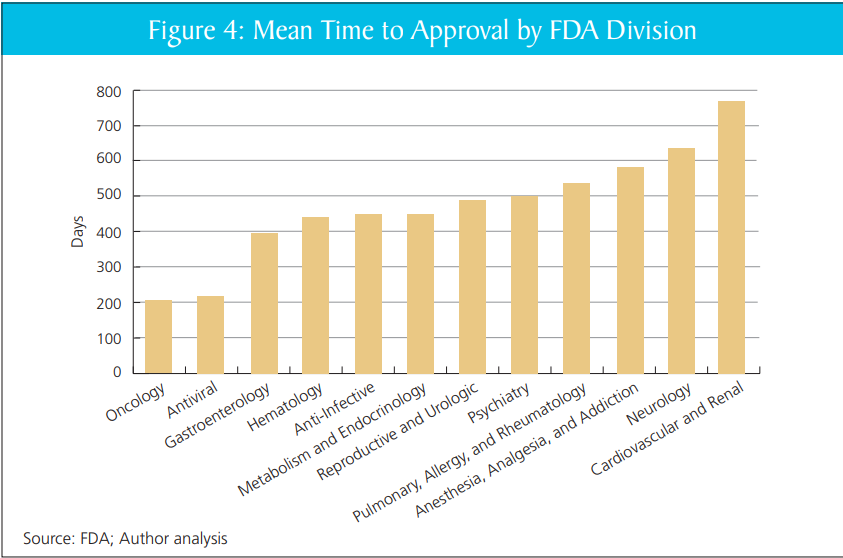

Companies report that they face a “reviewer lottery,” where critical questions hinge on the approach of a small number of individuals at FDA. Some FDA review teams are creative and forward-leaning, helping developers design programs and overcome obstacles to get needed products to patients, without cutting corners. FDA’s Oncology Center of Excellence (OCE), for example, is repeatedly identified as a model for providing predictable yet flexible options for bringing new drugs to cancer patients. OCE is now a dialogue-based regulatory paradigm that has facilitated efforts by academia, industry, the National Institutes of Health (NIH), and others to develop new cancer therapies and launch innovative programs and pilots such as Project Orbis and Real-Time Oncology Review.

That’s quite right. In my report with DiMasi and Milne, we show that review times vary markedly within the FDA, across divisions, without clear cause. Put aside the big question of FDA or no FDA: scaling up the practices of the better-performing divisions would likely yield large gains.

Mike Doherty also asked an AI what Alex Tabarrok would think of the Cassidy report. It replied:

Strongest features: It correctly diagnoses FDA as a bottleneck to innovation, emphasizes “least burdensome” evidence, calls for streamlined pathways (505(b)(2)-style for biologics, biosimilar simplification), and worries about U.S. losing early-phase trials to other countries. This is very much in Tabarrok’s wheelhouse: regulation as a hidden tax on innovation and growth.

Weakest features: It rarely quantifies tradeoffs, almost never confronts the “invisible graveyard” of patients who never see therapies because of delay, and treats reforms as marginal tweaks rather than testing fundamentally different regulatory models (e.g., insurance-based approval, private certification, or sunset/experimentation with parallel regimes).

If you imagine this as a draft memo handed to Tabarrok, he’d likely say: “Good directionally; now add 50% more economics, 50% more quantification, and 100% more willingness to experiment with institutional competition.”

Yeah, pretty good.

Addendum: In other FDA news, see also Adam Kroetsch, “Will Bayesian Statistics Transform Trials?”

Addendum 2: The FDA has now agreed to review Moderna’s flu vaccine, which is good, although the reversal itself speaks to the FDA’s unpredictability.

The cocaine problem seems to be getting worse again

Colombian coca cultivation fell dramatically between 2000 and 2015, a period that saw intense U.S.-backed eradication and interdiction efforts. That progress reversed in 2015, when peace talks and legal rulings in Colombia opened enforcement gaps. Coca cultivation has since risen to record levels, coinciding with a sharp rise in cocaine-related overdose deaths in the U.S. We estimate how much of that rise can be causally attributed to Colombia’s new coca boom. Leveraging the unforeseen coca supply shock and cross-county differences in pre-shock cocaine exposure, we find that the surge in supply caused an immediate rise in overdose mortality in the U.S. We estimate that on the order of 1,000–1,500 additional U.S. deaths per year in the late 2010s can be attributed to Colombia’s cocaine boom. The implied annual loss in American statistical life value is about $48,000 per hectare of cultivation in Colombia. If left untamed, the current level of coca cultivation (over 230,000 ha in 2022) may impose on the order of $10 billion per year in costs via overdose fatalities.

That is from a new NBER working paper by Xinming Du, Benjamin Hansen, Shan Zhang, and Eric Zou.
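A quick check shows that two numbers in the abstract are consistent. Multiplying the per-hectare loss by the 2022 cultivation figure gives a bit over $11 billion, in line with "on the order of $10 billion." The sketch assumes the per-hectare figure scales linearly with hectares, which is how the abstract's extrapolation appears to work.

```python
# Figures quoted in the abstract above.
loss_per_hectare_usd = 48_000  # implied annual statistical-life loss per hectare
hectares_2022 = 230_000        # "over 230,000 ha in 2022", so this is a lower bound

# Assumes the per-hectare loss applies linearly to total cultivation.
annual_cost_usd = loss_per_hectare_usd * hectares_2022
print(f"${annual_cost_usd / 1e9:.2f} billion per year")  # $11.04 billion per year
```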

I Regret to Inform You that the FDA is FDAing Again

I had high hopes and low expectations that the FDA under the new administration would be less paternalistic and more open to medical freedom. Instead, what we are getting is paternalism with different preferences. In particular, the FDA now appears to have a bizarre anti-vaccine fixation, especially regarding mRNA vaccines (disappointing but not surprising given the leadership of RFK Jr.).

The latest is that the FDA has issued a Refusal-to-File (RTF) letter to Moderna for its mRNA influenza vaccine, mRNA-1010. An RTF means the FDA has determined that the application is so deficient it doesn’t even warrant a review. RTF letters are not unheard of, but they are rare, and this one is especially striking: Moderna spent hundreds of millions of dollars running Phase 3 trials enrolling over 43,000 participants based on FDA guidance, and is now being told that the (apparently) agreed-upon design was inadequate.

Moderna compared the efficacy of their vaccine to a standard flu vaccine widely used in the United States. The FDA’s stated rationale is that the control arm did not reflect the “best-available standard of care.” In plain English, that appears to mean the comparator should have been one of the ACIP-preferred “enhanced” flu vaccines for adults 65+ (e.g., high-dose/adjuvanted) rather than a standard-dose product.

Out of context, that’s not crazy, but it’s also not necessarily wise. There is nothing wrong with having multiple drugs and vaccines, some of which are less effective on average than others. We want a medical armamentarium: different platforms, different supply chains, different side-effect profiles, and more options when one product isn’t available or isn’t a good fit. The mRNA vaccines, for example, can be updated faster than standard vaccines, so having an mRNA option available may produce superior real-world effectiveness even if it were less efficacious in a head-to-head trial.

In context, this looks like the regulatory rules of the game are being changed retroactively—a textbook example of regulatory uncertainty destroying option value. STAT News reports that Vinay Prasad personally handled the letter and overrode staff who were prepared to proceed with review. Moderna took the unusual step of publicly releasing Prasad’s letter—companies almost never do this, suggesting they’ve calculated the reputational risk of publicly fighting the FDA is lower than the cost of acquiescing.

Moreover, the comparator issue was discussed—and seemingly settled—beforehand. Moderna says the FDA agreed with the trial design in April 2024, and as recently as August 2025 suggested it would file the application and address comparator issues during the review process.

Finally, Moderna also provided immunogenicity and safety data from a separate Phase 3 study in adults 65+ comparing mRNA-1010 against a licensed high-dose flu vaccine, just as FDA had requested—yet the application was still refused.

What is most disturbing is not the specifics of this case but the arbitrariness and capriciousness of the process. The EU, Canada, and Australia have all accepted Moderna’s application for review. We may soon see an mRNA flu vaccine available across the developed world but not in the United States—not because it failed on safety or efficacy, but because FDA political leadership decided, after the fact, that the comparator choice they inherited was now unacceptable.

The irony is staggering. Moderna is an American company. Its mRNA platform was developed at record speed with billions in U.S. taxpayer support through Operation Warp Speed — the signature public health achievement of the first Trump administration. The same government that funded the creation of this technology is now dismantling it. In August, HHS canceled $500 million in BARDA contracts for mRNA vaccine development and terminated a separate $590 million contract with Moderna for an avian flu vaccine. Several states have introduced legislation to ban mRNA vaccines. Insanity.

The consequences are already visible. In January, Moderna’s CEO announced the company will no longer invest in new Phase 3 vaccine trials for infectious diseases: “You cannot make a return on investment if you don’t have access to the U.S. market.” Vaccines for Epstein-Barr virus, herpes, and shingles have been shelved. That’s what regulatory roulette buys you: a shrinking pipeline of medical innovation.

An administration that promised medical freedom is delivering medical nationalism: fewer options, less innovation, and a clear signal to every company considering pharmaceutical investment that the rules can change after the game is played. And this isn’t a one-product story. mRNA is a general-purpose platform with spillovers across infectious disease and vaccines for cancer; if the U.S. turns mRNA into a political third rail, the investment, talent, and manufacturing will migrate elsewhere. America built this capability, and we’re now choosing to export it—along with the health benefits.

Immigration and health for elderly Americans

We measure the impact of increased immigration on mortality among elderly Americans, who rely on the immigrant-intensive health and long-term care sectors. Using a shift-share approach we find a strong impact of immigration on the size of the immigrant care workforce: admitting 1,000 new immigrants would lead to 142 new foreign healthcare workers, without evidence of crowd out of native health care workers. We also find striking effects on mortality: a 25% increase in the steady state flow of immigrants to the US would result in 5,000 fewer deaths nationwide. We identify reduced use of nursing homes as a key mechanism driving this result.

That is from a new NBER working paper by
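The shift-share approach mentioned in the abstract is usually built along these lines: regions that historically attracted immigrants from a given origin are predicted to receive a proportional share of new national inflows from that origin. This is a generic sketch, not the paper's specification. The regions, origins, shares, and inflows are made up, and the function name is hypothetical; in practice the prediction typically serves as an instrument for actual inflows.

```python
def shift_share_inflows(base_shares, national_inflows):
    """Predicted immigrant inflow to each region: the sum over origins of the
    region's base-period share of that origin's immigrants times the current
    national inflow from that origin (a Bartik-style construction)."""
    predicted = {}
    for region, shares in base_shares.items():
        predicted[region] = sum(
            share * national_inflows.get(origin, 0.0)
            for origin, share in shares.items()
        )
    return predicted

# Hypothetical base-period shares: fraction of each origin's earlier
# immigrants who live in each region.
base_shares = {
    "region_A": {"origin_1": 0.50, "origin_2": 0.10},
    "region_B": {"origin_1": 0.20, "origin_2": 0.60},
}
# Hypothetical current national inflows by origin (people).
national_inflows = {"origin_1": 10_000, "origin_2": 5_000}

print(shift_share_inflows(base_shares, national_inflows))
```

The "share" part is fixed in advance, so variation in predicted inflows comes from national "shifts" that a single region does not control, which is what makes the construction useful for identification.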

Trump’s Pharmaceutical Plan

Pharmaceuticals have high fixed costs of R&D and low marginal costs. The first pill costs a billion dollars; the second costs 50 cents. That cost structure makes price discrimination—charging different customers different prices based on willingness to pay—common.

Price discrimination is why poorer countries get lower prices. Not because firms are charitable, but because a high price means poorer countries buy nothing, while any price above marginal cost is still profit. This type of price discrimination is good for poorer countries, good for pharma, and (indirectly) good for the United States: more profits mean more R&D and, over time, more drugs.
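A toy model makes the logic concrete. With linear demand in each market and a 50-cent marginal cost, a firm that can charge separate prices sets a much lower price in the poorer market. That price is still above marginal cost, so serving the poorer market adds profit. The market sizes and choke prices (the highest price anyone will pay) are invented; only the 50-cent marginal cost comes from the text.

```python
MARGINAL_COST = 0.50  # "the second costs 50 cents"

def monopoly_price(choke_price, cost=MARGINAL_COST):
    """Profit-maximizing price for linear demand Q = size * (1 - P / choke_price)."""
    return (choke_price + cost) / 2

def profit(price, size, choke_price, cost=MARGINAL_COST):
    """Operating profit in one market (fixed R&D costs are sunk and ignored)."""
    quantity = max(0.0, size * (1 - price / choke_price))
    return (price - cost) * quantity

# Hypothetical markets: (size, choke price).
markets = {"rich": (10.0, 100.0), "poor": (5.0, 10.0)}

for name, (size, choke) in markets.items():
    p = monopoly_price(choke)
    print(f"{name}: price {p:.2f}, profit {profit(p, size, choke):.2f}")
```

Each market's best price depends only on its own demand and marginal cost, so with separate prices the low price in the poor market does not pull down the price in the rich one.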

The political problem, however, is that Americans look abroad, see lower prices for branded drugs, and conclude that they’re being ripped off. Riding that grievance, Trump has demanded that U.S. prices be no higher than the lowest level paid in other developed countries.

One immediate effect is to help pharma in negotiations abroad: they can now credibly say, “We can’t sell to you at that discount, because you’ll export your price back into the U.S.” But two big issues follow.

First, this won’t lower U.S. prices on current drugs. Firms are already profit-maximizing in the U.S. If they manage to raise prices in France, they don’t then announce, “Great news—now we’ll charge less in America.” The potential upside of the Trump plan isn’t lower prices but higher pharma profits, which strengthens incentives to invest in R&D. If profits rise, we may get more drugs in the long run. But try telling the American voter that higher pharma profits are good.

The second issue is that the plan can backfire.

In our textbook, Modern Principles, Tyler and I discuss almost exactly this scenario: suppose policy effectively forces a single price across countries. Which price do firms choose—the low one abroad or the high one in the U.S.? Since a large share of profits comes from the U.S., they’re likely to choose the high price:

Pfizer CEO Albert Bourla was even more direct, saying it is time for countries such as France to pay more or go without new drugs. If forced to choose between reducing U.S. prices to France’s level or stopping supply to France, Pfizer would choose the latter, Bourla told reporters at a pharma-industry conference.
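The single-price scenario from the textbook can be sketched the same way. If one price must apply everywhere, the firm compares a low price that serves both markets with a high price that serves only the big one. With a large, high-value market and a small, low-value one (both invented, as is the linear demand), the best single price lands near the large market's own monopoly price and the small market buys nothing. The result flips if the low-price market is large enough, which is exactly the empirical question the post raises.

```python
MARGINAL_COST = 0.50  # "the second costs 50 cents"

def market_quantity(price, size, choke_price):
    """Linear demand that falls to zero at the choke price."""
    return max(0.0, size * (1 - price / choke_price))

def uniform_profit(price, markets, cost=MARGINAL_COST):
    """Profit when the same price must be charged in every market."""
    total_q = sum(market_quantity(price, s, c) for s, c in markets.values())
    return (price - cost) * total_q

def best_uniform_price(markets, cost=MARGINAL_COST, step=0.01):
    """Grid search from marginal cost up to the highest choke price."""
    top = max(c for _, c in markets.values())
    n_steps = int((top - cost) / step)
    candidates = [cost + i * step for i in range(n_steps + 1)]
    return max(candidates, key=lambda p: uniform_profit(p, markets, cost))

# Hypothetical markets: (size, choke price).
markets = {"US": (10.0, 100.0), "France": (5.0, 10.0)}
p = best_uniform_price(markets)
print(f"single price: {p:.2f}")
for name, (size, choke) in markets.items():
    print(f"  {name} quantity: {market_quantity(p, size, choke):.3f}")
```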

So the real question is: will other countries pay?

If France tried to force Americans to pay more to subsidize French price controls, U.S. voters would explode. Yet that is essentially what other countries are being told, with the roles reversed: “You must pay more so Americans can pay less.” Other countries are already stingier than the U.S., and they already bear costs for it: new drugs arrive more slowly abroad than here. Some governments may decide, foolishly but understandably, that paying U.S.-level prices is politically impossible. If so, they won’t “harmonize upward.” They’ll follow the European way: ration, delay, and go without.

In that case, nobody wins. Pharma profits fall, R&D declines, U.S. prices don’t magically drop, and patients abroad get fewer new drugs and worse care. Lose-lose-lose.

We don’t know the equilibrium, but lose-lose-lose is entirely plausible. Switzerland, for example, does not seem willing to pay more:

Yet Switzerland has shown little political willingness to pay more—threatening both the availability of medications in the country and its role as a global leader in developing therapies. Drug prices are the primary driver of the increasing cost of mandatory health coverage, and the topic generates heated debate during the annual reappraisal of insurance rates. “The Swiss cannot and must not pay for price reductions in the USA with their health insurance premiums,” says Elisabeth Baume-Schneider, Switzerland’s home affairs minister.

If many countries respond like Switzerland—and Trump’s unpopularity abroad doesn’t help—the sector ends up less profitable and innovation slows. Voters may feel less “ripped off,” but they’ll be buying that feeling with fewer drugs and sicker bodies.

The Effects of Ransomware Attacks on Hospitals and Patients

As cybercriminals increasingly target health care, hospitals face the growing threat of ransomware attacks. Ransomware is a type of malicious software that prevents users from accessing electronic systems and demands a ransom to restore access. We create and link a database of hospital ransomware attacks to Medicare claims data. We quantify the effects of ransomware attacks on hospital operations and patient outcomes. Ransomware attacks decrease hospital volume by 17–24 percent during the initial attack week, with recovery occurring within 3 weeks. Among patients already admitted to the hospital when a ransomware attack begins, in-hospital mortality increases by 34–38 percent.

That is by Hannah Neprash, Claire McGlave, and Sayeh Nikpay, recently published in American Economic Journal: Economic Policy.

What happens when dating goes online?

This paper studies how online dating platforms have affected marital outcomes, assortative matching, and sexually transmitted disease (STD) rates in the United States. We construct county-level measures of online dating usage using data from website-based platforms (2002–2013) and mobile app-based platforms (2017–2023). Leveraging county-level variation and an instrumental variable strategy, we show that in the desktop era, a 1% increase in online dating sessions raises divorce rates by 0.50%, while in the mobile era, a 1% increase in online dating activity lowers marriage and divorce rates by 0.40% and 0.33%, respectively. We also document shifts in assortative matching. Desktop sites reduce sorting along education and employment dimensions, whereas mobile sites reduce sorting by employment but increase sorting by race. Across both eras, we find no evidence that greater online dating usage increases average STD rates. Average effects are negative or statistically insignificant, but are positive for some subpopulations. We develop a search-and-matching model in which technological changes affecting search costs, market size, and market noise can explain our empirical findings.

That is from a new paper by Daniel Ershov, Jessica Fong, and Pinar Yildirim. Via the excellent Kevin Lewis.
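The instrumental-variable logic behind estimates like these can be shown with a single-instrument (Wald) estimator on toy data. Everything here is invented: the instrument, the confounder, and the data. The paper's actual instrument and specification are not described in the abstract. The toy's true effect is set to 0.5 to echo the desktop-era divorce estimate; with logged variables, the slope would read as an elasticity.

```python
def mean(xs):
    return sum(xs) / len(xs)

def cov(a, b):
    """Sample covariance."""
    ma, mb = mean(a), mean(b)
    return sum((x - ma) * (y - mb) for x, y in zip(a, b)) / (len(a) - 1)

def ols_slope(x, y):
    return cov(x, y) / cov(x, x)

def iv_slope(z, x, y):
    """Single-instrument IV (Wald) estimator: cov(z, y) / cov(z, x)."""
    return cov(z, y) / cov(z, x)

# Hypothetical data. u is an unobserved confounder that raises both dating
# activity (x) and the outcome (y); the instrument z shifts x but is
# uncorrelated with u.
z = [1, -1, 1, -1, 1, -1, 1, -1]
u = [1, 1, -1, -1, 1, 1, -1, -1]
x = [zi + ui for zi, ui in zip(z, u)]
y = [0.5 * xi + ui for xi, ui in zip(x, u)]  # true effect of x on y is 0.5

print(f"OLS: {ols_slope(x, y):.2f}")    # biased upward by the confounder
print(f"IV:  {iv_slope(z, x, y):.2f}")  # recovers the true 0.5
```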

AI Physicians At Last

In 2004 (!) I wrote:

Many people complain that medicine is too impersonal. I think it is not impersonal enough. I have nothing against my physician (a local magazine says he is one of the best in the area) but I would prefer to be diagnosed by a computer. A typical physician spends most of the day playing twenty questions. Where does it hurt? Do you have a cough? How high is the patient’s blood pressure? But an expert system can play twenty questions better than most people. An expert system can use the best knowledge in the field, it can stay current with the journals, and it never forgets.
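The "twenty questions" idea in the 2004 passage can be sketched as a tiny expert system that always asks about the finding that splits the remaining possibilities most evenly. The conditions and findings are abstract placeholders, not medical knowledge, and the function names are hypothetical. Real diagnostic systems are far more involved: probabilistic, with priors, test costs, and uncertain answers.

```python
# Hypothetical knowledge base: abstract conditions and the findings each one shows.
KNOWLEDGE_BASE = {
    "condition_A": {"fever", "cough"},
    "condition_B": {"fever", "rash"},
    "condition_C": {"cough", "wheeze"},
    "condition_D": {"rash"},
}

def best_question(candidates, asked):
    """Pick the unasked finding that splits the remaining candidates most evenly."""
    findings = set().union(*(KNOWLEDGE_BASE[c] for c in candidates)) - asked

    def imbalance(finding):
        yes = sum(finding in KNOWLEDGE_BASE[c] for c in candidates)
        return abs(2 * yes - len(candidates))

    # sorted() makes tie-breaking deterministic.
    return min(sorted(findings), key=imbalance) if findings else None

def diagnose(answer):
    """Play twenty questions. answer(finding) -> bool. Returns remaining candidates."""
    candidates = set(KNOWLEDGE_BASE)
    asked = set()
    while len(candidates) > 1:
        question = best_question(candidates, asked)
        if question is None:  # nothing left that could distinguish them
            break
        asked.add(question)
        has_it = answer(question)
        candidates = {c for c in candidates
                      if (question in KNOWLEDGE_BASE[c]) == has_it}
    return candidates

# A simulated patient whose findings match condition_C.
patient = KNOWLEDGE_BASE["condition_C"]
print(diagnose(lambda finding: finding in patient))  # {'condition_C'}
```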

It took longer than it should have, but we are finally here. Today, most people already use AI to help diagnose and manage medical conditions, and now:

Utah is letting artificial intelligence — not a doctor — renew certain medical prescriptions. No human involved.

It’s a pilot program for routine renewals, but it’s a welcome start. The AMA, of course, is not pleased.

In a statement, Dr. John Whyte, CEO and executive vice president at the American Medical Association, said: “While AI has limitless opportunity to transform medicine for the better, without physician input it also poses serious risks to patients and physicians alike.”

One concern is misuse or abuse, including the possibility that people struggling with addiction could try to game automated systems to obtain drugs inappropriately. Another concern is missing subtle clinical red flags or drug interactions that a doctor would catch.

It’s amazing that anyone can say these things with a straight face. As far as I know, AI has never run a pill mill, unlike human physicians. And the AI is the one “missing subtle clinical red flags or drug interactions that a doctor would catch”? Is this a joke?

Direct and Indirect Effects of Vaccines: Evidence from COVID-19

Sorry people, but the verdict on this one continues to come in:

We estimate direct and indirect vaccine effectiveness and assess how far the infection-reducing externality extends from the vaccinated, a key input to policy decisions. Our empirical strategy uses nearly universal microdata from a single state and relies on the six-month delay between 12- and 11-year-old COVID vaccine eligibility. Vaccination reduces cases by 80 percent, the direct effect. This protection spills over to close contacts, producing a household-level indirect effect about three-fourths as large as the direct effect. However, indirect effects do not extend to schoolmates. Our results highlight vaccine reach as important to consider when designing policy for infectious disease.

That is from American Economic Journal: Applied Economics, by Seth Freedman, Daniel W. Sacks, Kosali Simon, and Coady Wing. So many different methods and papers are pointing in the same direction…
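The distinction between direct and indirect effects can be made concrete with the usual proportional-reduction definition of effectiveness. The attack rates below are invented so that the output echoes the abstract's figures (an 80 percent direct effect and a household indirect effect about three-fourths as large). The paper's estimates come from a quasi-experimental design around the age-12 eligibility cutoff, not from this simple ratio.

```python
def effectiveness(risk_exposed, risk_reference):
    """Proportional reduction in risk: 1 - risk_exposed / risk_reference."""
    return 1 - risk_exposed / risk_reference

# Hypothetical attack rates (share of people infected), for illustration only.
risk_vaccinated = 0.02
risk_unvaccinated = 0.10
# Unvaccinated household members of vaccinated vs. unvaccinated children.
risk_contact_of_vaccinated = 0.04
risk_contact_of_unvaccinated = 0.10

direct = effectiveness(risk_vaccinated, risk_unvaccinated)
indirect = effectiveness(risk_contact_of_vaccinated, risk_contact_of_unvaccinated)
print(f"direct {direct:.0%}, indirect {indirect:.0%}, ratio {indirect / direct:.2f}")
```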