Herbert Gintis has passed away, RIP

ChatGPT and the revenge of history

I have been posing it many questions about Jonathan Swift, Adam Smith, and the Bible. Chat does very well in all those areas, and rarely hallucinates. Is it because those are settled, well-established texts, with none of the drama “still in action”?

I suspect Chat is a boon for the historian and the historian of ideas. You can ask Chat about obscure Swift pamphlets and it knows more about them than Google does, or Wikipedia does, by a long mile. Presumably it “reads” them for you?

When I ask about current economists or public intellectuals, however, more errors creep in. Hallucinations become common rather than rare. The most common hallucination I find is that Chat invents co-authorships and conference co-sponsorships like crazy. If you ask it about two living people, and whether they have worked together, the fantasy life version will be rather active, maybe fifty percent of the time?

Presumably that bug will be fixed, but still it seems that for the time being Chat has shifted some real intellectual heft back in antiquarian directions. Perhaps it is harder for statistical estimation to predict words about events that are still going on?

Here are some tips for using ChatGPT.

Of course Chat is already a part of my regular research and learning routine. Woe be unto those who cannot or do not use it effectively! I feel sorry for them, get with the program, people…

David Wallace-Wells on the pandemic

Rather than quote the parts where he says nice things about Alex and me, how about a wee excerpt on the GBD crowd:

Dr. Bhattacharya, for instance, proclaimed in The Wall Street Journal in March 2020 that Covid-19 was only one-tenth as deadly as the flu. In January 2021 he wrote an opinion essay for the Indian publication The Print suggesting that the majority of the country had acquired natural immunity from infection already and warning that a mass vaccination program would do more harm than good for people already infected. Shortly thereafter, the country’s brutal Delta wave killed perhaps several million Indians. In May 2020, Dr. Gupta suggested that the virus might kill around five in 10,000 people it infected, when the true figure in a naïve population was about one in 100 or 200, and that Covid was “on its way out” in Britain. At that point, it had killed about 45,000 Britons, and it would go on to kill about 170,000 more. The following year, Dr. Bhattacharya and Dr. Kulldorff together made the same point about the disease in the United States — that the pandemic was “on its way out” — on a day when the American death toll was approaching 600,000. Today it is 1.1 million and growing.

It has fallen down the memory hole a bit just how, um… “off” these people were, and that is the polite word. That said, I don’t think they should have been banned from any social media platforms. Here is the full NYT piece, excellent throughout, and mostly about other topics. For the pointer I thank Alex T.

Thursday assorted links

Shruti Rajagopalan and Janhavi Nilekani podcast

In this episode, Shruti speaks with [the excellent] Janhavi Nilekani about India’s high rate of C-sections compared with vaginal births, problems with maternal healthcare, the present and future of Indian midwifery and much more. Nilekani is the founder and chair of the Aastrika Foundation, which seeks to promote a future in which every woman is treated with respect and dignity during childbirth, and the right treatment is provided at the right time. She is a development economist by training and now works in the field of maternal health. She obtained her Ph.D. in public policy from Harvard and holds a 2010 B.A., cum laude, in economics and international studies from Yale.

Here is the link.

Is America suffering a ‘social recession’?

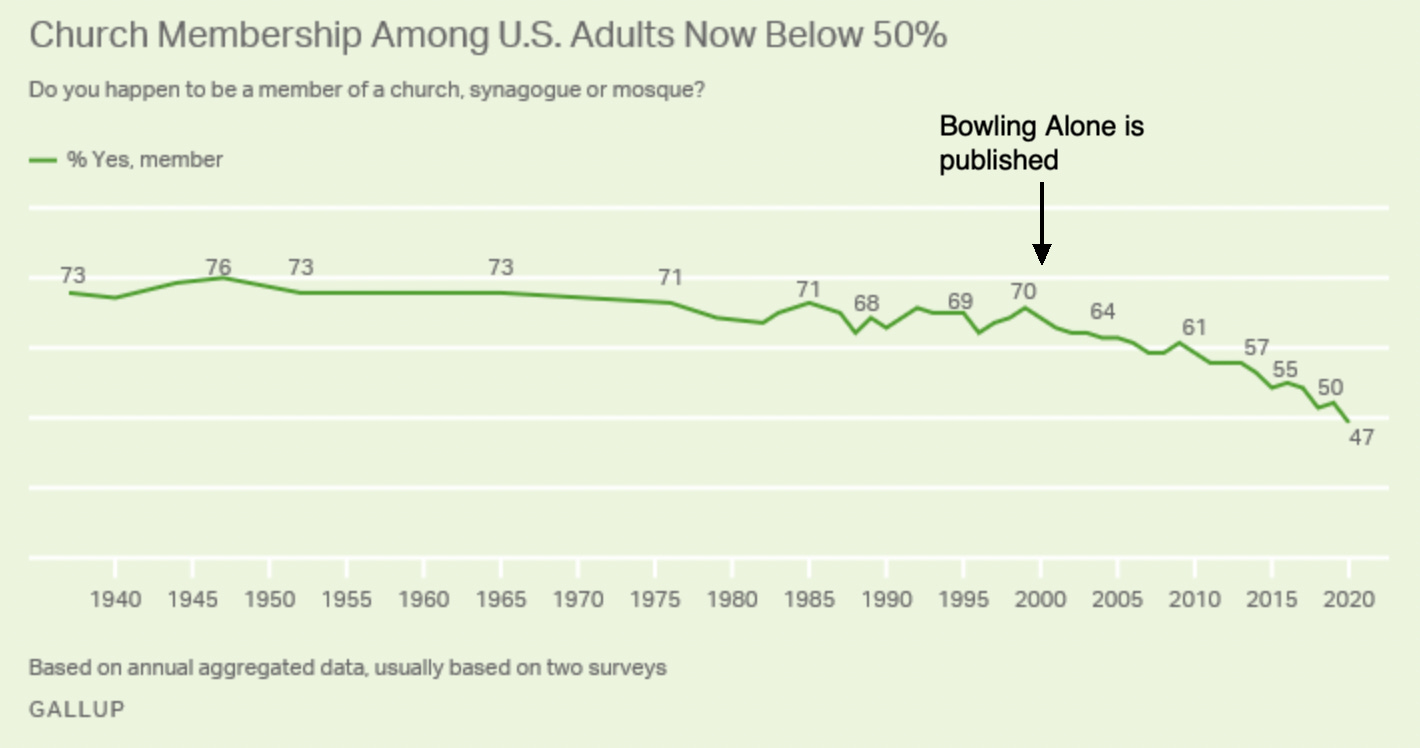

Anton Cebalo documents a decline in sex, friends and trust. Here are a few points I found of interest. I had come to think about church membership in America as pretty much fixed around 70%. That was true for decades but not recently:

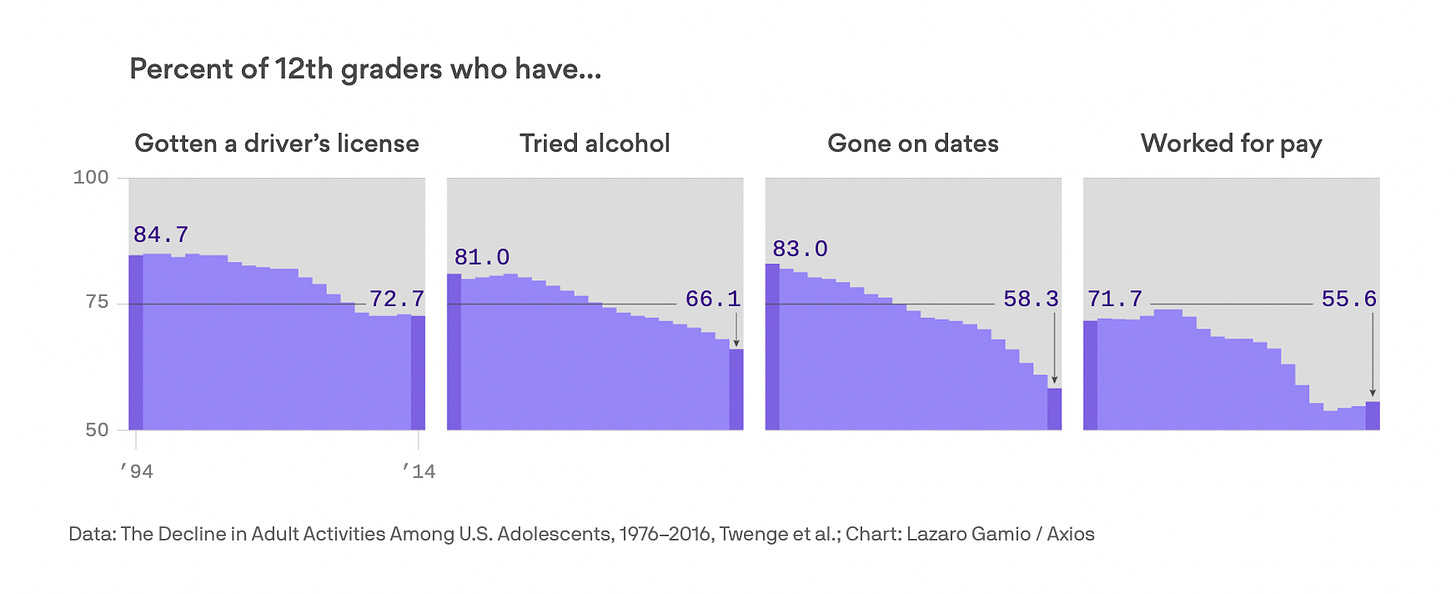

Here’s another trend, the extension of adolescence. More and more we are treating young adults like children and in many respects they are more like children of earlier years.

There have been many psychological profiles of “late adulthood,” common among those born from the 1990s onward. Many of the milestones — getting a driver’s license, moving out, dating, starting work, and so on — have been delayed for many young adults.

The trend became obvious starting in the 2010s. In 2019, it was compiled in a comprehensive study titled The Decline in Adult Activities Among U.S. Adolescents, 1976-2016.

See Cebalo for more.

Are scientific breakthroughs less fundamental?

From Max Kozlov, do note the data do not cover the very latest events:

The number of science and technology research papers published has skyrocketed over the past few decades — but the ‘disruptiveness’ of those papers has dropped, according to an analysis of how radically papers depart from the previous literature.

Data from millions of manuscripts show that, compared with the mid-twentieth century, research done in the 2000s was much more likely to incrementally push science forward than to veer off in a new direction and render previous work obsolete. Analysis of patents from 1976 to 2010 showed the same trend.

“The data suggest something is changing,” says Russell Funk, a sociologist at the University of Minnesota in Minneapolis and a co-author of the analysis, which was published on 4 January in Nature. “You don’t have quite the same intensity of breakthrough discoveries you once had.”

The authors reasoned that if a study was highly disruptive, subsequent research would be less likely to cite the study’s references, and instead cite the study itself. Using the citation data from 45 million manuscripts and 3.9 million patents, the researchers calculated a measure of disruptiveness, called the ‘CD index’, in which values ranged from –1 for the least disruptive work to 1 for the most disruptive.

The average CD index declined by more than 90% between 1945 and 2010 for research manuscripts (see ‘Disruptive science dwindles’), and by more than 78% from 1980 to 2010 for patents. Disruptiveness declined in all of the analysed research fields and patent types, even when factoring in potential differences in factors such as citation practices…

The authors also analysed the most common verbs used in manuscripts and found that whereas research in the 1950s was more likely to use words evoking creation or discovery such as, ‘produce’ or ‘determine’, that done in the 2010s was more likely to refer to incremental progress, using terms such as ‘improve’ or ‘enhance’.

Here is the piece, and here is the original research by Michael Park, Erin Leahey, and Russell J. Funk.
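To make the citation logic in the excerpt concrete, here is a minimal Python sketch of how a CD-style index could be computed for a single paper. The scoring rule is an assumption about the formulation, not something spelled out in the excerpt: a later paper that cites only the focal paper counts +1, one that cites both the focal paper and its references counts -1, and one that cites only the references counts 0. The function name and the toy paper IDs are hypothetical.

```python
from typing import Dict, Set


def cd_index(focal: str, focal_refs: Set[str],
             later_citations: Dict[str, Set[str]]) -> float:
    """Average disruption score over later papers that cite the focal
    paper and/or any of the focal paper's references (assumed scoring)."""
    score = 0
    n = 0
    for cited in later_citations.values():
        cites_focal = focal in cited
        cites_refs = bool(cited & focal_refs)
        if not (cites_focal or cites_refs):
            continue  # unrelated later paper, ignored
        n += 1
        if cites_focal and not cites_refs:
            score += 1   # treats the focal paper as the new starting point
        elif cites_focal and cites_refs:
            score -= 1   # treats the focal paper as one more step in an old line
        # citing only the references contributes 0
    if n == 0:
        raise ValueError("no later paper cites the focal paper or its references")
    return score / n


# Toy example: focal paper "F" cites "R1" and "R2".
later = {
    "A": {"F"},          # cites only the focal paper
    "B": {"F", "R1"},    # cites the focal paper and one of its references
    "C": {"R2"},         # cites only a reference
    "D": {"X"},          # unrelated
}
print(cd_index("F", {"R1", "R2"}, later))  # 0.0
```

Under this rule the index is 1 when every later paper cites only the focal work and -1 when every later paper cites it alongside its references, which matches the range given in the excerpt.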

What is going wrong with American higher education?

Yes, yes all the Woke and PC stuff, but let us also look into the matter more deeply, as in my latest Bloomberg column. There is a serious talent drain due to excess bureaucratization, among other issues:

Another problem is the ongoing mental health crisis among America’s youth. This is not the fault of universities, to be clear, but a lot of unhappy students make for a less enjoyable college experience. The warm glow that so many baby boomers associate with their college years may not be reproduced by the current generation. They might instead look back on a quite troubled time, and in turn have less school loyalty.

I have also observed (as have many of my colleagues) that students seem to have more absences, excuses and missed assignments. No matter what the causes of those developments, they make it harder to run an effective university.

In fact, many of the smartest young people I know are deciding against a career in academia, even if that was their initial intent. They see too much bureaucracy and not enough time for the academic work itself. Students in the biosciences, at least the ones I talk to, seem to be an exception, perhaps because the opportunities to change the world are so obvious.

In my own field, economics, the prospect of having to do a “pre-doc” and then six years for a Ph.D. is driving away creative talent. On the research side, there is an obsession with finding the correct empirical techniques for causal inference. Initially a merited and beneficial development, this approach is becoming an intellectual straitjacket. There are too many papers focusing on a suitably narrow topic to make the causal inference defensible, rather than trying to answer broader, more useful but also more difficult questions.

…As committee obligations, paperwork and referee reports accumulate, the idea that academia allows you to be in charge of your own time seems ever more distant. Bureaucratization is eating away at the free time of professors. Much of the glamour of the job is gone, and my fear is that the system increasingly attracts conformists.

And don’t forget this disaggregation:

There are also big differences within universities. I have been a professor for more than three decades and speak often at other campuses. My impression is that presidents, provosts and deans are relatively sane, if only because they face real trade-offs as they draw up budgets, raise money and make payroll. University staff or student groups, on the other hand, often have no sense of the underlying constraints, and so advocate for ideas and practices that lead to some ridiculous stories. The actual decision makers are frequently not strong enough to push back, so they accept the demands as a way to survive or even advance.

Recommended.

*Rise*

Applications are now open for Rise! Rise is a global initiative that finds brilliant people who need opportunity and supports them for life as they work to serve others and build a better world. The program starts at ages 15–17 and offers Global Winners access to benefits including need-based scholarships, a fully-funded residential summit, mentorship, career development, potential funding, and more. Applications are open until January 25, 2023.

Wednesday assorted links

1. ChatGPT and Bing will be integrated. And Large Language Models as corporate lobbyists.

2. “German is a very ‘spank me’ language,” she tells a Melbourne woman struggling to pronounce a porcelain manufacturer’s name. “Königliche,” the woman coughs. Standing beside a handwritten chart of tableware terminology, Ms. Ho trills with delight: “You got it! See? You think ‘spank me,’ immediately you got it.” (NYT link). What in the heck was that article about? Cracking cultural codes perhaps?

3. The “stay at home girlfriend” trend.

4. How much are Russian cyberattacks diminishing?

5. The published meta-study on lead and crime updates the results somewhat, in line with my expectations.

Privatization Improves Airports

Laurent Belsie summarizes a new NBER paper, All Clear for Takeoff: Evidence from Airports on the Effects of Infrastructure Privatization:

When private equity funds buy airports from governments, the number of airlines and routes served increases, operating income rises, and the customer experience improves.

…As of 2020, nearly 20 percent of the world’s airports had been privatized. Private equity (PE), usually through dedicated infrastructure funds, is playing an increasing role in privatization, purchasing 102 airports out of a total of 437 that have ever been privatized.

…A key metric of airport efficiency is passengers per flight. The more customers an airport can serve with existing runways and gates, the more services it can deliver and the more earnings it can generate. When PE funds buy government-owned airports, the number of passengers per flight rises an average 20 percent. There’s no such increase when non-PE private firms acquire an airport. Overall passenger traffic rises under both types of private ownership, but the rise at PE-owned airports, 84 percent, is four times greater than that at non-PE-owned private airports. Freight volumes and the number of flights, other measures of efficiency, show a similar pattern. Evidence from satellite image data indicates that PE owners increase terminal size and the number of gates. This capacity expansion helps enable the volume increases and points to the airport having been financially constrained under previous ownership.

…PE firms tend to attract new low-cost carriers to their airports, which in turn may lead to greater competition and offer consumers better service and lower prices. With regard to routes, PE acquirers increase the number of new routes, especially international routes, more than other buyers. International passengers are often the most profitable airport users, especially in developing countries.

A PE acquisition is also associated with a decline in flight cancellations and an increase in the likelihood of receiving a quality award. When an airport shifts from non-PE private to PE ownership, its odds of winning an award rise by 6 percentage points. The average chance of winning such an award is just 2 percent.

The fees that airports charge to airlines rise after airport privatizations. When the buyer is a PE firm, there is also a push to deregulate government limits on those fees. For example, after three Australian airports were privatized in the mid-1990s, the price caps governing airport revenues were replaced with a system of price monitoring that allows the government to step in if fees or revenues become excessive.

The net effect of a PE acquisition is a rough doubling of an airport’s operating income, due mostly to higher revenues from airlines and retailers in the terminal rather than cost-cutting. The driving forces behind these improvements appear to be new management strategies, which likely includes greater compensation for managers, alongside investments in new capacity as well as better passenger services and technology.

My reading program for the half-year to come

To be clear, I very much like Lex Fridman’s proposed reading list, and I hope to reread many of those books. Most of them are much deeper than their reputations sometimes suggest, I might add. (For all the stupid whining about the list, odd that no one is asking why he doesn’t read more in Russian.)

If you are curious, here is what I have planned for the first half of the year to come:

1. Review copies that come my way — considerable numbers of them are great! And thank you all for sending.

2. Reading or rereading through the works of Jonathan Swift, for a paper I am writing. The topic is Swift on state-church relations, as it relates to some points from René Girard, and Swift on science, as it relates to Peter Thiel.

3. The history of American comedy and stand-up, as relates to a future CWT with Noam Dworman.

4. What is recommended to me by credible others, throughout the course of the year.

5. Many books in Indian history, for the 1600-1800 period, give or take, to prep for a likely CWT with William Dalrymple.

6. Reread and re-study of the New Testament, for CWTs with Tom Holland and David Bentley Hart. And for its own sake too.

7. More British and Irish history, from all eras. That will mean reading in clusters, rather than obsessing over particular volumes. Lately I’ve been reading about British cultural and architectural heritage issues, in part to get a different and more advanced bead on YIMBY-NIMBY conflicts, and also to relearn British history through the history of its major buildings.

8. Halldór Laxness and Jon Fosse are among the classic novelists in my queue; neither would be a reread. And I will start the new Javier Marías that comes out in English, it takes me too long in Spanish. Twentieth century Polish poetry, and more on the Polish history of ideas and literature. And I’ve vowed to read more museum catalogs (more picture books!). In German I will try Goethe’s Dichtung und Wahrheit, reread some Rilke, some Tuschel, and maybe some more Herta Müller.

9. Other stuff too, most of all what I buy spontaneously. Just yesterday Deepti Kapoor’s Age of Vice arrived at the house. There is some Eugene Vodolazkin on the way.

10. I’ve been reading a good deal lately about neural nets, transformers, and other AI-related topics, but not understanding it very well. YouTube videos have helped only a little. We’ll see if I ramp up those efforts or discard them. I am learning a lot from playing around with GPT, however, and maybe I’ll ask it what else I should read.

Beware the dangers of crypto regulation

That is the topic of my latest Bloomberg column, here is one bit:

No matter how strong the temptation, we should not overregulate.

Begin with two central facts. First, there are numerous ways for small and large investors to lose their money, including by investing in risky equities. Regulating crypto won’t end that danger. Second, despite being one of the largest financial frauds in history, FTX has not created systemic financial risk, which should be the main concern of regulators. And market forces already have made the risk from crypto much smaller: At the peak of crypto values in late 2021, crypto assets had a total value of about $2 trillion; as of this writing, that figure is about $845 billion.

And:

Crypto regulation is not easy to do well. If crypto institutions are treated like regular depository institutions, requiring heavy layers of capital and lots of legal staffing, crypto innovation is likely to dwindle. Such innovation has been more the province of eccentric geniuses than of mainstream regulated institutions. It is hard to imagine Satoshi Nakamoto or Vitalik Buterin at Goldman Sachs.

And what exactly should be the goal of crypto regulation? To make stablecoins truly stable in nominal value? Is that even possible? Or to encourage market participants to see those assets as inherently fluctuating in value?

Neither academic research nor market experience offers clear answers. With systemic risk currently low, perhaps it is better to wait and learn more before moving ahead with regulation. And on a purely practical level, very few members of Congress (or their staff members) have a good working knowledge of crypto and all of its current wrinkles and innovations.

There is much more of value at the link.

Tuesday assorted links

1. Age distribution of CWT guests.

2. ChatGPT and coding. And an explanation of transformers. And another guide here. And chat bot vs. the doctors.

3. Sopranos actor finds $10 million Guercino.

4. Richard Hanania defends Canadian euthanasia.

5. Are enrollments up at faith-based colleges?

6. Major new art and photography museum coming soon to Bangalore.

Why do so many prices end with 99 cents?

Firms arguably price at 99-ending prices because of left-digit bias—the tendency of consumers to perceive a $4.99 as much lower than a $5.00. Analysis of retail scanner data on 3500 products sold by 25 US chains provides robust support for this explanation. I structurally estimate the magnitude of left-digit bias and find that consumers respond to a 1-cent increase from a 99-ending price as if it were more than a 20-cent increase. Next, I solve a portable model of optimal pricing given left-digit biased demand. I use this model and other pricing procedures to estimate the level of left-digit bias retailers perceive when making their pricing decisions. While all retailers respond to left-digit bias by using 99-ending prices, their behavior is consistently at odds with the demand they face. Firms price as if the bias were much smaller than it is, and their pricing is more consistent with heuristics and rule-of-thumb than with optimization given the structure of demand. I calculate that retailers forgo 1 to 4 percent of potential gross profits due to this coarse response to left-digit bias.

That is from a forthcoming paper by Avner Strulov-Shlain. Via the excellent Kevin Lewis.
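For intuition on how a one-cent change can register as a twenty-cent change, here is a small Python sketch of a simple inattention model. Both the functional form and the parameter value are assumptions for illustration, not the paper's specification or estimate: the consumer sees the dollar digit in full but only a fraction (1 - theta) of the cents. The function names are hypothetical.

```python
def perceived_cents(price_cents: int, theta: float) -> float:
    """Perceived price in cents when the cents part is discounted by theta.

    theta = 0 means no bias; theta = 1 means the cents are ignored entirely.
    """
    dollars, cents = divmod(price_cents, 100)
    return dollars * 100 + (1 - theta) * cents


def perceived_jump(price_cents: int, theta: float) -> float:
    """Perceived change from raising the price by one cent."""
    return perceived_cents(price_cents + 1, theta) - perceived_cents(price_cents, theta)


theta = 0.2  # illustrative value only
print(round(perceived_jump(499, theta), 1))  # $4.99 -> $5.00 feels like 20.8 cents
print(round(perceived_jump(450, theta), 1))  # $4.50 -> $4.51 feels like 0.8 cents
```

In this toy model the jump across the dollar boundary is 1 + 99 × theta cents, so any theta above roughly 0.19 makes a one-cent increase from a 99-ending price feel like more than twenty cents, while one-cent moves elsewhere are perceived as slightly less than a cent.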