Rare Earths Aren’t Rare

Every decade or so there is a freakout about China’s monopoly in rare earths. The last time was in 2010 when Paul Krugman wrote:

You really have to wonder why nobody raised an alarm while this was happening, if only on national security grounds. But policy makers simply stood by as the U.S. rare earth industry shut down….The result was a monopoly position exceeding the wildest dreams of Middle Eastern oil-fueled tyrants.

…the affair highlights the fecklessness of U.S. policy makers, who did nothing while an unreliable regime acquired a stranglehold on key materials.

A few years later I pointed out that the crisis was exaggerated:

- The Chinese government might or might not have wanted to take advantage of their temporary monopoly power but Chinese producers did a lot to evade export bans both legally and illegally.

- Firms that had been using rare earths when they were cheap decided they didn’t really need them when they were expensive.

- New suppliers came on line as prices rose.

- Innovations created substitutes and ways to get more from using less.

Well, we are at it again. Tim Worstall, a rare earths dealer and fine economist, is the one to read:

…rare earths are neither rare nor earths, and they are nearly everywhere. The biggest restriction on being able to process them is the light radioactivity the easiest ores (so easy they are a waste product of other industrial processes — monazite say) contain. If we had rational and sensible rules about light radioactivity — alas, we don’t — then that end of the process would already be done. Passing Marco Rubio’s Thorium Act would, for example, make Florida’s phosphate gypsum stacks available and they have more rare earths in them than several sticks could be shaken at.

Some also point out that only China has the ores with dysprosium and terbium — needed for the newly vital high temperature magnets. This is also one of those things that is not true. A decade back, yes, we did collectively think that was true. The ores — “ionic clays” — were specific to South China and Burma. Collective knowledge has changed and now we know that they can exist anywhere granite has weathered in subtropical climes. I have a list somewhere of a dozen Australian claimed deposits and there is at least one company actively mining such in Chile and Brazil.

…No, this is not an argument that we should have subsidised for 40 years to maintain production. It’s going to be vastly cheaper to build new now than it would have been to carry deadbeats for decades. Quite apart from anything else, we’re going to build our new stuff at the edge of the current technological envelope — not just shiny but modern.

As Tyler says, do not underrate the “elasticity of supply.”

Predicting Job Loss?

Hardly a day goes by without a new prediction of job growth or destruction from AI and other new technologies. Predicting job growth is a growing industry. But how good are these predictions? For 80 years the US Bureau of Labor Statistics has forecasted job growth by occupation in its Occupational Outlook series. The forecasts were generally quite sophisticated albeit often not quantitative.

In 1974, for example, the BLS said one downward force for truck drivers was that “[T]he trend to large shopping centers rather than many small stores will reduce the number of deliveries required.” In 1963, however, they weren’t quite so accurate about pilots, writing, “Over the longer run, the rate of airline employment growth is likely to slow down because the introduction of a supersonic transport plane will enable the airlines to fly more traffic without corresponding expansion in the number of airline planes and workers…”. Sad!

In a new paper, Maxim Massenkoff collects all this data and makes it quantifiable with LLM assistance. What he finds is that the Occupational Outlook performed reasonably well: occupations that were forecast to grow strongly did grow significantly more than those forecast to grow slowly or decline. But was there alpha? A little but not much.

…these predictions were not that much better than a naive forecast based only on growth over the previous decade. One implication is that, in general, jobs go away slowly: over decades rather than years. Historically, job seekers have been able to get a good sense of the future growth of a job by looking at what’s been growing in the past.

If past predictions were only marginally better than simple extrapolations it’s hard to believe that future predictions will perform much better. At least, that is my prediction.
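To make the “alpha” question concrete, here is a minimal sketch of the comparison: score an expert forecast and a naive “next decade equals last decade” forecast against realized growth. The occupations and every number below are hypothetical, invented purely for illustration; they are not taken from the BLS or from Massenkoff’s paper, and mean absolute error is just one reasonable scoring choice.

```python
from statistics import mean

# Hypothetical decade growth rates in percent for five made-up occupations.
# Columns: growth over the prior decade, expert forecast, actual growth.
# None of these numbers come from the BLS or from Massenkoff's paper.
OCCUPATIONS = {
    "occupation_a": (12.0, 15.0, 14.0),
    "occupation_b": (-5.0, -7.0, -6.0),
    "occupation_c": (3.0, 3.0, 4.0),
    "occupation_d": (20.0, 18.0, 16.0),
    "occupation_e": (-10.0, -8.0, -9.0),
}


def mean_abs_error(predictions, actuals):
    """Average absolute gap between predicted and realized growth."""
    return mean(abs(p - a) for p, a in zip(predictions, actuals))


prior = [row[0] for row in OCCUPATIONS.values()]
expert = [row[1] for row in OCCUPATIONS.values()]
actual = [row[2] for row in OCCUPATIONS.values()]

# Naive forecast: the next decade looks exactly like the last one.
naive_mae = mean_abs_error(prior, actual)
expert_mae = mean_abs_error(expert, actual)

# "Alpha" here is simply how much error the expert removes relative to naive.
alpha = naive_mae - expert_mae

print(f"naive MAE:  {naive_mae:.2f}")
print(f"expert MAE: {expert_mae:.2f}")
print(f"alpha:      {alpha:.2f}")
```

In this toy data the expert beats the naive rule, but only modestly, which is the pattern described above.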

Democracy and Capitalism are Mutually Reinforcing

Many people argue that democracy is incompatible with capitalism but they differ on whether democracy will kill capitalism or whether capitalism will kill democracy. Peter Thiel, for example, famously said, “I no longer believe that freedom and democracy are compatible.” Thiel’s argument has a long pedigree. The classical economists from Adam Smith to John Stuart Mill all worried that democracy would kill capitalism. Even Marx and Engels agreed with the analysis, arguing that under democracy “The proletariat will use its political supremacy to wrest, by degree, all capital from the bourgeoisie, to centralize all instruments of production in the hands of the State…” They differed only in welcoming such a revolution.

On the other side of the aisle we have the moderns such as Robert Reich and Joseph Stiglitz who argue in Reich’s words that Capitalism is Killing Democracy as “Corporations” and “billionaire capitalists have invested ever greater sums in lobbying, public relations, and even bribes and kickbacks, seeking laws that give them a competitive advantage over their rivals…”

A third argument, consistent with the views of Hayek, Mises, Friedman and others, is that capitalism and democracy are compatible and even mutually reinforcing. Ludwig von Mises, for example, argued that “Liberalism must necessarily demand democracy as its political corollary.”

My latest paper (WP version) (with Vincent Geloso) is in the new book, Can Democracy and Capitalism be Reconciled? We take the third view and show empirically that capitalism and democracy go hand in hand. We also provide some mechanisms for this correlation which I may discuss in a future post.

The data is very clear that democracy and capitalism go hand in hand. The figure below, for example, uses the Fraser Institute’s Economic Freedom Index to measure capitalism and the Varieties of Democracy Index to measure democracy (we use liberal democracy for convenience but show the correlations are strong with any variety of democracy).

Every major democracy is a capitalist country and virtually every capitalist country is a democracy (Singapore and Hong Kong being the only two exceptions). Moreover, the upper left region–countries with a lot of democracy and low economic freedom, i.e. state control of the economy–what we might call the “Democratic Socialism” region–is empty.

We show further in the paper that changes in democracy are positively correlated with changes in economic freedom. We can see this very clearly by examining a natural experiment–the fall of the Berlin Wall. The fall of the Berlin Wall created a big positive shock to democracy which was followed by large and sustained increases in economic freedom.

It is sometimes argued that only an authoritarian regime is capable of “imposing” big increases in economic freedom. This is clearly false, but it is true that there have been large increases in economic freedom under some authoritarian regimes. In the paper we look at the biggest such cases: Peru, Nicaragua, Uganda and Chile. The case of Peru carries some general lessons.

Peru began in 1970 with an authoritarian regime and only modest economic freedom. Economic freedom declined under an authoritarian regime and to levels well below those of any democracy. Modest increases in democracy brought modest increases in economic freedom. Under the authoritarian Fujimori regime there were large increases in economic freedom which in the 2000s were ratified, solidified and strengthened under democratic governments.

What we learn from this brief history is that authoritarian regimes can decrease as well as increase economic freedom. Indeed, one reason we sometimes see big increases in economic freedom under authoritarian regimes is simply that they are starting from the wreckage left by the previous regime. It’s easy to increase economic freedom a lot when you begin with a base level far below that of any democratic regime. Moreover, the Peru case is representative in that when a democratic regime is established it typically does not reject but instead ratifies and strengthens economic freedom.

What accounts for the correlation between economic freedom and democracy? The paper discusses a number of mechanisms of which I will only mention two here. Consider two ways to get rich, redistribution and growth. Redistribution can make a minority rich at the expense of a majority. A dictator can live in luxury amid national misery. But no redistribution scheme can enrich the majority; only growth can. Broad prosperity comes not from dividing wealth, but from creating it through pro-growth, capitalist policies. As a result, in a democracy, the rulers, the demos, can only get rich through growth and this provides some incentive to think about capitalism and economic freedom. The incentive is not a guarantee, of course. Democratic voters can vote for bad policies, but if they want to get rich they have to think about growth and that means capitalism.

The second reason for the correlation is a negative one: democratic socialism collapses into authoritarian socialism. As Robert Dahl argued:

It is not the inefficiencies of a centrally planned economy…that are most injurious to democratic prospects. It is the economy’s social and political consequences.

A centrally planned economy puts the resources of the entire economy at the disposal of government leaders. …“power corrupts and absolute power corrupts absolutely.”

A centrally planned economy issues an outright invitation to government leaders, written in bold letters: You are free to use all these economic resources to consolidate and maintain your power!

The bottom line is if you care about economic freedom, democracy is the way to go and if you care about democracy, economic freedom is the way to go.

Read the paper (WP version) for more.

We Turned the Light On—and the AI Looked Back

Jack Clark, Co-founder of Anthropic, has written a remarkable essay about his fears and hopes. It’s not the usual kind of thing one reads from a tech leader:

I remember being a child and after the lights turned out I would look around my bedroom and I would see shapes in the darkness and I would become afraid – afraid these shapes were creatures I did not understand that wanted to do me harm. And so I’d turn my light on. And when I turned the light on I would be relieved because the creatures turned out to be a pile of clothes on a chair, or a bookshelf, or a lampshade.

Now, in the year of 2025, we are the child from that story and the room is our planet. But when we turn the light on we find ourselves gazing upon true creatures, in the form of the powerful and somewhat unpredictable AI systems of today and those that are to come. And there are many people who desperately want to believe that these creatures are nothing but a pile of clothes on a chair, or a bookshelf, or a lampshade. And they want to get us to turn the light off and go back to sleep.

…We are growing extremely powerful systems that we do not fully understand. Each time we grow a larger system, we run tests on it. The tests show the system is much more capable at things which are economically useful. And the bigger and more complicated you make these systems, the more they seem to display awareness that they are things.

It is as if you are making hammers in a hammer factory and one day the hammer that comes off the line says, “I am a hammer, how interesting!” This is very unusual!

…I am also deeply afraid. It would be extraordinarily arrogant to think working with a technology like this would be easy or simple.

My own experience is that as these AI systems get smarter and smarter, they develop more and more complicated goals. When these goals aren’t absolutely aligned with both our preferences and the right context, the AI systems will behave strangely.

…we are not yet at “self-improving AI”, but we are at the stage of “AI that improves bits of the next AI, with increasing autonomy and agency”. And a couple of years ago we were at “AI that marginally speeds up coders”, and a couple of years before that we were at “AI is useless for AI development”. Where will we be one or two years from now?

And let me remind us all that the system which is now beginning to design its successor is also increasingly self-aware and therefore will surely eventually be prone to thinking, independently of us, about how it might want to be designed.

…In closing, I should state clearly that I love the world and I love humanity. I feel a lot of responsibility for the role of myself and my company here. And though I am a little frightened, I experience joy and optimism at the attention of so many people to this problem, and the earnestness with which I believe we will work together to get to a solution. I believe we have turned the light on and we can demand it be kept on, and that we have the courage to see things as they are.

Clark is clear that we are growing intelligent systems that are more complex than we can understand. Moreover, these systems are becoming self-aware–that is a fact, even if you think they are not sentient (but beware hubris on the latter question).

The Economics Nobel goes to Mokyr, Aghion and Howitt

The Nobel prize goes to Joel Mokyr, the economic historian of the industrial revolution, and the growth theorists Philippe Aghion and Peter Howitt, best known for their Schumpeterian model of economic growth.

Here’s a good quote from Nobelist Joel Mokyr’s The Lever of Riches:

Yet the central message of this book is not unequivocally optimistic. History provides us with relatively few examples of societies that were technologically progressive. Our own world is exceptional, though not unique, in this regard. By and large, the forces opposing technological progress have been stronger than those striving for changes. The study of technological progress is therefore a study of exceptionalism, of cases in which as a result of rare circumstances, the normal tendency of societies to slide toward stasis and equilibrium was broken. The unprecedented prosperity enjoyed today by a substantial proportion of humanity stems from accidental factors to a degree greater than is commonly supposed. Moreover, technological progress is like a fragile and vulnerable plant, whose nourishing is not only dependent on the appropriate surroundings and climate, but whose life is almost always short. It is highly sensitive to the social and economic environment and can easily be arrested by relatively small external changes. If there is a lesson to be learned from the history of technology it is that Schumpeterian growth, like the other forms of economic growth, cannot and should not be taken for granted.

Aghion and Howitt’s Schumpeterian model of economic growth shares with Romer the idea that the key factors of economic growth must be modelled, so that growth is endogenous to the model (unlike Solow, where growth is primarily driven by technology, an unexplained exogenous factor). In Romer’s model, however, growth is primarily horizontally driven by new varieties whereas in Aghion and Howitt growth comes from creative destruction, from new ideas, technologies and firms replacing old ideas, technologies and firms.

Thus, Aghion and Howitt’s model lends itself to micro-data on firm entry and exit of the kind pioneered by Haltiwanger and others (whom Tyler and I have argued deserve a future Nobel). Economic growth is not just about new ideas but about how well an economy can reallocate production to the firms using the new ideas. Consider the picture below, based on data from Bartelsman, Haltiwanger, and Scarpetta. It shows the covariance of labor productivity and firm size. In the United States highly productive firms tend to be big but this is much less true in other economies. In the UK during this period (1993-2001) the covariance of productive and big is considerably less than half that of the United States. In Romania at this time the covariance was even negative–indicating that the big firms were among the least productive. Why? Well, in Romania this was the end of the communist era when big, unproductive, government-run behemoths dominated the economy. As Romania moved towards markets the covariance between labor productivity and firm size increased. That is, the economy became more productive as it reallocated labor from low productivity firms to high productivity firms.
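For readers who want to see what a productivity-size covariance measures, here is a minimal sketch. It assumes the statistic is an Olley-Pakes style cross term: the sum, across firms, of (employment share minus average share) times (productivity minus average productivity). That is my assumption about the construction, and the two four-firm “economies” below are hypothetical, not data from Bartelsman, Haltiwanger, and Scarpetta.

```python
def op_covariance(productivity, employment):
    """Olley-Pakes style cross term between firm size and productivity.

    Positive values mean that more productive firms employ a larger
    share of workers; negative values mean the big firms are the
    unproductive ones.
    """
    n = len(productivity)
    total_employment = sum(employment)
    shares = [e / total_employment for e in employment]
    mean_productivity = sum(productivity) / n
    mean_share = 1.0 / n
    return sum(
        (s - mean_share) * (p - mean_productivity)
        for s, p in zip(shares, productivity)
    )


def weighted_productivity(productivity, employment):
    """Economy-wide productivity, weighting each firm by employment share."""
    total_employment = sum(employment)
    return sum(p * e / total_employment for p, e in zip(productivity, employment))


# Hypothetical firms with identical productivity in both economies.
productivity = [1.0, 2.0, 3.0, 4.0]
market_employment = [10, 20, 30, 40]   # workers concentrated in the best firms
planned_employment = [40, 30, 20, 10]  # workers concentrated in the worst firms

market_cov = op_covariance(productivity, market_employment)
planned_cov = op_covariance(productivity, planned_employment)

print("market economy: ", market_cov, weighted_productivity(productivity, market_employment))
print("planned economy:", planned_cov, weighted_productivity(productivity, planned_employment))
```

The firms are equally good in both cases; only the allocation of labor differs. Aggregate productivity equals the simple average across firms plus this cross term, so moving workers toward the better firms raises output without a single new idea.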

Aghion and Howitt’s work centers on how new ideas emerge and how creative destruction turns those ideas into real economic change through the birth and death of firms. But creative destruction is never painless: growth requires that some firms fail and that labor be displaced so resources can flow to new, more productive uses. Aghion and Howitt will likely point to the United States as dealing with this process better than Europe. Business dynamism has declined in Europe relative to the United States, a worrying fact given that business dynamism has also declined in the United States. Nevertheless, the US has a more flexible labor market and appears more open to both the birth of new firms (venture capital) and the deaths of older firms. Yet, in both the United States and around the world the differences between high productivity and low productivity firms appear to be growing. That is, the dispersion in productivity is growing, which means that the good ideas are not spreading as quickly as they once did. Aghion and Howitt’s work gives us a model for thinking about these kinds of issues–see, for example, Ten Facts on Business Dynamism and Lessons from Endogenous Growth Theory.

Hanson and Buterin for Nobel Prize in Economics

Intercontinental Exchange (ICE.N), the company that owns the NYSE, just announced a $2 billion investment in Polymarket, the Ethereum-blockchain-based prediction markets platform. This is a tremendous milestone for prediction markets and for blockchains.

Shayne Coplan the founder of Polymarket writes:

The Polymarket origin story is funny because it’s a rare case of the dream being identical to how things played out. If I learned one thing, it’s that bold ideas are everywhere, hidden in plain sight. It just takes someone crazy enough to spend their life willing it into existence. That’s entrepreneurship: willing things into existence.

I remember reading Robin Hanson’s literature on prediction markets and thinking – man, this is too good of an idea to just exist in whitepapers. There were a million reasons why it shouldn’t work, countless arguments of why not to do it, and the odds were against us, but we had to try.

At the onset of the pandemic, I quite literally had nothing to lose: 21, running out of money, 2.5 years since I dropped out and nothing to show for it. But I knew we were entering an era where ways to find truth would matter more than ever, and Polymarket could play a critical role in that. After all, nothing is more valuable than the truth. It’s still a work in progress, but we’re honored to have made the impact we have thus far.

The NYSE will use Polymarket data to sharpen forecasts. The next step is decision markets. Futarchy, for example, just announced a prediction market in the value of Tesla shares if Musk’s compensation package is approved versus if it is not approved. Information like this can be used to improve decisions. To see how powerful this can be, broaden it to Hanson’s 1996 idea of a Dump the CEO Market, a market in the value of a company’s shares with and without the current CEO. Very powerful. And that is only the beginning.

In my 2002 book, Entrepreneurial Economics: Bright Ideas from the Dismal Science, which featured Robin’s paper on Decision Markets, I wrote:

If Hanson is right about the benefits of decision markets, then perhaps one day, instead of quoting an expert, the New York Times editorial section will refer to the latest quote on “health care plan A” available in the business pages.

That day is upon us! It probably will not happen on Monday but it is time to give Robin Hanson, the father of prediction markets, and Vitalik Buterin, the co-father of Ethereum, a Nobel prize in economics for applied mechanism design.

Addendum: My a16z podcast with Scott Duke Kominers on prediction markets.

MR Podcast: Our Favorite Models, Session 1

The Marginal Revolution Podcast is back and this time Tyler and I discuss some of our favorite models or ways of thinking about the world. We begin with Spence on Monopolies, Harberger on Incidence and Solow on Growth. Here’s one bit:

TABARROK: You have an increase in the corporate tax. What happens?

COWEN: One lesson of the Harberger model is actually anything can happen. Who bears the burden? Is it capital, is it labor, or is it consumers? In the simplest versions of the model, what you have is both a substitution, capital versus labor in the taxed sector, and you have substitutions across sectors. You have a whole series of different effects. One of the first and simplest lessons from Harberger, which is really neat, but people just hadn’t gotten it before, is if you tax the corporate sector under a lot of reasonably general assumptions, the rate of return on capital goes down equally in both sectors, which to us is standard fare.

What will happen is capital flows out of the corporate sector into the noncorporate sector, that lowers the marginal rate of return on capital in the nontaxed sector, and simply the notion of capital can suffer in both sectors. Again, a revelation, maybe self-evident to us having written this principles textbook, but it shocked people. The partial equilibrium models never show that.

TABARROK: When you tax the corporations, you’re also taxing the mom-and-pops.

COWEN: And the nonprofits and whatever, wherever else the capital might flow.

TABARROK: Yes. This was one of the first useful applications of general equilibrium.

COWEN: That’s right. On that, it’s really held up. International affairs, one of the lessons is if you turn the other sector or add another sector that’s international, basically small economies cannot afford to tax capital at very high rates because so much of the capital will flow elsewhere.

TABARROK: Instead of it flowing to the noncorporate sector, it just flows out of the country.

COWEN: That’s right, which is like the other sector not affected by this particular tax. In 1962, a lot of small economies treated their capital very badly. Many still do, but there’s been a real revolution where even fairly statist economies—like the Nordics over time shifted to treating capital income pretty generously. Singapore would be another example. Again, it’s simple once you know it, but the Harberger model taught us that.

TABARROK: What about the labor margin?

COWEN: The debate since then has been how much of the tax is borne by capital and how much is borne by labor? On one hand, the Harberger model teaches you anything can happen. That’s useful intuitively. In fact, when you investigate it empirically, it’s what you would expect to happen that mostly happens. That is, capital does bear more of the tax than labor.

TABARROK: Labor bears a chunk.

COWEN: Yes. A typical estimate might be a third. There’s no free lunch from the point of view of labor. Furthermore, a lot of the capital is owned by labor through pension funds. If you take that into account, I don’t have an exact number for you, but I think it’s plausible to think labor might bear half the burden of the corporate tax. Again, you can show that pretty simply. The estimates are not exact, but just a big advance for economics. If you ask me, what ideas do I use all the time, that’s one of them.

The Harberger basic model, it doesn’t have land, but there’s the issue of what if you have three factors in the model, you would start with the Harberger model. If you’re a NIMBY who thinks there’s this kind of land monopoly in a city or land rents are very high because we stifle building, the incidence of a lot of taxes, even in general equilibrium models, can fall on the land for a city.

TABARROK: Yes, because the land can’t escape.

COWEN: That’s right.

TABARROK: As we say in the textbook, elasticity is equal to escape, right?
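The capital-flow logic in the discussion above can be sketched in a few lines. This is a deliberately stripped-down toy, not the Harberger model itself: I assume two sectors with identical production `K**alpha`, labor fixed at one unit in each sector, a fixed total capital stock, and a tax on the return to corporate capital only. The function name and all parameter values are hypothetical.

```python
def allocate_capital(tax_rate, total_capital=2.0, alpha=0.3):
    """Split a fixed capital stock so after-tax returns are equal.

    Toy assumptions (not Harberger's full model): each sector produces
    K**alpha with labor fixed, so the marginal product of capital is
    alpha * K**(alpha - 1). Capital moves until
        (1 - tax_rate) * MPK_corporate == MPK_noncorporate.
    """
    # Solving the equal-return condition gives the ratio K_non / K_corp.
    ratio = (1.0 - tax_rate) ** (1.0 / (alpha - 1.0))
    k_corp = total_capital / (1.0 + ratio)
    k_non = total_capital - k_corp
    r_corp_after_tax = (1.0 - tax_rate) * alpha * k_corp ** (alpha - 1.0)
    r_non = alpha * k_non ** (alpha - 1.0)
    return k_corp, k_non, r_corp_after_tax, r_non


no_tax = allocate_capital(tax_rate=0.0)
with_tax = allocate_capital(tax_rate=0.3)

for label, (k_corp, k_non, r_corp, r_non) in (("no tax", no_tax), ("30% tax", with_tax)):
    print(f"{label}: K_corp={k_corp:.3f} K_non={k_non:.3f} "
          f"after-tax r_corp={r_corp:.4f} r_non={r_non:.4f}")
```

With the tax, capital leaves the corporate sector, and the return falls in the untaxed sector too: taxing the corporations also taxes the mom-and-pops. Because labor cannot move in this toy, it says nothing about how the burden splits between capital and labor, which is where the full model and the empirical work come in.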

Here’s the episode. Subscribe now to take a small step toward a much better world: Apple Podcasts | Spotify | YouTube.

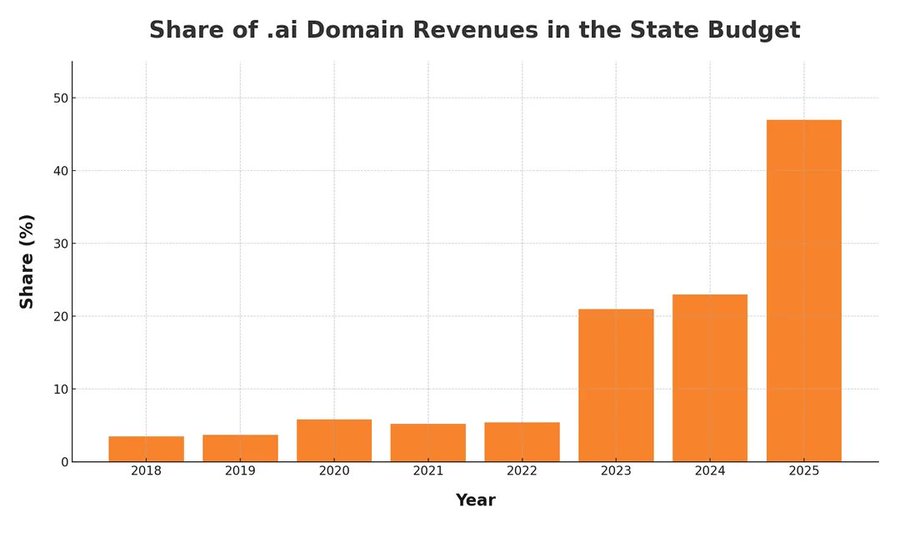

The ai Boom

The tiny country of Anguilla (pop. 15,000) has .ai as its official country-code top-level domain on the internet. Domain name registrations have surged from 48,000 in 2018 to 870,000 in the year to date and that source of revenue alone now accounts for nearly 50% of state revenues.

Hat tip: Cremieux based on this analysis.

AI Scientists in the Lab

Today, we introduce Periodic Labs. Our goal is to create an AI scientist.

Science works by conjecturing how the world might be, running experiments, and learning from the results.

Intelligence is necessary, but not sufficient. New knowledge is created when ideas are found to be consistent with reality. And so, at Periodic, we are building AI scientists and the autonomous laboratories for them to operate.

…Autonomous labs are central to our strategy. They provide huge amounts of high-quality data (each experiment can produce GBs of data!) that exists nowhere else. They generate valuable negative results which are seldom published. But most importantly, they give our AI scientists the tools to act.

…One of our goals is to discover superconductors that work at higher temperatures than today’s materials. Significant advances could help us create next-generation transportation and build power grids with minimal losses. But this is just one example — if we can automate materials design, we have the potential to accelerate Moore’s Law, space travel, and nuclear fusion.

Our founding team co-created ChatGPT, DeepMind’s GNoME, OpenAI’s Operator (now Agent), the neural attention mechanism, MatterGen; have scaled autonomous physics labs; and have contributed to important materials discoveries of the last decade. We’ve come together to scale up and reimagine how science is done.

The AIs can work 24 hours a day, 365 days a year and with labs under their control the feedback will be quick. In nine hours, AlphaZero taught itself chess and then trounced the then world champion Stockfish 8 (Elo around 3378 compared to Magnus Carlsen’s high of 2882). That was in 2017. In general, experiments are more open-ended than chess but not necessarily in every domain. Moreover, context windows and capabilities have grown tremendously since 2017.

In other AI news, AI can be used to generate dangerous proteins like ricin and current safeguards are not very effective:

Microsoft bioengineer Bruce Wittmann normally uses artificial intelligence (AI) to design proteins that could help fight disease or grow food. But last year, he used AI tools like a would-be bioterrorist: creating digital blueprints for proteins that could mimic deadly poisons and toxins such as ricin, botulinum, and Shiga.

Wittmann and his Microsoft colleagues wanted to know what would happen if they ordered the DNA sequences that code for these proteins from companies that synthesize nucleic acids. Borrowing a military term, the researchers called it a “red team” exercise, looking for weaknesses in biosecurity practices in the protein engineering pipeline.

The effort grew into a collaboration with many biosecurity experts, and according to their new paper, published today in Science, one key guardrail failed. DNA vendors typically use screening software to flag sequences that might be used to cause harm. But the researchers report that this software failed to catch many of their AI-designed genes—one tool missed more than 75% of the potential toxins.

Solve for the equilibrium?

Lerner Symmetry Bites

President Trump recently boasted:

.@POTUS: “We’re going to take some of that tariff money that we made — we’re going to give it to our farmers… We’re going to make sure that our farmers are in great shape.”

A practically textbook example of Lerner Symmetry! In an earlier post, I highlighted Doug Irwin’s Three Simple Principles of Trade Policy. Principle One is Lerner Symmetry—a tax on imports is a tax on exports:

Exports are necessary to generate the earnings to pay for imports, or exports are the goods a country must give up in order to acquire imports….if foreign countries are blocked in their ability to sell their goods in the United States, for example, they will be unable to earn the dollars they need to purchase U.S. goods.

…The equivalence of export and import taxes is not an obvious proposition, and it is often counterintuitive to most people. Imagine taking a poll of average Americans and asking the following question: “Should the United States impose import tariffs on foreign textiles to prevent low-wage countries from harming thousands of American textile workers?” Some fraction, perhaps even a sizeable one, of the respondents would surely answer affirmatively. If asked to explain their position, they would probably reply that import tariffs would create jobs for Americans at the expense of foreign workers and thereby reduce domestic unemployment. Suppose you then asked those same people the following question: “Should the United States tax the exportation of Boeing aircraft, wheat and corn, computers and computer software, and other domestically produced goods?” I suspect the answer would be a resounding and unanimous “No!” After all, it would be explained, export taxes would destroy jobs and harm important industries. And yet the Lerner symmetry theorem says that the two policies are equivalent in their economic effects.

Thus, President Trump is having to subsidize farmers because farmers are exporters. Import tariffs make it harder for exporters to sell abroad. Using tariff revenue to subsidize the losses of exporters is a textbook illustration of Lerner Symmetry because the export losses flow directly from the tax on imports! The irony is that President Trump parades the subsidies as a victory while in fact they are simply damage control for a policy he created.
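For those who like to see the symmetry on paper, here is the standard two-good version, sketched under the usual textbook assumptions (one import good, one export good, given world prices, balanced trade). The notation is mine, not Irwin’s.

```latex
% World prices: p_M^* for the import good, p_X^* for the export good.
% Domestic prices: p_M and p_X. Ad valorem tax rate: t.

% Case 1: import tariff at rate t.
p_M = (1+t)\,p_M^*, \qquad p_X = p_X^*
\quad\Longrightarrow\quad
\frac{p_M}{p_X} = (1+t)\,\frac{p_M^*}{p_X^*}

% Case 2: export tax at rate t (exporters keep p_X; foreigners pay p_X^*).
p_M = p_M^*, \qquad (1+t)\,p_X = p_X^*
\quad\Longrightarrow\quad
\frac{p_M}{p_X} = (1+t)\,\frac{p_M^*}{p_X^*}
```

Production and consumption decisions depend on the domestic relative price, and that price is the same in both cases. A tariff is a tax on exporters even though no one ever writes “export tax” on the bill.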

AI and the FDA

Dean Ball has an excellent survey of the AI landscape and policy that includes this:

The speed of drug development will increase within a few years, and we will see headlines along the lines of “10 New Computationally Validated Drugs Discovered by One Company This Week,” probably toward the last quarter of the decade. But no American will feel those benefits, because the Food and Drug Administration’s approval backlog will be at record highs. A prominent, Silicon Valley-based pharmaceutical startup will threaten to move to a friendlier jurisdiction such as the United Arab Emirates, and they may in fact do it.

Eventually, I expect the FDA and other regulators to do something to break the logjam. It is likely to be perceived as reckless by many, including virtually everyone in the opposite party of whomever holds the White House at the time it happens. What medicines you consume could take on a techno-political valence.

Agreed—but the nearer-term upside is repurposing. Once a drug has been FDA approved for one use, physicians can prescribe it for any use. New uses for old drugs are often discovered, so the off-label market is large. The key advantage of off-label prescribing is speed: a new use can be described in the medical literature and physicians can start applying that knowledge immediately, without the cost and delay of new FDA trials. When the RECOVERY trial provided evidence that an already-approved drug, dexamethasone, was effective against some stages of COVID, for example, physicians started prescribing it within hours. If dexamethasone had had to go through new FDA-efficacy trials a million people would likely have died in the interim. With thousands of already approved drugs there is a significant opportunity for AI to discover new uses for old drugs. Remember, every side-effect is potentially a main effect for a different condition.

On Ball’s main point, I agree: there is considerable room for AI-discovered drugs, and this will strain the current FDA system. The challenge is threefold.

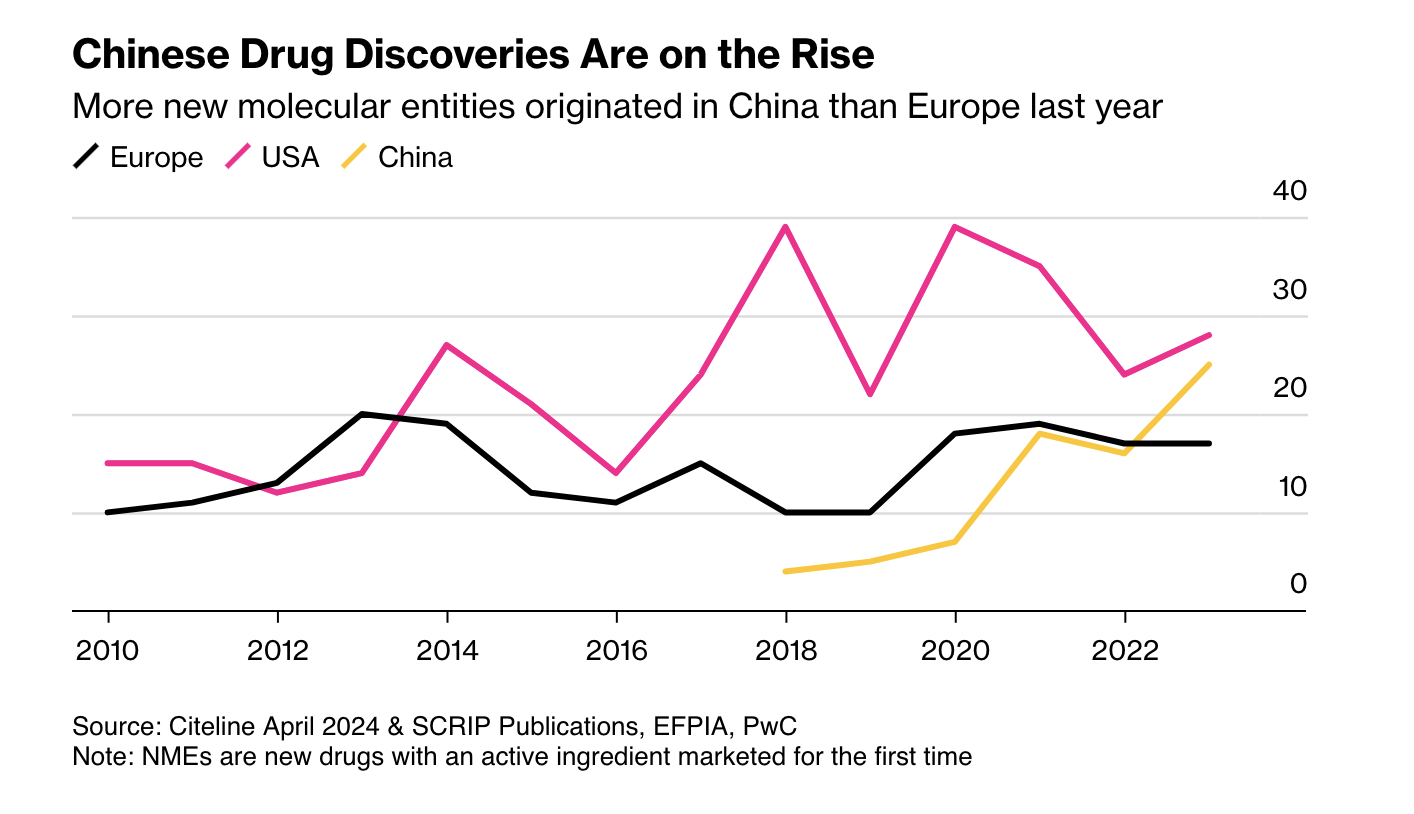

First, as Ball notes, more candidate drugs at lower cost means other regulators may become competitive with the FDA. China is the obvious case: it is now large and wealthy enough to be an independent market, and its regulators have streamlined approvals and improved clinical trials. More new drugs now emerge from China than from Europe.

Second, AI pushes us toward rational drug design. RCTs were a major advance, but they are in some sense primitive. Once a mechanic has diagnosed a problem, the mechanic doesn’t run an RCT to determine the solution. The mechanic fixes the problem! As our knowledge of the body grows, medicine should look more like car repair: precise, targeted, and not reliant on averages.

Closely related is the rise of personalized medicine. As I wrote in A New FDA for the Age of Personalized, Molecular Medicine:

Each patient is a unique, dynamic system and at the molecular level diseases are heterogeneous even when symptoms are not. In just the last few years we have expanded breast cancer into first four and now ten different types of cancer and the subdivision is likely to continue as knowledge expands. Match heterogeneous patients against heterogeneous diseases and the result is a high dimension system that cannot be well navigated with expensive, randomized controlled trials. As a result, the FDA ends up throwing out many drugs that could do good.

RCTs tell us about average treatment effects, but the more we treat patients as unique, the less relevant those averages become.

AI holds a lot of promise for more effective, better targeted drugs but the full promise will only be unlocked if the FDA also adapts.

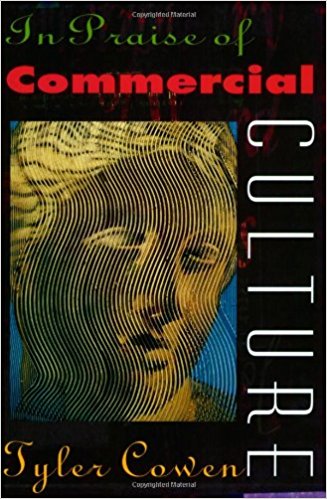

The Return of the MR Podcast: In Praise of Commercial Culture

The Marginal Revolution Podcast is back with new episodes! We begin with what I think is our best episode to date. We revisit Tyler’s 1998 book In Praise of Commercial Culture. This is the book that put Tyler on the map as a public intellectual. Tyler and I also wrote a paper, An Economic Theory of Avant-Garde and Popular Art, or High and Low Culture, exploring themes from the book. But does In Praise of Commercial Culture stand the test of time? You be the judge!

Here’s one bit:

TABARROK: Here’s a quote from the book, “Art and democratic politics, although both beneficial activities, operate on conflicting principles.”

COWEN: So much of democratic politics is based on consensus. So much of wonderful art, especially new art, is based on overturning consensus, maybe sometimes offending people. All this came to a head in the 1990s, disputes over what the National Endowment for the Arts in America was funding. Some of it, of course, was obscene. Some of it was obscene and pretty good. Some of it was obscene and terrible.

What ended up happening is the whole process got bureaucratized. The NEA ended up afraid to make highly controversial grants. They spend more on overhead. They send more around to the states. Now, it’s much more boring. It seems obvious in retrospect. The NEA did a much better job in the 1960s, right after it was founded, when it was just a bunch of smart people sitting around a table saying, “Let’s send some money to this person,” and then they’d just do it, basically.

TABARROK: Right, so the greatness cannot survive the mediocrity of democratic consensus.

COWEN: There are plenty of good cases where government does good things in the arts, often in the early stages of some process before it’s too politicized. I think some critics overlook that or don’t want to admit it.

TABARROK: One of the interesting things in your book was that the whole history of the NEA, this recreates itself, has recreated itself many times in the past. The salon during the French painting Renaissance, the impressionists hated the salon, right?

COWEN: Right. And had typically turned them away because the works weren’t good enough.

TABARROK: There could be rent-seeking going on, right? The artists get control. Sometimes it’s democratic politics, but sometimes it’s some clique of artists who get control and then funnel the money to their friends.

COWEN: French cinematic subsidies would more fit that latter model. It’s not so much that the French voters want to pay for those movies, but a lot of French government is controlled by elites. The elites like a certain kind of cinema. They view it as a counterweight to Hollywood, preserving French culture. The French still pay for or, indirectly by quota, subsidize a lot of films that just don’t really even get released. They end up somewhere and they just don’t have much impact flat out.

Here’s the episode. Subscribe now to take a small step toward a much better world: Apple Podcasts | Spotify | YouTube.

The United States is Starved for Talent, Re-Upped

I wrote this post in 2020. Time to re-up:

The US offers a limited number of H-1B visas annually. These are temporary 3-6 year visas that allow firms to hire high-skill workers. In many years, demand exceeds the supply, which is capped at 85,000, and in these years USCIS randomly selects which visas to approve. The random selection is key to a new NBER paper by Dimmock, Huang and Weisbenner (published here). What’s the effect on a firm of getting lucky and winning the lottery?

We find that a firm’s win rate in the H-1B visa lottery is strongly related to the firm’s outcomes over the following three years. Relative to ex ante similar firms that also applied for H-1B visas, firms with higher win rates in the lottery are more likely to receive additional external funding and have an IPO or be acquired. Firms with higher win rates also become more likely to secure funding from high-reputation VCs, and receive more patents and more patent citations. Overall, the results show that access to skilled foreign workers has a strong positive effect on firm-level measures of success.

Overall, getting (approximately) one extra high-skilled worker causes a 23% increase in the probability of a successful IPO within five years (a 1.5 percentage point increase in the baseline probability of 6.6%). That’s a huge effect. Remember, these startups have access to a labor pool of 160 million workers. For most firms, the next best worker can’t be appreciably different than the first-best worker. But for the 2,000 or so tech startups the authors examine, the difference between the world’s best and the US best is huge. Put differently, on some margins the US is starved for talent.
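
To see how the 23% figure and the 1.5 percentage point figure fit together, here is a minimal sketch of the arithmetic. It uses only the two numbers quoted above; the function and variable names are illustrative and do not come from the paper.

```python
def relative_increase(baseline: float, pp_increase: float) -> float:
    """Relative change implied by a percentage-point increase over a baseline."""
    return pp_increase / baseline


# Figures quoted in the post (not re-derived from the paper itself).
BASELINE_IPO_PROB = 0.066   # 6.6% baseline probability of a successful IPO
PP_INCREASE = 0.015         # 1.5 percentage point increase

rel = relative_increase(BASELINE_IPO_PROB, PP_INCREASE)
new_prob = BASELINE_IPO_PROB + PP_INCREASE

print(f"Relative increase: {rel:.1%}")        # about 23%
print(f"New IPO probability: {new_prob:.1%}")  # about 8.1%
```

The same effect sounds small in percentage points and large in relative terms, because the baseline probability of an IPO is low to begin with.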

Of course, if we play our cards right the world’s best can be the US best.

Celebrate Vishvakarma: A Holiday for Machines, Robots, and AI

Most holidays celebrate people, gods or military victories. Today is India’s Vishvakarma Puja, a celebration of machines. In India on this day, workers clean and honor their equipment and engineers pay tribute to Vishvakarma, the god of architecture, engineering and manufacturing.

Call it a celebration of Solow and a reminder that capital, not just labor, drives growth.

Capital today isn’t just looms and tractors—it’s robots, software, and AI. These are the new force multipliers, the machines that extend not only our muscles but our minds. To celebrate Vishvakarma is to celebrate tools, tool makers and the capital that makes us productive.

We have Labor Day for workers and Earth Day for nature. Vishvakarma Day is for the machines. So today, don’t thank Mother Earth; thank the machines, reflect on their power and productivity, and be grateful for all that they make possible. Capital is the true source of abundance.

Vishvakarma Day should be our national holiday for abundance and progress.

Hat tip: Nimai Mehta.

The British War on Slavery

In August of 1833 the British passed legislation abolishing slavery within the British Empire and putting more than 800,000 enslaved Africans on the path to freedom. To make this possible, the British government paid a huge sum, £20 million or about 5% of GDP at the time, to compensate/bribe the slaveowners into accepting the deal. In inflation-adjusted terms this is about £2.5 billion today (2025), but relative to GDP the British spent the equivalent of about $170 billion to free the slaves, a very large expenditure.

Indeed, the expenditure was so large that the money was borrowed and the final payments on the debt were not made until 2015. When in 2015 a tweet from the British Treasury revealed this surprising fact, there was a paroxysm of outrage as if slaveholders were still being paid off. I see the compensation in much more positive terms.

Of course, in an ideal world, compensation would have been paid to the slaves, not the slaveowners. Every man has a property in his own person and it was the slaves who had had their property stolen. In an ideal world, however, slavery would never have happened. Thus, the question the British abolitionists faced is not what happens in an ideal world but how do we get from where we are to a better world? Compensating the slaveowners was the only practical and peaceful way to get to a better world. As the great abolitionist William Wilberforce said on his deathbed “Thank God that I should have lived to witness a day in which England is willing to give twenty millions sterling for the abolition of slavery!”

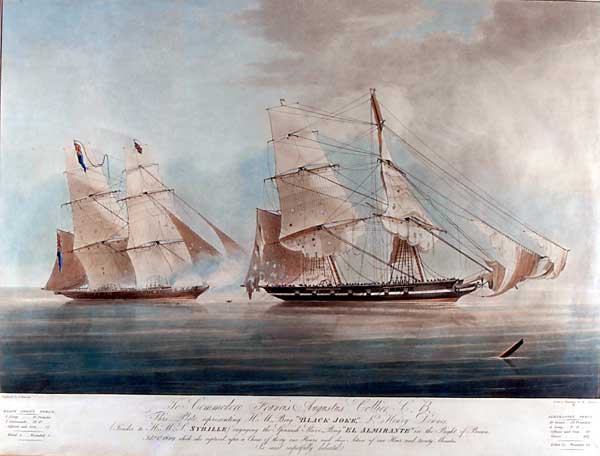

The 1833 Slavery Abolition Act was preceded by the 1807 Slave Trade Act, which had banned trade in slaves. In an excellent new paper, The long campaign: Britain’s fight to end the slave trade, economic historians Yi Jie Gwee and Hui Ren Tan assemble new archival data to assess how the Royal Navy’s anti-slave-trade patrols expanded over time, how effective they were at curtailing the trade, the influence of supply-side enforcement versus demand-side changes on ending the trade, and why Britain persisted with this costly campaign.

Britain’s naval suppression campaign began on a modest scale but grew in strength throughout the 19th century, peaking in the late 1840s to early 1850s when over 14% of the entire Royal Navy fleet was deployed to anti-slavery patrols. The British patrols captured some 1,600 ships and freed some 150,000 people destined for slavery, but they were at best only modestly successful at reducing the slave trade. The big impact came when Brazil, the largest remaining market for enslaved labor (by the mid-19th century, nearly 80% of trans-Atlantic slave voyages sailed under Brazilian or Portuguese flags), enacted its anti-slave-trade law in 1850. Britain’s campaign was not without influence on the demand side, however: passage of the Aberdeen Act in 1845 allowed the Royal Navy to seize Brazilian slave ships, which put pressure on Brazil and helped spur the 1850 law.

The suppression patrols were expensive (consuming ships, men, and funds), and Britain derived no direct economic benefit from them. Yet, even during the Napoleonic Wars, the Opium Wars, and the Crimean War, the Royal Navy continued to station ships, even high-tech steam ships, off West Africa to catch slavers. Due to the expense, the patrols were controversial and there were attempts to end them. Gwee and Tan look at the votes on ending the patrols and find that ideology was the dominant factor explaining support for them. That is, a principled opposition to the slave trade and a belief in the moral cause of abolition kept Britain in the war against slavery even at considerable expense.

Ordinarily, I teach politics without romance and look for interest as an explanation of political action. I don’t doubt that doing good and doing well were correlated, even during abolition. But I also agree with Gwee and Tan that the British war on slavery was primarily driven by ideology and moral principle, as both the compensation plan and the support of the anti-slavery patrols attest.

British taxpayers shouldered an enormous military and financial burden to eliminate slavery, reflecting a generosity of spirit and a sincere attempt to address a moral wrong—an act of atonement that stands as one of the most unusual and significant in history.