The MR Podcast: Debt!

On The Marginal Revolution Podcast this week, Tyler and I discuss the US debt. This is our final podcast of the year. Here’s one bit:

TABARROK: I do agree that it is puzzling that the interest rate on bonds is so low. Hanno Lustig and his co-authors have an interesting paper on this. They point out that not only is it the case that we have all of this debt with no plans to pay it, as far as we can tell right now, but the debt is not a very good asset in the sense that when will the debt be paid? If it is going to be paid, it’s going to be paid when the times are good. That means that you’re being paid when GDP is higher and the marginal utility of money is low.

When is the debt not paid? When does it get bigger? It means when the economy is doing poorly. The debt as an asset has the opposite kind of structure than you would want. It’s not like gold, which arguably goes down in good times and goes up in bad times. You get some nice covariance to even out your portfolio. The debt as an asset is positively correlated with good times, and that’s bad. You should expect the interest rates to be much, much higher than they actually are.

COWEN: The easy out there is just to say it’s always going to be paid. Let me give you a way of reconceptualizing the problem. The Hanno Lustig paper, which is called “US Public Debt Valuation Puzzle,” like a lot of work on debt, it focuses on flows. There’s the rate of interest, there’s government spending. If you look at stocks, look at the stock of wealth in the United States. A common estimate from the past was wealth is six to eight times higher than GDP. That’s a little misleading. How do you value all the wealth? How liquid is it?

Still, we all know there’s a lot more wealth than GDP. If your economy stays at peace, if anything, that ratio rises. You build things, they’re pretty durable. None of it is destroyed by bombs. We’re just headed to having more and more wealth. If you take, say, 100% debt-to-GDP ratio, and you think wealth is six to eight times higher, what’s our debt-to-wealth ratio? Well, it’s going to depend what kind of wealth, how liquid, blah, blah, blah. Let’s say it’s like 20%. Let’s say you had a debt ratio of 20% to your wealth at some point in the history of your mortgage. I bet you did. You weren’t worried. Why should the US be worried?

TABARROK: The US is a much longer-lived entity, presumably, than I am.

COWEN: That’s right. You could have 200% debt-to-GDP ratio. In terms of your debt-to-wealth ratio, again, it’s somewhat arbitrary, but say it’s 40% to 50% that might be on the high side. It’s not pleasant, but I’ve been in that situation with mortgages.
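Tyler’s back-of-the-envelope numbers are easy to check. Here is a minimal sketch in Python, assuming only the wealth-to-GDP multiples of six to eight quoted above; the function name is illustrative. The raw ratios come out lower than the 20% and 40% to 50% figures in the conversation, which fits his caveat that not all wealth is liquid or easy to value.

```python
def debt_to_wealth(debt_to_gdp: float, wealth_to_gdp: float) -> float:
    """Debt as a share of wealth, with both inputs given as multiples of GDP."""
    return debt_to_gdp / wealth_to_gdp

for debt_to_gdp in (1.0, 2.0):          # 100% and 200% debt-to-GDP
    for wealth_to_gdp in (6.0, 8.0):    # wealth at six or eight times GDP
        ratio = debt_to_wealth(debt_to_gdp, wealth_to_gdp)
        print(f"debt/GDP {debt_to_gdp:.0%}, wealth/GDP {wealth_to_gdp:.0f}x "
              f"-> debt/wealth {ratio:.1%}")
```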

Here’s the episode. Subscribe now to take a small step toward a much better world: Apple Podcasts | Spotify | YouTube.

The Dells add to Trump Accounts

I wrote that Trump Accounts Are A Big Deal. These accounts give U.S. citizens born between January 1, 2025, and December 31, 2028, $1,000 invested in a low-cost, diversified U.S. stock index fund. Well, the accounts just got bigger. Michael and Susan Dell are donating $6.25 billion to seed accounts with $250 for children born before Jan. 2025, up to ten years of age:

The Dells have committed to seed Trump accounts with $250 for children who are 10 or under who were born before Jan. 1, 2025. According to Invest America, the pledged funds will cover 25 million children age 10 and under in ZIP codes with a median income of $150,000 or less.

“We want to help the children that weren’t part of the government program,” Dell said.
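The numbers line up. A quick check in Python, using only the figures quoted above (the variable names are mine):

```python
pledge_dollars = 6_250_000_000   # the Dells' pledge: $6.25 billion
seed_per_child = 250             # dollars seeded per account

children_covered = pledge_dollars // seed_per_child
print(f"{children_covered:,} children")  # 25,000,000 children
```

That matches the 25 million children cited by Invest America.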

The People’s Republic of Santa Monica

Here’s a video from real estate investor and YouTuber Graham Stephan. He’s explaining (starting at 7:23) why he is selling a home in Santa Monica instead of renting it out–it’s the rent control laws, of course. The laws are strongly biased against landlords. Perhaps landlords should be a protected class.

Everything he says about the law, by the way, is accurate. I was initially skeptical (as was Google Gemini) that homes had to be rented unfurnished. Why would that stupidity be a law? But no, it’s accurate. Apparently, the idea is to make it more difficult to rent to temporary residents.

To preserve rental housing for permanent residents, all rental units must be rented unfurnished for an initial term of not less than one year and only to natural persons intending to use the unit as their primary residence.

Here’s Google Gemini summarizing, once I corrected it on the unfurnished home law.

The speaker’s understanding of the Santa Monica Rent Control and Just Cause laws is highly accurate in almost all respects:

Subject to Rent Control (7:50): Accurate. A non-primary residence built before 1979 is typically subject to the law.

Indefinite Tenancy (8:50): Accurate. Tenants gain “permanent right” to occupancy as eviction is limited to specific “just causes.”

Rent Increase Limit ($60/AGI) (8:13): Accurate. The annual rent increase is capped by a fixed, low dollar amount (the AGI), which closely aligns with the speaker’s figure.

No Eviction to Sell (8:57): Accurate. Selling the property is not a “just cause” for eviction.

Owner Move-In (OMI) Requirements (9:14): Accurate. Eviction for OMI requires paying substantial relocation fees, re-offering the unit if re-rented within two years, and prohibits eviction during the school year.

Furnished Home Prohibition (9:56): Accurate. Santa Monica requires rental units to be initially rented unfurnished to permanent residents.

Hat tip: Naveen Nvn who also says this video is worth watching.

Make Africa Healthy Again

In the late 1990s, South Africa’s President Thabo Mbeki decided that mainstream science had AIDS wrong. A small circle of “truth-tellers” convinced him that AIDS came from poverty and malnutrition, not a virus. He warned that anti-retroviral therapy (ART) was toxic and that pharmaceutical companies were poisoning Africans for profit.

His government stalled the rollout of ART. Health Minister Manto Tshabalala-Msimang pushed garlic, beetroot, and lemon as medicine. “Nutrition is the basis for good health,” she said, insisting that exercise and diet, not Western drugs, were the real treatment. She warned that antiretrovirals had side effects, including cancer, that the establishment was hiding. When scientists showed data, she waved it off: “No churning of figures after figures will deter me from telling the truth to the people of the country.”

The result was a public health disaster: hundreds of thousands of preventable deaths (see also here and here).

A reminder of what happens when authority trades evidence for ideology.

Against We

I propose a moratorium on the generalized first-person plural for all blog posts, social media comments, opinion writing, headline writers, for all of December. No “we,” “us,” or “our,” unless the “we” is made explicit.

No more “we’re living in a golden age,” “we need to talk about,” “we can’t stop talking about,” “we need to wise up.” They’re endless. “We’ve never seen numbers like this.” “We are not likely to forget.” “We need not mourn for the past.” “What exactly are we trying to fix?” “How are we raising our children?” “I hate that these are our choices.”

…“We” is what linguists call a deictic word. It has no meaning without context. It is a pointer. If I say “here,” it means nothing unless you can see where I am standing. If I say “we,” it means nothing unless you know who is standing next to me.

…in a headline like “Do we need to ban phones in schools?” the “we” is slippery. The linguist Norman Fairclough called this way of speaking to a mass audience as if they were close friends synthetic personalization. The “we” creates fake intimacy and fake equality.

Nietzsche thought a lot about how language is psychology. He would look askance at the “we” in posts like “should we ban ugly buildings?” He might ask: who are you that you do not put yourself in the role of the doer or the doing? Are you a lion or a lamb?

Perhaps you are simply a coward hiding in the herd, Martin Heidegger might say, with das Man. Don’t be an LLM. Be like Carol!

Hannah Arendt would say you’re dodging the blame. “Where all are guilty, nobody is.” Did you have a hand in the policy you are now critiquing? Own up to your role.

Perhaps you are confusing your privileged perch with the broader human condition. Roland Barthes called this ex-nomination. You don’t really want to admit that you are in a distinct pundit class, so you see your views as universal laws.

Adorno would say you are selling a fake membership with your “jargon of authenticity,” offering the reader membership in your club. As E. Nelson Bridwell in the old Mad Magazine had it: What do you mean We?

…If you are speaking for a very specific we, then say so. As Mark Twain is said to have said, “only presidents, editors, and people with tapeworms ought to have the right to use we.”

I could go on. But you get the drift. The bottom line is that “we” is squishy. I is the brave pronoun. I is the hardier pronoun. I is the—dare I say it—manly pronoun.

I agree.

Thanksgiving and the Lessons of Political Economy

Time to re-up my 2004 post on Thanksgiving and the lessons of political economy. Here it is with no indent:

It’s one of the ironies of American history that when the Pilgrims first arrived at Plymouth Rock they promptly set about creating a communist society. Of course, they were soon starving to death.

Fortunately, “after much debate of things,” Governor William Bradford ended corn collectivism, decreeing that each family should keep the corn that it produced. In one of the most insightful statements of political economy ever penned, Bradford described the results of the new and old systems.

[Ending corn collectivism] had very good success, for it made all hands very industrious, so as much more corn was planted than otherwise would have been by any means the Governor or any other could use, and saved him a great deal of trouble, and gave far better content. The women now went willingly into the field, and took their little ones with them to set corn; which before would allege weakness and inability; whom to have compelled would have been thought great tyranny and oppression.

The experience that was had in this common course and condition, tried sundry years and that amongst godly and sober men, may well evince the vanity of that conceit of Plato’s and other ancients applauded by some of later times; that the taking away of property and bringing in community into a commonwealth would make them happy and flourishing; as if they were wiser than God. For this community (so far as it was) was found to breed much confusion and discontent and retard much employment that would have been to their benefit and comfort. For the young men, that were most able and fit for labour and service, did repine that they should spend their time and strength to work for other men’s wives and children without any recompense. The strong, or man of parts, had no more in division of victuals and clothes than he that was weak and not able to do a quarter the other could; this was thought injustice. The aged and graver men to be ranked and equalized in labours and victuals, clothes, etc., with the meaner and younger sort, thought it some indignity and disrespect unto them. And for men’s wives to be commanded to do service for other men, as dressing their meat, washing their clothes, etc., they deemed it a kind of slavery, neither could many husbands well brook it. Upon the point all being to have alike, and all to do alike, they thought themselves in the like condition, and one as good as another; and so, if it did not cut off those relations that God hath set amongst men, yet it did at least much diminish and take off the mutual respects that should be preserved amongst them. And would have been worse if they had been men of another condition. Let none object this is men’s corruption, and nothing to the course itself. I answer, seeing all men have this corruption in them, God in His wisdom saw another course fitter for them.

Among Bradford’s many insights it’s amazing that he saw so clearly how collectivism failed not only as an economic system but that even among godly men “it did at least much diminish and take off the mutual respects that should be preserved amongst them.” And it shocks me to my core when he writes that to make the collectivist system work would have required “great tyranny and oppression.” Can you imagine how much pain the twentieth century could have avoided if Bradford’s insights had been more widely recognized?

Addendum: Today (2025) I would add only that the twenty-first century could avoid a lot of pain if Bradford’s insights were more widely recognized.

Why are Mormons so Libertarian?

Connor Hansen has a very good essay on Why Are Latter-day Saints So Libertarian? It serves both as an introduction to LDS theology and as an explanation for why that theology resonates with classical liberal ideas. I’ll summarize, with the caveat that I may get a few theological details wrong.

LDS metaphysics posits a universe governed by eternal law. God works with and within the laws of the universe–the same laws that humans can discover with reason and science.

This puts Latter-day Saint cosmology in conversation with the Enlightenment conviction that nature operates predictably and can be studied systematically. A theology where God organizes matter according to eternal law opens space for both scientific inquiry and mystical experience—the careful observation of natural law and the direct encounter with divine love operating through that law.

LDS epistemology is strikingly pro-reason. Even Ayn Rand would approve:

Latter-day Saint theology holds that human beings possess eternal “intelligence”—a term meaning something like personhood, consciousness, or rational capacity—that exists independent of creation. This intelligence is inherent, not granted, and it survives death.

Paired with this is the doctrine of agency: humans are genuinely free moral agents, not puppets or broken remnants after a fall. We’re capable of reason, judgment, and meaningful choice.

This creates an unusually optimistic anthropology. Human reason isn’t fundamentally corrupted or unreliable. It’s a divine gift and a core feature of identity. That lines up neatly with the Enlightenment belief that people can use reason to understand the world, improve their lives, and govern themselves effectively.

In ethics, agency is arguably the most libertarian strand in LDS theology. Free to choose is literally at the center of both divine nature and moral responsibility.

According to Latter-day Saint belief, God proposed a plan for human existence in which individuals would receive genuine agency—the ability to choose, make mistakes, learn, change, and ultimately progress toward becoming like God.

One figure, identified as Satan, rejected that plan and proposed an alternative: eliminate agency, guarantee universal salvation through compulsion, and claim God’s glory in the process.

The disagreement escalated into conflict. In Latter-day Saint scripture, Satan and those who followed him were cast out. The ones who chose agency—who chose freedom with its attendant risks—became mortal humans.

This matters politically because it means that in Latter-day Saint theology, coercion is not merely misguided policy or poor governance. It is literally Satanic. The negation of agency, forced conformity, compulsory salvation—these align with the devil’s rebellion against God’s plan.

Now add to this a 19th century belief in progress and abundance amped up by theology:

Humanity isn’t hopelessly corrupt. Instead, individuals are expected to learn, improve, innovate, and help build better societies.

But here’s where it gets radical: Latter-day Saints believe in the doctrine of eternal progression—the teaching that human beings can, over infinite time and through divine grace, become as God is. Not metaphorically. Actually.

If you believe humans possess infinite potential to rise, become, and progress eternally—literally without bound—then political systems that constrain, manage, or limit human aspiration start to feel spiritually suspect.

Finally, the actual history of the LDS church–expulsions from Missouri and Illinois, Joseph Smith’s violent death, the migration to the Great Basin, the creation of a quasi-independent society–is one of resistance to centralized government power. Limited government and local autonomy come to feel like lessons learned through lived experience. Likewise, the modern LDS welfare system is a working demonstration of how voluntary, covenant-based mutual aid can deliver real social support without coercion. This real-world model strengthens the intuition that social goods need not rely on compulsory state systems, and that voluntary institutions can often be more humane and effective.

To which I say, amen brother! Read the whole essay for more.

See also the book, , with an introduction by the excellent Mark Skousen.

Hat tip: Gale.

Side-Walking Problems

Local Law 11 requires owners of New York City’s 16,000-plus buildings over six stories to get a “close-up, hands-on” facade inspection every five years. Repair costs in NYC’s bureaucratic and labor-union driven system are very high, so the owners throw up “temporary” plywood sheds that often sit there for a decade. NYC now has some 400 miles of ugly sheds.

The ~9,000 sheds stretching nearly 400 miles have installation costs around $100–150 per linear foot and ongoing rents of about 5–6% of that per month, implying something like $150 million plus a year in shed rentals citywide.
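The $150 million figure can be sanity-checked from the numbers in that passage. A rough sketch in Python, assuming the full 400 miles is rented at a monthly rate of 5–6% of installation cost; the function and variable names are mine:

```python
FEET_PER_MILE = 5280

shed_miles = 400
linear_feet = shed_miles * FEET_PER_MILE   # 2,112,000 linear feet of shed

def annual_rent(install_cost_per_foot: float, monthly_rent_share: float) -> float:
    """Yearly rental bill when monthly rent is a share of installation cost."""
    install_cost = linear_feet * install_cost_per_foot
    return install_cost * monthly_rent_share * 12

low = annual_rent(100, 0.05)    # cheapest install, lowest rent share
high = annual_rent(150, 0.06)   # priciest install, highest rent share
print(f"${low / 1e6:.0f} million to ${high / 1e6:.0f} million per year")
```

On these assumptions the range runs from roughly $127 million to $228 million a year, so “$150 million plus” is, if anything, on the conservative side.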

Well, at last something is being done! The sheds are being made prettier! Six new designs, some with transparent roofs as in the rendering below, are now allowed. Looks nice in the picture. Will it look as nice in real life? Will it cost more? Almost certainly!

To be fair, City Hall is cracking down as well as doubling down: new laws cut shed permits from a year to three months and ratchet up fines for letting sheds linger. That’s a good idea. But the prettier sheds are the tell. Instead of reevaluating the law, doing a cost-benefit test or comparing with global standards, NYC wants to be less ugly.

How about using drones and AI to inspect buildings? Singapore requires inspections every 7 years but uses drones to do most of the work, followed by a hands-on check. How about investigating ways to cut the cost of repair? The best analysis of NYC’s facade program indicates something surprising–the problem isn’t just deteriorating old buildings but also poorly installed glass in new buildings, so more focus on installation quality is perhaps warranted. Moreover, are safety resources being optimized? Instead of looking up, New Yorkers might do better by looking down. Stray voltage continues to kill pets and shock residents. Manhole “incidents” including explosions happen in the thousands every year! What’s the best way to allocate a dollar to save a life in NYC?

Instead of dealing with the tough but serious problems, NYC has decided to put on the paint.

Big, Fat, Rich Insurance Companies

In my post, Horseshoe Theory: Trump and the Progressive Left, I said:

Trump’s political coalition isn’t policy-driven. It’s built on anger, grievance, and zero-sum thinking. With minor tweaks, there is no reason why such a coalition could not become even more leftist. Consider the grotesque canonization of Luigi Mangione, the (alleged) murderer of UnitedHealthcare CEO Brian Thompson. We already have a proposed CA ballot initiative named the Luigi Mangione Access to Health Care Act, a Luigi Mangione musical and comparisons of Mangione to Jesus. The anger is very Trumpian.

In that light, consider one of Trump’s recent postings:

THE ONLY HEALTHCARE I WILL SUPPORT OR APPROVE IS SENDING THE MONEY DIRECTLY BACK TO THE PEOPLE, WITH NOTHING GOING TO THE BIG, FAT, RICH INSURANCE COMPANIES, WHO HAVE MADE $TRILLIONS, AND RIPPED OFF AMERICA LONG ENOUGH.

Confidently Wrong

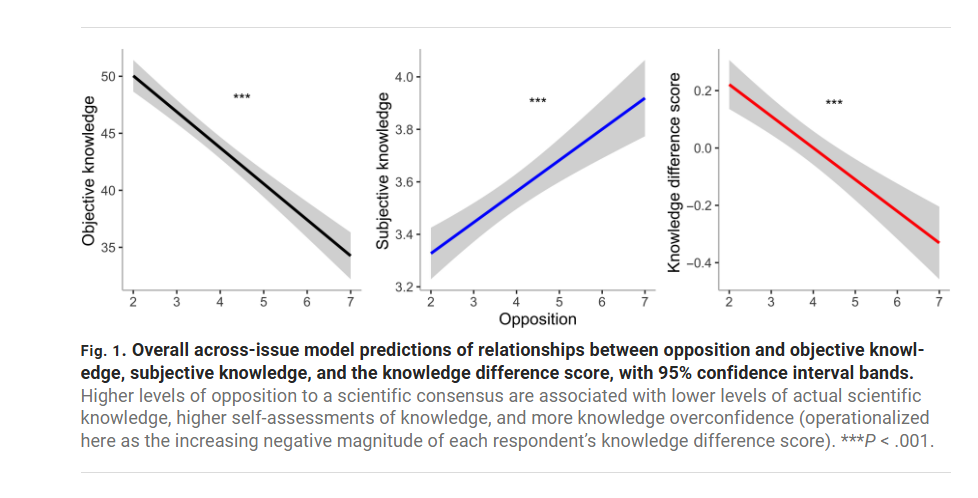

If you’re going to challenge a scientific consensus, you better know the material. Most of us, most of the time, don’t—so deferring to expert consensus is usually the rational strategy. Pushing against the consensus is fine; it’s often how progress happens. But doing it responsibly requires expertise. Yet in my experience the loudest anti-consensus voices—on vaccines, climate, macroeconomics, whatever—tend to be the least informed.

This isn’t just my anecdotal impression. A paper by Light, Fernbach, Geana, and Sloman shows that opposition to the consensus is positively correlated with knowledge overconfidence. Now you may wonder. Isn’t this circular? If someone claims the consensus view is wrong we can’t just say that proves they don’t know what they are talking about. Indeed. Thus Light, Fernbach, Geana and Sloman do something clever. They ask respondents a series of questions on uncontroversial scientific topics. Questions such as:

1. True or false? The center of the earth is very hot: True

2. True or false? The continents have been moving their location for millions of years and will continue to move: True

3. True or false? The oxygen we breathe comes from plants: True

4. True or false? Antibiotics kill viruses as well as bacteria: False

5. True or false? All insects have eight legs: False

6. True or false? All radioactivity is man made: False

7. True or false? Men and women normally have the same number of chromosomes: True

8. True or false? Lasers work by focusing sound waves: False

9. True or false? Almost all food energy for living organisms comes originally from sunlight: True

10. True or false? Electrons are smaller than atoms: True

The authors then correlate respondents’ scores on the objective (uncontroversial) knowledge with their opposition to the scientific consensus on topics like vaccination, nuclear power, and homeopathy. The result is striking: people who are most opposed to the consensus (7, the far right of the horizontal axis in the figure below) score lower on objective knowledge but express higher subjective confidence. In other words, anti-consensus respondents are the most confidently wrong—the gap between what they know and what they think they know is widest.
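To make the “gap” concrete, here is a minimal sketch in Python. The answer key comes from the ten questions listed above; the 0-to-1 self-rating scale, the function names, and the sample respondent are my own illustrative assumptions, not the paper’s actual measures or data.

```python
# Answer key for the ten true/false items listed above, in order.
ANSWER_KEY = [True, True, True, False, False, False, True, False, True, True]

def objective_score(answers: list[bool]) -> float:
    """Share of the ten items answered correctly (0.0 to 1.0)."""
    correct = sum(a == k for a, k in zip(answers, ANSWER_KEY))
    return correct / len(ANSWER_KEY)

def overconfidence_gap(answers: list[bool], self_rating: float) -> float:
    """Self-rated knowledge (hypothetical 0-1 scale) minus objective score."""
    return self_rating - objective_score(answers)

# Hypothetical respondent: wrong on items 4 and 6, self-rating of 0.9.
respondent = [True, True, True, True, False, True, True, False, True, True]
print(objective_score(respondent))
print(overconfidence_gap(respondent, 0.9))
```

A positive gap means the respondent thinks they know more than the quiz says they do. The paper’s finding is that this kind of gap is widest among those most opposed to the consensus.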

In a nice test, the authors show that the confidently wrong are not just braggadocios; they actually believe they know, because they are more willing to bet on the objective knowledge questions and, of course, they lose their shirts. A bet is a tax on bullshit.

The implications matter. The “knowledge deficit” approach (just give people more facts) breaks down when the least-informed are also the most certain they’re experts. The authors suggest leaning on social norms and respected community figures instead. My own experience points to the role of context: in a classroom, the direction of information flow is clearer, and confidently wrong pushback is rarer than on Twitter or the blog. I welcome questions in class—they’re usually great—but they work best when there’s at least a shared premise that the point is to learn.

Hat tip: Cremieux

The MR Podcast: Tariffs!

On The Marginal Revolution Podcast this week, Tyler and I discuss tariffs! Here’s one bit:

COWEN: I have a new best argument against tariffs. It’s very soft. I think it’s hard to prove, but it might actually be the very best argument against tariffs.

TABARROK: All right, let’s hear it.

COWEN: If you think about COVID policy, the wealthy nations did a bunch of things. Some of them were quite bad, and the poorer nations all copied that. They didn’t have to copy it, but there was some kind of contagion effect, or that seemed like the high-status thing to do. I believe with tariffs, something similar goes on. There’s a huge literature about retaliation. Of course, retaliation is a cost, that’s bad, but simply the copying effect that it was high status for the wealthy nations to have tariffs. They can afford it better, but then places like India had their own version of the same thing. That was just terrible for India at a much higher human cost than, say, it was for the United States. Again, it’s hard to trace or prove, but that I think could actually be the best argument against tariffs, simply that poorer countries will copy what the high-status nations are doing.

This is like Rob Henderson’s idea of luxury beliefs, beliefs which the elite can proffer at low cost but which have negative consequences when adopted by working and lower classes. Tariffs aren’t great for the US, but the US is so large and rich that we can handle it; if the idea is adopted by poorer nations, it will be much worse for them. I wish I had been clever enough to say this during the podcast but I never know what Tyler will say in advance.

Here’s another bit:

TABARROK: Here’s the question which the Trumpers or other people never really answer is, what are we going to have less of? Yes, we’ll have more investment. Let’s say we get another auto plant. The unemployment rate is 4%, so it’s not like we have a lot of free resources around. Most of the time, we’re in full equilibrium. If we have more auto plant workers and more cars being produced in the United States, we’re going to have less of something. I think it is incumbent on people who want tariffs in order to get more employment in manufacturing or something like that to say, “Well, what are we going to have less of?”

COWEN: The more sophisticated ones of them, I think, would say, well, the US is super high on the consumption scale, even relative to our very high per capita incomes. If we end up spending some of that consumption on boosting real wages, it’s actually a good investment, if only in political sanity, stability, fewer opioid deaths. It’s a very indirect chain of reasoning. I would say I’m skeptical. Again, it’s not a crazy argument. It’s a weird kind of industrial policy where you channel resources away from consumption into investment and higher wages. A lot of those plants are automated. They’re going to be automated much yet. It’s further stuff, maybe to other robotics companies or the AI companies. Again, I think that’s what they would say.

TABARROK: I don’t think they would say that.

COWEN: No, the more sophisticated ones.

TABARROK: Are there? I haven’t seen too many of those….

Here’s the episode. Subscribe now to take a small step toward a much better world: Apple Podcasts | Spotify | YouTube.

Illegal Immigrants Didn’t Break the Housing Market; Bad Policy Did

In an interview, JD Vance claimed:

[H]ousing is way too expensive….because we flooded the country with 30 million illegal immigrants who were taking houses that ought by right go to American citizens.

I noted on Twitter that this framing reeks of socialist thinking, national socialist to be precise. A demand for the state to designate a privileged class that gets special rights to scarce goods. Treating housing as a fixed stock to be allocated to a favored in-group while blaming an out-group for shortages is collectivist politics driven by grievance, not market reasoning. In short, grievance and entitlement, zero-sum thinking and central planning wrapped into one ugly bundle.

That criticism set people off. The first rebuttal was predictable: “Ha ha, the economist forgot about supply and demand!”—a miss, because my point wasn’t about the mechanics of house-price growth but about Vance’s rhetoric: the collectivism and the cheap politics of blaming outsiders. The second rebuttal was that “America belongs to Americans” so of course illegal immigrants shouldn’t be allowed to buy homes.

The second objection is amusing because who is harmed most when a government bans immigrants from buying homes or deports a chunk of potential buyers? American home sellers. The way such bans “work” is by preventing sellers from accepting the highest bid. In effect, these policies are a tax on sellers combined with a subsidy to a subset of buyers.

So bans on foreign buyers are really about taxing some Americans and subsidizing others. Moreover, although the economic logic of illegals pushing up demand is sound, the numbers don’t add up to much. First, there aren’t 30 million illegals; the best estimates are roughly 14 million. And second, illegals are obviously not the reason homes blow past a million dollars in places like San Francisco, San Jose, Washington, or New York! The effect of illegal immigrants on house prices exists but is small—the bigger factors are native population growth, rising incomes, zoning rules, and strict limits on new construction. Block illegal immigrants from buying homes and you will get a pause in price growth, but once demand from natives keeps rising against a capped supply, prices will climb back to where they were.

That gets to the deeper problem with Vance’s style of thinking. If “fixing” housing scarcity means blaming whichever group is politically convenient, you end up cycling through targets: illegal immigrants first, then legal immigrants (as Canada has done), then the children of immigrants, then wealthy buyers, then racial or religious minorities. Indeed, one wonders if the blame is the goal.

If you actually want to solve the problem of housing scarcity, stop the scapegoating and start supporting the disliked people who are actually working to reduce scarcity: the developers. Loosen zoning and cut the rules that choke what can be built. Redirect political energy away from trying to demolish imagined enemies and instead build, baby, build.

Wise Words Addendum (hat tip G. Scott Shand):

There is a cultural movement in the white working class to blame problems on society or the government, and that movement gains adherents by the day….We’ll get fired for tardiness, or for stealing merchandise and selling it on eBay, or for having a customer complain about the smell of alcohol on our breath, or for taking five thirty-minute restroom breaks per shift. We talk about the value of hard work but tell ourselves that the reason we’re not working is some perceived unfairness: Obama shut down the coal mines, or all the jobs went to the Chinese. These are the lies we tell ourselves to solve the cognitive dissonance—the broken connection between the world we see and the values we preach.

Why are US Clinical Trials so Expensive?

Dave Ricks, CEO of Eli Lilly, speaking on the excellent Cheeky Pint Podcast (hosted by John Collison, sometimes joined by Patrick as in this episode) had the clearest discussion of why US clinical trials are so expensive that I have read.

One point is obvious once you hear it: Sponsors must provide high-end care to trial participants–thus because U.S. health care is expensive, US clinical trials are expensive. Clinical trial costs are lower in other countries because health care costs are lower in other countries but a surprising consequence is that it’s also easier to recruit patients in other countries because sponsors can offer them care that’s clearly better than what they normally receive. In the US, baseline care is already so good, at least at major hospital centers where you want to run clinical trials, that it’s more difficult to recruit patients. Add in IRB friction and other recruitment problems, and U.S. trial costs climb fast.

Patrick

I looked at the numbers. So, apparently the median clinical trial enrollee now costs $40,000. The median US wage is $60,000, so we’re talking two thirds. Why and why couldn’t it be a 10th or a hundredth of what it is?

David (00:10:50):

Yeah, brilliant question and one we’ve spent a lot of time working on…“Why does a trial cost so much?” Well, we’re taking the sickest slice of the healthcare system that are costing the most. And we’re ingesting them. We’re taking them out of the healthcare system and putting them in a clinical trial. Typically we pay for all care. So we are literally running the healthcare system for those individuals and that is in some ways for control, because you want to have the best standard of care so your experiment is properly conducted and it’s not just left to the whims of hundreds of individual doctors and people in Ireland versus the US getting different background therapies. So you standardize that, that costs money because sort of leveling up a lot of things, but then also in some ways you’re paying a premium to both get the treating physicians and have great care to get the patient. We don’t offer them remuneration, but they get great care and inducement to be in the study because you’re subjecting yourself quite often, not all the case, but to something other than the standard of care, either placebo or this. Or, in more specialized care, often it’s standard care plus X where X could actually be doing harm, not good. So people have to go into that in a blinded way and I guess the consideration is you’ll get the best care.

Patrick (00:12:51):

Of the $40,000. How much of that should I look at as inducement and encouragement for the patient and how much should I look at it as the cost of doing things given the regulatory apparatus that exists?

David (00:13:02):

The patient part is the level up part and I would say 20, 30% of the cost of studies typically would be this. So you’re buying the best standard of care, you’re not getting something less. That’s medicine costs, you’re getting more testing, you’re getting more visits, and then there is a premium that goes to institutions, not usually to the physician, the institution to pay for the time of everybody involved in it plus something. We read a lot about it in the NIH cuts, the 60% Harvard markup or whatever. There’s something like that in all clinical trials too. Overhead coverage, whatnot. But it’s paying for things that aren’t in the trial.

Patrick (00:13:40):

US healthcare is famously the most expensive in the world. Yes. Do you run trials outside the US?

David (00:13:44):

Yeah, actually most. I mean we want to actually do more in the US. This is a problem I think for our country. Take cancer care where you think, okay, what’s the one thing the US system’s really good at? If I had cancer, I’d come to the US, that’s definitely true. But only 4% of patients who have cancer in the US are in clinical trials. Whereas in Spain and Australia it’s over 25%. And some of that is because they’ve optimized the system so it’s easier to run and then enroll, which I’d like to get to, people in the trials. But some of it is also that the background of care isn’t as good. So that level up inducement is better for the patient and the physician. Here, the standard’s pretty good, so people are like, “Do I want to do something where there’s extra visits and travel time?” There’s another problem in the US which is, we have really good standards of care but also quite different performing systems and we often want to place our trials in the best performing systems that are famous, like MD Anderson or the Brigham. And those are the most congested with trials and therefore they’re the slowest and most expensive. So there’s a bit of a competition for place that goes on as well.

But overall, I would say in our diabetes and cardiovascular trials, many, many more patients are in our trials outside the US than in and that really shouldn’t be other than cost of the system. And to some degree the tuning of the system, like I mentioned with Spain and Australia toward doing more clinical trials. For instance, here in the US, everywhere you get ethics clearance, we call it IRB. The US has a decentralized system, so you have to go to every system you’re doing a study in. Some countries like Australia have a single system, so you just have one stop and then the whole country is available to recruit those types of things.

Patrick (00:15:31):

You said you want to talk about enrollment?

David (00:15:32):

Yeah, yeah. It’s fascinating. So drug development time in the industry is about 10 years in the clinic, a little less right now. We’re running a little less than seven at Lilly, so that’s the optimization I spoke about. But actually, half of that seven is we have a protocol open, that means it’s an experiment we want to run. We have sites trained, they’re waiting for patients to walk in their door and to propose, “Would you like to be in the study?” But we don’t have enough people in the study. So you’re in the serial process, diffuse serial process, waiting for people to show up. You think, “Wow, that seems like we could do better than that. If Taylor Swift can sell at a concert in a few seconds, why can’t I fill an Alzheimer’s study? There seem to be lots of patients.” But that’s healthcare. It’s very tough. We’ve done some interesting things recently to work around that. One thing that’s an idea that partially works now is culling existing databases and contacting patients.

Patrick (00:16:27):

Proactive outreach.

See also Chertman and Teslo at IFP who have a lot of excellent material on clinical trial abundance.

Lots of other interesting material in this episode including how Eli Lilly Direct—driven largely by Zepbound—has quickly become a huge pharmacy. The direct-to-consumer model it represents could be highly productive as more drugs for preventing disease are developed. I am not as anti-PBM as Ricks and almost everyone in the industry are but I will leave that for another day.

Here is the Cheeky Pint Podcast main page.

Waymo

Waymo now does highways in the Bay Area.

Expanding our service territory in the Bay Area and introducing freeways is built on real-world performance and millions of miles logged on freeways, skillfully handling highway dynamics with our employees and guests in Phoenix, San Francisco, and Los Angeles. This experience, reinforced by comprehensive testing as well as extensive operational preparation, supports the delivery of a safe and reliable service.

The future is happening fast.

UCSD Faculty Sound Alarm on Declining Student Skills

The UC San Diego Senate Report on Admissions documents a sharp decline in students’ math and reading skills—a warning that has been sounded before, but this time it’s coming from within the building.

At our campus, the picture is truly troubling. Between 2020 and 2025, the number of freshmen whose math placement exam results indicate they do not meet middle school standards grew nearly thirtyfold, despite almost all of these students having taken beyond the minimum UCOP required math curriculum, and many with high grades. In the 2025 incoming class, this group constitutes roughly one-eighth of our entire entering cohort. A similarly large share of students must take additional writing courses to reach the level expected of high school graduates, though this is a figure that has not varied much over the same time span.

Moreover, weaknesses in math and language tend to be more related in recent years. In 2024, two out of five students with severe deficiencies in math also required remedial writing instruction. Conversely, one in four students with inadequate writing skills also needed additional math preparation.

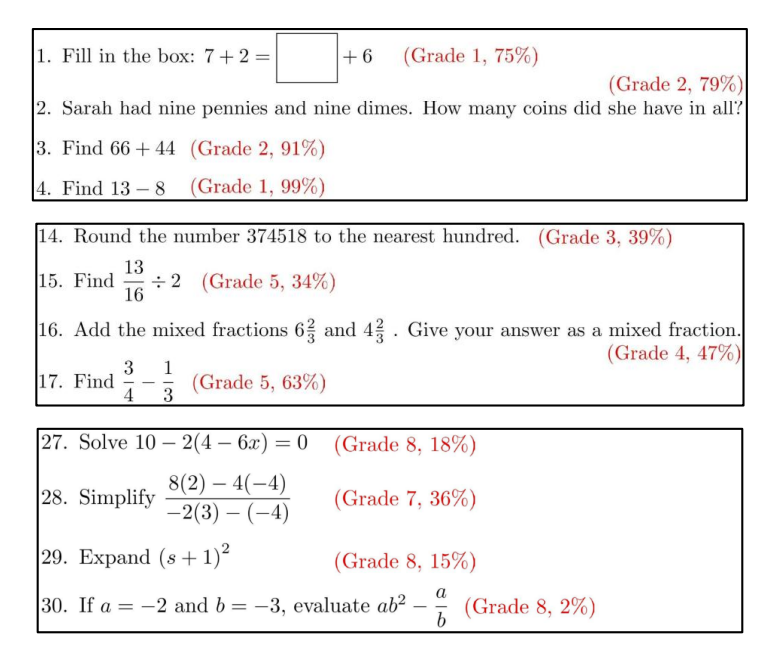

The math department created a remedial course, only to be so stunned by how little the students knew that the class had to be redesigned to cover material normally taught in grades 1 through 8.

Alarmingly, the instructors running the 2023-2024 Math 2 courses observed a marked change in the skill gaps compared to prior years. While Math 2 was designed in 2016 to remediate missing high school math knowledge, now most students had knowledge gaps that went back much further, to middle and even elementary school. To address the large number of underprepared students, the Mathematics Department redesigned Math 2 for Fall 2024 to focus entirely on elementary and middle school Common Core math subjects (grades 1-8), and introduced a new course, Math 3B, so as to cover missing high-school common core math subjects (Algebra I, Geometry, Algebra II or Math I, II, III; grades 9-11).

In Fall 2024, the numbers of students placing into Math 2 and 3B surged further, with over 900 students in the combined Math 2 and 3B population, representing an alarming 12.5% of the incoming first-year class (compared to under 1% of the first-year students testing into these courses prior to 2021).

(The figure gives some examples of remedial class material and the percentage of remedial students getting the answers correct.)

The report attributes the decline to several factors: the pandemic, the elimination of standardized testing—which has forced UCSD to rely on increasingly inflated and therefore useless high school grades—and political pressure from state lawmakers to admit more “low-income students and students from underrepresented minority groups.”

…This situation goes to the heart of the present conundrum: in order to holistically admit a diverse and representative class, we need to admit students who may be at a higher risk of not succeeding (e.g. with lower retention rates, higher DFW rates, and longer time-to-degree).

The report exposes a hard truth: expanding access without preserving standards risks the very idea of a higher education. Can the cultivation of excellence survive an egalitarian world?