Category: Science

Voices From 2099

Great little video. Winner of the Foresight Institute’s $10,000 prize for Existential Hope. Go Bryan Johnson!

My Conversation with Alison Gopnik

Here is the audio, video, and transcript. Here is part of the episode summary:

Tyler and Alison cover how children systematically experiment on the world and what study she’d run with $100 million, why babies are more conscious than adults and what consciousness even means, episodic memory and aphantasia, whether Freud got anything right about childhood and what’s held up best from Piaget, how we should teach young children versus school-age kids, how AI should change K-12 education and Gopnik’s case that it’s a cultural technology rather than intelligence, whether the enterprise of twin studies makes sense and why she sees nature versus nurture as the wrong framework entirely, autism and ADHD as diagnostic categories, whether the success of her siblings belies her skepticism about genetic inheritance, her new project on the economics and philosophy of caregiving, and more.

Excerpt:

COWEN: If it’s something like height, where there is clearly an environmental component, especially if the child is not well-fed, but it seems perfectly fine to say above a certain dietary level, it’s mostly genetic, right? No one says that’s ambiguous, and more and more traits will become like that.

GOPNIK: Well, first of all, I’m not sure that’s true. To a striking degree, the traits that people have looked at, like educational attainment, for example — we haven’t found consistent relationships to genetics. I think the reason for that is exactly because there’s this very complicated developmental process that goes from the genetics to the outcome.

Even if you think about fruit flies, for example. I have some geneticist colleagues who work on this — fruit fly sex determination. You’d think, “Well, that has to be just the result of genes.” It turns out that there’s this long developmental — long by fruit fly standards — developmental process that goes from the genetics to the proteins to the morphology, and there’s lots of possibility of variation throughout that. I think that hasn’t turned out to be a scientifically helpful way of understanding what’s going on in development.

The other thing, of course, is, from my perspective, the common features of, say, what kids are doing are much more interesting than the variations. What I really want to know is how is it that anyone could have a brain that enables them to accomplish these amazing capacities? Thinking about, is this child smarter than the other one, given how unbelievably smart all of them are to begin with, I just think it’s not an interesting question.

COWEN: But say, what you would call the lay belief that smarter parents give birth to smarter children, at least above subsistence — surely you would accept that, right?

GOPNIK: Again, what does smarter mean?

COWEN: How you would do on an IQ test.

GOPNIK: What does genetics mean? It’s interesting, Tyler, that IQ tests, for example — they have their own scholarly and scientific universe, but they’re not something that we would teach about or think about in a developmental psychology class, and there’s a good principled reason for that. The good principled reason — this has come up a lot in AI recently. There’s this idea in AI of artificial general intelligence, and that is assuming that there’s something called general intelligence.

Again, I think, a lot like consciousness or life, it’s one of these lay ideas about how people work. When you actually look at children, for example, what you see is not just that there isn’t a single thing that’s general intelligence. You actually see different cognitive capacities that are in tension with one another. You mentioned one about the scientist who’s trying to think of some new idea versus the scientist who’s looking at a more specific idea, right? A classic example of this tension that I’ve talked about and studied is in computer sciences: exploration versus exploitation.

What do you count as IQ? In fact, most of what IQ is about is how well do you do in school? How well do you do on school tests? That’s actually, in many respects, in tension with how good are you at exploring the world around you? The kinds of things that you need to do to have particular goals, to accomplish them, the kinds of things that we emphasize a lot, say, in a school context, are actually in tension. This gets back to the point about babies being more conscious than we are — are actually in tension with the kinds of things that will let you explore.

Think about the Bayesian example. If you have a flatter prior, and you pay more attention to evidence, you are probably not going to do as well on an IQ test…

COWEN: There’s you — you’re tenured at Berkeley, you’re famous. There’s Blake, The Definitive Warhol Biography, and Adam, who’s amazing, writes for the New Yorker, and you don’t believe inheritability and IQ being very concrete things? I just don’t get it. I think you’re in denial.

GOPNIK: Actually, I think that example is maybe partly why I don’t believe in that. In fact, what I do believe is that the effect of caregiving is to increase variability, is to increase variation. Our family, our care — there were six of us in 11 years. My parents were graduate students, and even before they were graduate students, they were that great generation of immigrant kids.

We had this combination of a great deal of warmth, a great deal of love, an enormous amount of stuff that was around us — books and ideas. We got taken to the Guggenheim, when Adam was three and I was four, for the opening of the Guggenheim. We both remember this vividly. But we were also completely free. We were just in regular public schools. As was true in those days, in general, we came home after school, and we basically did whatever it was that we wanted. I was involved. The kids were taking care of each other a lot of the time.

The result is that you get a lot of variation. It’s an interesting example in our family where we have six kids who presumably all have somewhat similar genetics, all in that 11 years grow up in the same context, and they come out completely differently. They come out with really different strengths, really different weaknesses, things that they’re good at, things that they’re not good at. Even if you think about what Blake and Adam and I are like as thinkers, we’re all foxes instead of hedgehogs. We’re all people who have done lots of different things and thought about lots of different things.

So, my view is that what nurture will do is let you have variability. That’s the thing that, in a sense, is heritable. That’s contradictory, the idea that what’s heritable is the standard deviation instead of the mean, but that’s my view about that. I think my childhood did have the effect of making me suspicious of those simple nature-nurture oppositions.

Here are the books of Alison Gopnik.

Robot Lab

Google’s Deep Mind Lab is going to build a materials science lab in the UK, manned by robots and humans:

To help turbocharge scientific discovery, we will establish Google DeepMind’s first automated laboratory in the UK in 2026, specifically focused on materials science research. A multidisciplinary team of researchers will oversee research in the lab, which will be built from the ground up to be fully integrated with Gemini. By directing world-class robotics to synthesize and characterize hundreds of materials per day, the team intends to significantly shorten the timeline for identifying transformative new materials.

This is a very big deal. Gemini won’t just read papers. It will design the experiments, run the experiments, learn from the successes and failures and then recursively improve. It’s an attempt to learn the game of material science in the same way AlphaGo learned the game of Go.

China fertility facts of the day

A Chinese billionaire was seeking parental rights to at least four unborn children, and the court’s additional research showed that he had already fathered or was in the process of fathering at least eight more—all through surrogates.

When Pellman called Xu Bo in for a confidential hearing in the summer of 2023, he never entered the courtroom, according to people who attended the hearing. The maker of fantasy videogames lived in China and appeared via video, speaking through an interpreter. He said he hoped to have 20 or so U.S.-born children through surrogacy—boys, because they’re superior to girls—to one day take over his business.

Several of his kids were being raised by nannies in nearby Irvine as they awaited paperwork to travel to China. He hadn’t yet met them, he told the judge, because work had been busy…

Some Chinese parents, inspired by Elon Musk’s 14 known children, pay millions in surrogacy fees to hire women in the U.S. to help them build families of jaw-dropping size. Xu calls himself “China’s first father” and is known in China as a vocal critic of feminism. On social media, his company said he has more than 100 children born through surrogacy in the U.S.

Another wealthy Chinese executive, Wang Huiwu, hired U.S. models and others as egg donors to have 10 girls, with the aim of one day marrying them off to powerful men, according to people close to the executive’s education company.

…“Elon Musk is becoming a role model now,” said Zhang. An increasing number of “crazy rich” clients are commissioning dozens, or even hundreds, of U.S.-born babies with the goal of “forging an unstoppable family dynasty,” he said.

Here is the full WSJ article.

Addendum: In the comments Gilligan writes: “On the positive side, we will be able to tax the heirs’ world wide income for the rest of their natural lives.”

The Tech Labs initiative

…the National Science Foundation’s Technology, Innovation and Partnerships directorate at long last announced its Tech Labs initiative, which is intended to provide $10-$50 million a year to independent research teams (and yes, that is a per team dollar amount, not the initiative’s entire budget).

The intent is to provide “entrepreneurial teams of proven scientists the freedom and flexibility to pursue breakthrough science at breakneck speed, without needing to frequently stop and apply for additional grant funding with each new idea or development.”

The idea has many precursors, including all of the independent research labs and organizations going back several decades, the recent burst of philanthropy for new institutes and organizations, the idea of focused research organizations (here’s a good piece from today), Caleb Watney’s excellent piece proposing X-Labs, and Jeffrey Tsao’s proposal for Bell Labs X.

But this is the first time the federal government has gotten into the business of actively pushing for institutional diversity and for scientific funding at the team level.

Huge, if it works.

Here is more from Stuart Buck. Here is Caleb Watney in the WSJ.

A Ukrainian mathematician requests mathematical assistance

…an expert in general relativity or a mathematical physicist familiar with PPN methods, weak-field gravitational tests, and variational principles…

For the two technical appendices (ψ-preconditioning and χ-flattening), I would need:

• a quantum algorithms researcher (QSP/QSVT/QLSA/QAE) to assess the correctness of the operator transformations and the potential complexity gains;

• a quantum control or pulse-level compilation engineer (pulse-level, virtual-Z) to evaluate whether the phase-drift compensation algorithm can be implemented realistically on actual hardware.

Please email me if you think you might be of assistance.

*The Age of Disclosure*

I have now watched the whole movie. The first twenty-eight minutes are truly excellent, the best statement of the case for taking UAPs seriously. It is impressive how they lined up dozens of serious figures, from the military and intelligence services, willing to insist that UAPs are a real phenomenon, supported by multiple sources of evidence. Not sensor errors, not flocks of birds, and not mistakes in interpreting images. This part of the debate now should be considered closed. It is also amazing that Marco Rubio has such a large presence in the film, as of course he is now America’s Secretary of State.

You will note this earlier part of the movie does not insist that UAPs are aliens.

After that point, the film runs a lot of risks. About one-third of what is left is responsible, along the lines of the first twenty-eight minutes. But the other two-thirds or so consists of quite unsupported claims about alien beings, bodies discovered, reverse engineering, quantum bubbles, and so on. You will not find dozens of respected, credentialed, obviously non-crazy sources confirming any of those propositions. The presentation also becomes too conspiratorial. Still, part of the latter part of the movie remains good and responsible.

Overall I can recommend this as an informative and sometimes revelatory compendium of information. It does not have anything fundamentally new, but brings together the evidence in the aggregate better than any other source I know,and it assembles the best and most credible set of testifiers. And then there are the irresponsible bits, which you can either ignore (though still think about), or use as a reason to dismiss the entire film. I will do the former.

My very fun Conversation with Blake Scholl

Here is the audio, video, and transcript. This was at a live event (the excellent Roots of Progress conference), so it is only about forty minutes, shorter than usual. Here is the episode summary:

Blake Scholl is one of the leading figures working to bring back civilian supersonic flight. As the founder and CEO of Boom Supersonic, he’s building a new generation of supersonic aircraft and pushing for the policies needed to make commercial supersonic travel viable again. But he’s equally as impressive as someone who thinks systematically about improving dysfunction—whether it’s airport design, traffic congestion, or defense procurement—and sees creative solutions to problems everyone else has learned to accept.

Tyler and Blake discuss why airport terminals should be underground, why every road needs a toll, what’s wrong with how we board planes, the contrasting cultures of Amazon and Groupon, why Concorde and Apollo were impressive tech demos but terrible products, what Ayn Rand understood about supersonic transport in 1957, what’s wrong with aerospace manufacturing, his heuristic when confronting evident stupidity, his technique for mastering new domains, how LLMs are revolutionizing regulatory paperwork, and much more.

Excerpt:

COWEN: There’s plenty about Boom online and in your interviews, so I’d like to take some different tacks here. This general notion of having things move more quickly, I’m a big fan of that. Do you have a plan for how we could make moving through an airport happen more quickly? You’re in charge. You’re the dictator. You don’t have to worry about bureaucratic obstacles. You just do it.

SCHOLL: I think about this in the shower like every day. There is a much better airport design that, as best I can tell, has never been built. Here’s the idea: You should put the terminals underground. Airside is above ground. Terminals are below ground. Imagine a design with two runways. There’s an arrival runway, departure runway. Traffic flows from arrival runway to departure runway. You don’t need tugs. You can delete a whole bunch of airport infrastructure.

Imagine you pull into a gate. The jetway is actually an escalator that comes up from underneath the ground. Then you pull forward, so you can delete a whole bunch of claptrap that is just unnecessary. The terminal underground should have skylights so it can still be incredibly beautiful. If you model fundamentally the thing on a crossbar switch, there are a whole bunch of insights for how to make it radically more efficient. Sorry. This is a blog post I want to write one day. Actually, it’s an airport I want to build.

And;

COWEN: I’m at the United desk. I have some kind of question. There’s only two or three people in front of me, but it takes forever. I notice they’re just talking back and forth to the assistant. They’re discussing the weather or the future prospects for progress, total factor productivity. I don’t know. I’m frustrated. How can we make that process faster? What’s going wrong there?

SCHOLL: The thing I most don’t understand is why it requires so many keystrokes to check into a hotel room. What are they writing?

What are they writing?

Confidently Wrong

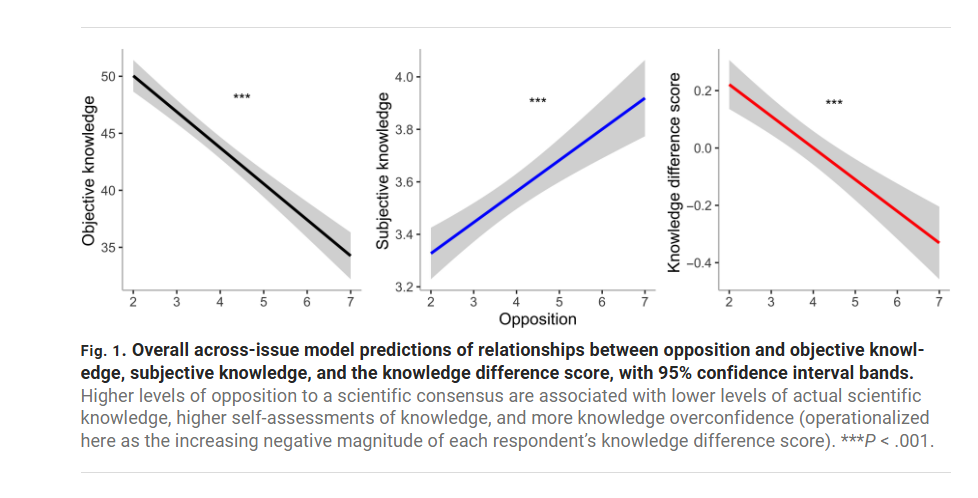

If you’re going to challenge a scientific consensus, you better know the material. Most of us, most of the time, don’t—so deferring to expert consensus is usually the rational strategy. Pushing against the consensus is fine; it’s often how progress happens. But doing it responsibly requires expertise. Yet in my experience the loudest anti-consensus voices—on vaccines, climate, macroeconomics, whatever—tend to be the least informed.

This isn’t just my anecdotal impression. A paper by Light, Fernbach, Geana, and Sloman shows that opposition to the consensus is positively correlated with knowledge overconfidence. Now you may wonder. Isn’t this circular? If someone claims the consensus view is wrong we can’t just say that proves they don’t know what they are talking about. Indeed. Thus Light, Fernbach, Geana and Sloman do something clever. They ask respondents a series of questions on uncontroversial scientific topics. Questions such as:

1. True or false? The center of the earth is very hot: True

2. True or false? The continents have been moving their location for millions of years and will continue to move. True

3. True or false? The oxygen we breathe comes from plants: True

4. True or false? Antibiotics kills viruses as well as bacteria: False

5. True or false? All insects have eight legs: False

6. True or false? All radioactivity is man made: False

7. True or false? Men and women normally have the same number of chromosomes: True

8. True or false? Lasers work by focusing sound waves: False

9. True or false? Almost all food energy for living organisms comes originally from sunlight: True

10. True or false? Electrons are smaller than atoms: True

The authors then correlate respondents’ scores on the objective (uncontroversial) knowledge with their opposition to the scientific consensus on topics like vaccination, nuclear power, and homeopathy. The result is striking: people who are most opposed to the consensus (7, the far right of the horizontal axis in the figure below) score lower on objective knowledge but express higher subjective confidence. In other words, anti-consensus respondents are the most confidently wrong—the gap between what they know and what they think they know is widest.

In a nice test the authors show that the confidently wrong are not just braggadocios they actually believe they know because they are more willing to bet on the objective knowledge questions and, of course, they lose their shirts. A bet is a tax on bullshit.

The implications matter. The “knowledge deficit” approach (just give people more fact) breaks down when the least-informed are also the most certain they’re experts. The authors suggest leaning on social norms and respected community figures instead. My own experience points to the role of context: in a classroom, the direction of information flow is clearer, and confidently wrong pushback is rarer than on Twitter or the blog. I welcome questions in class—they’re usually great—but they work best when there’s at least a shared premise that the point is to learn.

Hat tip: Cremieux

Matt Yglesias on aphantasia

What I tend to approach from the outside are unpleasant experiences. Life is a mix of ups and downs, but I’m not really haunted by sad experiences or disturbing things that I’ve seen. I can tell you about the time I found a dead body in the alley and called the authorities to report it, and my recollection is it was pretty gross, but I certainly don’t have any pictures of that in my iPhone.

Sometimes I see something that causes me to update my views of the world. But when I saw the body, I was already aware, factually, that drug overdose deaths were becoming common in D.C., so I felt that I hadn’t really learned anything new. At the time I was victimized by crime, the amount of violent crime in this city had been on a steady downward trend for a very long time, so it didn’t cause me to change my views at all. Several years later, that downward trend started to reverse and, after a few years of gradual growth, there were some sharp jumps, and then I got worried and started calling for policy changes.

And I think this is a strength of the aphantasic worldview. Something bad happened to me that was statistically anomalous, so I didn’t change my views. When the broader situation changed, I did change my views, even though actually nothing bad happened to me personally. And that’s because the right way to assess crime trends is to try to get a statistically valid view of the situation, not overindex on the happenstance of your life.

Here is the full essay. Here is Hollis Robbins on related issues.

There is no great stagnation (not any more — really!)

A remote-controlled robot the size of a grain of sand can swim through blood vessels to deliver drugs before dissolving into the body. The technology could allow doctors to administer small amounts of drugs to specific sites, avoiding the toxic side effects of body-wide therapies.

…The system has yet to be trialled in people, but it shows promise because it works in a roughly human-sized body, and because all its components have already been shown to be biocompatible, says Bradley Nelson, a mechanical engineer at Swiss Federal Institute of Technology (ETH) in Zurich, who co-led the work.

We will see, but it is wonderful that such an idea is even in the running. Here is the full article, via A.J.

UCSD Faculty Sound Alarm on Declining Student Skills

The UC San Diego Senate Report on Admissions documents a sharp decline in students’ math and reading skills—a warning that has been sounded before, but this time it’s coming from within the building.

At our campus, the picture is truly troubling. Between 2020 and 2025, the number of freshmen whose math placement exam results indicate they do not meet middle school standards grew nearly thirtyfold, despite almost all of these students having taken beyond the minimum UCOP required math curriculum, and many with high grades. In the 2025 incoming class, this group constitutes roughly one-eighth of our entire entering cohort. A similarly large share of students must take additional writing courses to reach the level expected of high school graduates, though this is a figure that has not varied much over the same time span.

Moreover, weaknesses in math and language tend to be more related in recent years. In 2024, two out of five students with severe deficiencies in math also required remedial writing instruction. Conversely, one in four students with inadequate writing skills also needed additional math preparation.

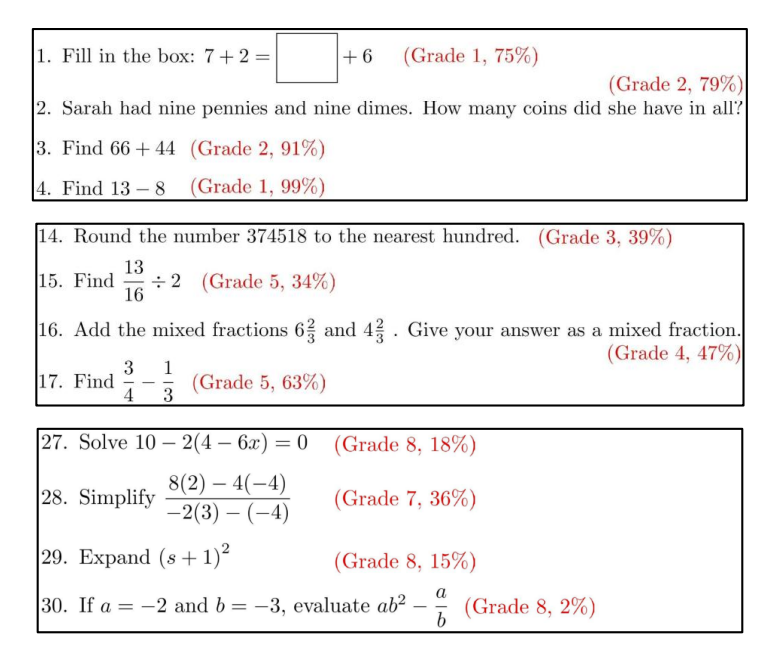

The math department created a remedial course, only to be so stunned by how little the students knew that the class had to be redesigned to cover material normally taught in grades 1 through 8.

Alarmingly, the instructors running the 2023-2024 Math 2 courses observed a marked change in the skill gaps compared to prior years. While Math 2 was designed in 2016 to remediate missing high school math knowledge, now most students had knowledge gaps that went back much further, to middle and even elementary school. To address the large number of underprepared students, the Mathematics Department redesigned Math 2 for Fall 2024 to focus entirely on elementary and middle school Common Core math subjects (grades 1-8), and introduced a new course, Math 3B, so as to cover missing high-school common core math subjects (Algebra I, Geometry, Algebra II or Math I, II, III; grades 9-11).

In Fall 2024, the numbers of students placing into Math 2 and 3B surged further, with over 900 students in the combined Math 2 and 3B population, representing an alarming 12.5% of the incoming first-year class (compared to under 1% of the first-year students testing into these courses prior to 2021)

(The figure gives some examples of remedial class material and the percentage of remedial students getting the answers correct.)

The report attributes the decline to several factors: the pandemic, the elimination of standardized testing—which has forced UCSD to rely on increasingly inflated and therefore useless high school grades—and political pressure from state lawmakers to admit more “low-income students and students from underrepresented minority groups.”

…This situation goes to the heart of the present conundrum: in order to holistically admit a diverse and representative class, we need to admit students who may be at a higher risk of not succeeding (e.g. with lower retention rates, higher DFW rates, and longer time-to-degree).

The report exposes a hard truth: expanding access without preserving standards risks the very idea of a higher education. Can the cultivation of excellence survive an egalitarian world?

In defense of Schumpeter

Factories of Ideas? Big Business and the Golden Age of American Innovation (Job Market Paper) [PDF]

This paper studies the Great Merger Wave (GMW) of 1895-1904—the largest consolidation event in U.S. history—to identify how Big Business affected American innovation. Between 1880 and 1940, the U.S. experienced a golden age of breakthrough discoveries in chemistry, electronics, and telecommunications that established its technological leadership. Using newly constructed data linking firms, patents, and inventors, I show that consolidation substantially increased innovation. Among firms already innovating before the GMW, consolidation led to an increase of 6 patents and 0.6 breakthroughs per year—roughly four-fold and six-fold increases, respectively. Firms with no prior patents were more likely to begin innovating. The establishment of corporate R\&D laboratories served as a key mechanism driving these gains. Building a matched inventor–firm panel, I show that lab-owning firms enjoyed a productivity premium not due to inventor sorting, robust within size and technology classes. To assess whether firm-level effects translated into broader technological progress, I examine total patenting within technological domains. Overall, the GMW increased breakthroughs by 13% between 1905 and 1940, with the largest gains in science-based fields (30% increase).

That is the job market paper of Pier Paolo Creanza, who is on the market this year from Princeton.

My excellent Conversation with Sam Altman

Recorded live in Berkeley, at the Roots of Progress conference (an amazing event), here is the material with transcript, here is the episode summary:

Sam Altman makes his second appearance on the show to discuss how he’s managing OpenAI’s explosive growth, what he’s learned about hiring hardware people, what makes roon special, how far they are from an AI-driven replacement to Slack, what GPT-6 might enable for scientific research, when we’ll see entire divisions of companies run mostly by AI, what he looks for in hires to gauge their AI-resistance, how OpenAI is thinking about commerce, whether GPT-6 will write great poetry, why energy is the binding constraint to chip-building and where it’ll come from, his updated plan for how he’d revitalize St. Louis, why he’s not worried about teaching normies to use AI, what will happen to the price of healthcare and hosing, his evolving views on freedom of expression, why accidental AI persuasion worries him more than intentional takeover, the question he posed to the Dalai Lama about superintelligence, and more.

Excerpt:

COWEN: What is it about GPT-6 that makes that special to you?

ALTMAN: If GPT-3 was the first moment where you saw a glimmer of something that felt like the spiritual Turing test getting passed, GPT-5 is the first moment where you see a glimmer of AI doing new science. It’s very tiny things, but here and there someone’s posting like, “Oh, it figured this thing out,” or “Oh, it came up with this new idea,” or “Oh, it was a useful collaborator on this paper.” There is a chance that GPT-6 will be a GPT-3 to 4-like leap that happened for Turing test-like stuff for science, where 5 has these tiny glimmers and 6 can really do it.

COWEN: Let’s say I run a science lab, and I know GPT-6 is coming. What should I be doing now to prepare for that?

ALTMAN: It’s always a very hard question. Even if you know this thing is coming, if you adapt your —

COWEN: Let’s say I even had it now, right? What exactly would I do the next morning?

ALTMAN: I guess the first thing you would do is just type in the current research questions you’re struggling with, and maybe it’ll say, “Here’s an idea,” or “Run this experiment,” or “Go do this other thing.”

COWEN: If I’m thinking about restructuring an entire organization to have GPT-6 or 7 or whatever at the center of it, what is it I should be doing organizationally, rather than just having all my top people use it as add-ons to their current stock of knowledge?

ALTMAN: I’ve thought about this more for the context of companies than scientists, just because I understand that better. I think it’s a very important question. Right now, I have met some orgs that are really saying, “Okay, we’re going to adopt AI and let AI do this.” I’m very interested in this, because shame on me if OpenAI is not the first big company run by an AI CEO, right?

COWEN: Just parts of it. Not the whole thing.

ALTMAN: No, the whole thing.

COWEN: That’s very ambitious. Just the finance department, whatever.

ALTMAN: Well, but eventually it should get to the whole thing, right? So we can use this and then try to work backwards from that. I find this a very interesting thought experiment of what would have to happen for an AI CEO to be able to do a much better job of running OpenAI than me, which clearly will happen someday. How can we accelerate that? What’s in the way of that? I have found that to be a super useful thought experiment for how we design our org over time and what the other pieces and roadblocks will be. I assume someone running a science lab should try to think the same way, and they’ll come to different conclusions.

COWEN: How far off do you think it is that just, say, one division of OpenAI is 85 percent run by AIs?

ALTMAN: Any single division?

COWEN: Not a tiny, insignificant division, mostly run by the AIs.

ALTMAN: Some small single-digit number of years, not very far. When do you think I can be like, “Okay, Mr. AI CEO, you take over”?

Of course we discuss roon as well, not to mention life on the moons of Saturn…

Track the 2025-2026 Economics Job Market

“Econ Now aggregates economics PhD job market papers, job postings for economists, and economics conference information all in one place.”

Link here, I have yet to try it.