AGI and the division of powers within government

I’m not sure the AGI concept is entirely well-defined, but let’s put aside the more dramatic scenarios and assume that AI can perform at least some of the following functions:

1. Evaluate many policies and regulations better than human analysts can.

2. Sometimes outperform and outguess asset price markets.

3. Formulate the most effective campaign strategies for politicians.

4. Understand and manage geopolitics better than humans can.

5. Write better Supreme Court opinions and, for a given ethical point of view, produce a better ruling.

You could add to that list, but you get the point. These are a big stretch beyond current models, but not on the super-brain level.

One option, of course, is simply that everyone can use this service, like the current GPT-4, and then few questions arise about differential political access. But what if the service is expensive, and/or access is restricted for reasons of law, regulation, and national security? Exactly who or what in government allocates use of the service within government?

Can any member of the House of Representatives pay the service a visit and ask away? Do incumbents then end up with a major new advantage over challengers?

How do you stop the nuttier Reps from giving away the information they can access, perhaps to unsalubrious parties or foreign powers? Don’t national security issues suddenly become much tougher, as if all Reps suddenly are on the Senate Intelligence Committee?

Surely the President can claim it is a weapon of sorts and access it at will? Can he or she veto the access of other individuals? Will the rival running for President, from the other party, have any access at all?

Can the national security establishment veto the access of individuals within the political establishment? If so, do the Executive Branch and national security establishment gain greatly in power?

Have we now created a kind of “fourth branch” of government?

Do we ask the AI who or what should get access?

Say the Republicans or Democrats win a trifecta? Do they now have a kind of monopoly access over the AI?

Can the technically non-governmental Fed access it? If so, just the chair, the whole FOMC, or the staff as well? If the staff cannot access it, what good are they?

We haven’t even talked about federalism yet — what if a governor has a pressing query? Will Texas build its own model?

Let’s say this is the UK — does the party in opposition have equal access to the AI? Exactly which legal entity with which governance mechanism counts as “the party in opposition”? Can you start a small party, opposing the national government, just to get access?

Say some Brits are in a coalition with one of those tiny parties from Northern Ireland. Can the coalition partner demand access on equal terms? (How about Sinn Fein?) How about in PR systems?

Doesn’t this make all political coalitions higher stakes, more fraught, and more fragile? And more suffused with security risks?

Inquiring minds wish to know.

A few random Tucker Carlson thoughts

A few times I was invited to be on the show, typically after I would write something on immigration. I always refused, figuring I wouldn’t get fair treatment and would only feed a very unfair method of conducting the discourse.

I never have been a regular watcher, not of any news show, but I have seen him a number of times, often in other venues or homes, or I might pause when moving through the channels. It struck me each time how remarkably talented and smart and energetic he was, while what he said was very often not smart at all. (I’ve heard the same about his core smarts from a few different people who knew him when he was younger.) It also struck me how fluently he could coin an attack phrase, maybe better than anyone else but DT?

It is now increasingly debated how much he meant the different things he was saying, for instance about the last presidential election. And was it smart to put criticisms of Fox management into texts?

The biggest lessons here are cautionary ones. First, the biggest stars can do some very unwise things, and eventually so many of them do.

Second, “the right wing” should pay heed to the reality that many of its most talented representatives go down such dark paths. Since most (non-right wing) people are reluctant to admit Carlson’s extreme talent, this point does not always come up. Carlson, of course, was doing very well with his chosen path, and he still has the option of doing very well again.

But in which directions will the constellations of the audience and of fame point him? Which exactly was the guiding star he lacked?

Generative AI at Work

We study the staggered introduction of a generative AI-based conversational assistant using data from 5,179 customer support agents. Access to the tool increases productivity, as measured by issues resolved per hour, by 14 percent on average, with the greatest impact on novice and low-skilled workers, and minimal impact on experienced and highly skilled workers. We provide suggestive evidence that the AI model disseminates the potentially tacit knowledge of more able workers and helps newer workers move down the experience curve. In addition, we show that AI assistance improves customer sentiment, reduces requests for managerial intervention, and improves employee retention.

That is from a new NBER working paper by Erik Brynjolfsson, Danielle Li, and Lindsey R. Raymond.

Voters as Mad Scientists: Essays on Political Irrationality

Bryan Caplan’s latest collection of essays, Voters as Mad Scientists: Essays on Political Irrationality is out and, as the kids say, it’s a banger. Voters as Mad Scientists includes classics on social desirability bias, the ideological Turing test, the Simplistic Theory of Left and Right and more. Lots of wisdom in these short essays. Bryan is a pundit who writes for the long run. Here’s one on the historically hollow cries of populism:

History textbooks are full of populist complaints about business: the evils of Standard Oil, the horrors of New York tenements, the human body parts in Chicago meat packing plants. To be honest, I haven’t taken these complaints seriously since high school….Still, I periodically wonder if my nonchalance is unjustified. Populists rub me the wrong way, but how do I know they didn’t have a point? After all, I have near-zero first-hand knowledge of what life was like in the heyday of Standard Oil, New York tenements, or Chicago meat-packing. What would I have thought if I was there?

Yet, Bryan continues, there is a test. What do populists say about the technological revolutions of the 2000s, which Bryan has seen with his own eyes?

I’ve seen the tech industry dramatically improve human life all over the world.

Amazon is simply the best store that ever existed, by far, with incredible selection and unearthly convenience. The price: cheap.

Facebook, Twitter, and other social media let us socialize with our friends, comfortably meet new people, and explore even the most obscure interests. The price: free.

Uber and Lyft provide high-quality, convenient transportation. The price: really cheap.

Skype is a sci-fi quality video phone. The price: free. YouTube gives us endless entertainment. The price: free.

Google gives us the totality of human knowledge! The price: free.

That’s what I’ve seen. What I’ve heard, however, is totally different. The populists of our Golden Age are loud and furious. They’re crying about “monopolies” that deliver fire-hoses worth of free stuff. They’re bemoaning the “death of competition” in industries (like taxicabs) that governments forcibly monopolized for as long as any living person can remember. They’re insisting that “only the 1% benefit” in an age when half of the high-profile new businesses literally give their services away for free. And they’re lashing out at businesses for “taking our data” – even though five years ago hardly anyone realized that they had data.

My point: If your overall reaction to business progress over the last fifteen years is even mildly negative, no sensible person will try to please you, because you are impossible to please. Yet our new anti-tech populists have managed to make themselves a center of pseudo-intellectual attention.

Read the whole thing and follow Bryan at Bet On It.

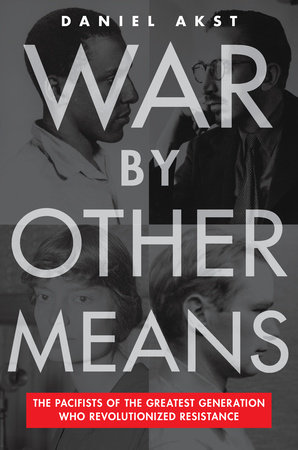

War by Other Means

In The Trial of The Chicago Seven, the Aaron Sorkin movie about the group of anti–Vietnam War protesters charged with inciting riots at the 1968 Democratic National Convention, the focus is on the antics of Abbie Hoffman (Sacha Baron Cohen) and Jerry Rubin (Jeremy Strong). It’s a good movie but their story is not the only story. Among the Chicago Seven was an older, quieter, more bemused David Dellinger (played in the movie by John Carroll Lynch). It was not Dellinger’s first trial. In 1940 Dellinger had refused to register for the then-new draft, the first peacetime draft in America’s history, and he had been imprisoned as a conscientious objector and pro-pacifism protester. Dellinger served a year in federal prison in Danbury, CT, and upon his release, he adamantly refused to register once more. He was imprisoned for an additional two years in the maximum-security facility at Lewisburg, Pennsylvania, where he engaged in hunger strikes and endured periods of solitary confinement. Dellinger was the real deal.

In War by Other Means, Daniel Akst recaptures an older generation of anti-war, pro-pacifism protesters; people like Dellinger, the radical Catholic Dorothy Day, Bayard Rustin, Dwight MacDonald and others. This earlier group grew out of the disillusionment that many Americans felt after World War One–they resolved to never again be entangled in European death and destruction.

During the interwar period, moreover, the United States had developed perhaps the largest and best-organized pacifist movement in the world. Pacifism was part of the curriculum at some schools and firmly on the agenda of the mainline Protestant denominations that were such important institutions in the life of this churchgoing nation at the time….Pacifism was well established on campuses thanks to a massive and diverse national student anti-war movement….During the thirties, pacifism probably surpassed even the Depression as the dominant social issue in American liberal Protestantism…

It wasn’t just liberal Protestants; pacifism also drew on the isolationist tradition:

…isolationism simply wanted to keep America out of other peoples’ bloody conflicts; it advocated strength through preparedness and put faith in the vastness of the oceans to keep us safe. “Isolationism” has become a dirty word since its heyday in the thirties, when it came into common usage. But in fact it started life as a pejorative, one used by American expansionists in the late nineteenth century to tar the righteous killjoys who objected to burgeoning US imperialism…

Regrettably, the unique blend of “left-wing” pacifism and “right-wing” isolationism, once prevalent in America, has largely vanished. Mostly vanished also is–for want of better terms–the Christian and left-wing libertarianism of people like Dellinger, Day and MacDonald, who although being on the left and hardly pro-market had a deep appreciation for individualism and civil society and a fear of the homogenizing and brutalizing role of the state.

Daniel Akst’s War by Other Means is an important and engaging look at a cast of remarkable American characters and their unique blend of ideological pacifism.

Addendum: Nick Gillespie at Reason has a very good interview with Akst.

AI and economic liability

I’ve seen a number of calls lately to place significant liability on the major LLM models and their corporate owners, and so I cover that topic in my latest Bloomberg column. There are numerous complications, and I cover a mere smidgen of them, but still more analytics are needed here. Excerpt:

Imagine a bank robbery that is organized through emails and texts. Would the email providers or phone manufacturers be held responsible? Of course not. Any punishment or penalties would be meted out to the criminals…

In the case of the bank robbery, the providers of the communications medium or general-purpose technology (i.e., the email account or mobile device) are not the lowest-cost avoiders and have no control over the harm. And since general-purpose technologies — such as mobile devices or, more to the point, AI large language models — have so many practical uses, the law shouldn’t discourage their production with an additional liability burden.

Of course there are many more complications, and I am not saying zero corporate liability is always correct. But we do need to start with the analytics, and a simple fear of AI-related consequences does not settle the matter. There is this:

On a more practical level, liability assignment to the AI service just isn’t going to work in a lot of areas. The US legal system, even when functioning well, is not always able to determine which information is sufficiently harmful. A lot of good and productive information — such as teaching people how to generate and manipulate energy — can also be used for bad purposes.

Placing full liability on AI providers for all their different kinds of output, and the consequences of those outputs, would probably bankrupt them. Current LLMs can produce a near-infinite variety of content across many languages, including coding and mathematics. If bankruptcy is indeed the goal, it would be better for proponents of greater liability to say so.

Here is a case where partial corporate liability may well make sense:

It could be that there is a simple fix to LLMs that will prevent them from generating some kinds of harmful information, in which case partial or joint liability might make sense to induce the additional safety. If we decide to go this route, we should adopt a much more positive attitude toward AI — the goal, and the language, should be more about supporting AI than regulating it or slowing it down. In this scenario, the companies might even voluntarily adopt the beneficial fixes to their output, to improve their market position and protect against further regulatory reprisals.

Again, not the final answers but I am imploring people to explore the real analytics on these questions.

Questions pondered by Gwern

Here is one:

Why does writing in the morning (anecdotally so far) seem to be so effective for writers, even ones who are not morning persons? While programmers, which seems like a similar occupation, are invariably owls?

Here is a much longer list.

Using AI in politics

Could AI be used to generate strategic advantage in politics and elections?

Without doubt. We used it to improve prediction of the true critical voters in 2016 (but not to improve the execution of digital marketing, per the Cadwalladr conspiracy) and the true critical voters and true marginal seats in 2019. Competent campaigns everywhere could already, pre-GPT, use AI tools to improve performance.

We did some simple experiments last year to see if you could run ‘synthetic’ focus groups and ‘synthetic’ polls inside a LLM. Yes you can. We interrogated synthetic swing voters and synthetic MAGA fans on, for example, Trump running again. Responses are indistinguishable from real people as you might expect. And polling experiments similarly produced results very close to actual polls. Some academic papers have been published showing similar ideas to what we experimented with. There is no doubt that a competent team could use these emerging tools to improve tools for politics and perform existing tasks faster and cheaper. And one can already see this starting (look at who David Shor is hiring).

It’s a sign of how fast AI is moving that this idea was new last summer (I first heard it discussed among top people roughly July), we and others tested it, and focus has moved to new ideas without ~100% of those in mainstream politics today having any idea these possibilities exist.

“Almost space” markets in everything

The space race just got a new entrant. France’s Zephalto is offering passengers the chance to travel to the stratosphere in a balloon, starting at €120,000 ($132,000) per person in 2025.

“I partnered with the French space agency, and we worked on the concept of the balloon together,” says Zephalto founder and aerospace engineer Vincent Farret d’Astiès.

He tells Bloomberg that he’s planning on 60 flights a year, with just six passengers on board each flight. The company aims to provide an experience that brings the best bits of French hospitality—fine food, wine and design—to the edges of space for those who can afford the six-figure ticket.

Balloons filled with helium or hydrogen will depart from France with two pilots on board and rise 25 kilometers (15.5 miles) into the stratosphere for 1 1/2 hours. Once at peak altitude, which is about three times higher than for a commercial airliner, the balloon will stay for three hours, giving guests a chance to take in views previously seen only by astronauts. The descent will take a further hour and a half, for a six-hour round trip.

Here is more from Sarah Rappaport at Bloomberg. Via Daniel Lippman.

A Mosquito Factory?!

A “mosquito factory” might sound like the last thing you’d ever want, but Brazil is constructing a facility capable of producing five billion mosquitoes annually. The twist? The factory will breed mosquitoes carrying a special bacteria that significantly reduces their ability to transmit viruses. As far as I can tell, however, the new mosquitoes still suck your blood.

Nature: The bacterium Wolbachia pipientis naturally infects about half of all insect species. Aedes aegypti mosquitoes, which transmit dengue, Zika, chikungunya and other viruses, don’t normally carry the bacterium, however. O’Neill and his colleagues developed the WMP mosquitoes after discovering that A. aegypti infected with Wolbachia are much less likely to spread disease. The bacterium outcompetes the viruses that the insect is carrying.

When the modified mosquitoes are released into areas infested with wild A. aegypti, they slowly spread the bacteria to the wild mosquito population.

Several studies have demonstrated the insects’ success. The most comprehensive one, a randomized, controlled trial in Yogyakarta, Indonesia, showed that the technology could reduce the incidence of dengue by 77%, and was met with enthusiasm by epidemiologists.

In Brazil, where the modified mosquitoes have so far been tested in five cities, results have been more modest. In Niterói, the intervention was associated with a 69% decrease of dengue cases. In Rio de Janeiro, the reduction was 38%.

Wolbachia-infected mosquitoes have already been approved by Brazilian regulatory agencies. But the technology has not yet been officially endorsed by the World Health Organization (WHO), which could be an obstacle to its use in other countries. The WHO’s Vector Control Advisory Group has been evaluating the modified mosquitoes, and a discussion about the technology is on the agenda for the group’s next meeting later this month.

Do older economists write differently?

The scholarly impact of academic research matters for academic promotions, influence, relevance to public policy, and others. Focusing on writing style in top-level professional journals, we examine how it changes with age, and how stylistic differences and age affect impact. As top-level scholars age, their writing style increasingly differs from others’. The impact (measured by citations) of each contribution decreases, due to the direct effect of age and the much smaller indirect effects through style. Non-native English-speakers write in different styles from others, in ways that reduce the impact of their research. Nobel laureates’ scholarly writing evinces less certainty about the conclusions of their research than that of other highly productive scholars.

Here is the full NBER paper by Lea-Rachel Kosnik and Daniel S. Hamermesh.

Wednesday assorted links

1. Scott Aaronson with some AGI risk sanity.

2. The revise and resubmit process in economics.

4. Taylor Swift did due diligence on FTX.

5. Haiti fact of the day: “Ticket from Miami to Port-au-Prince: $124 Ticket from Port-au-Prince to Miami: $1,000-3,000”

6. Magnus playing poker (WSJ).

My Conversation with Anna Keay

A very good episode, here is the audio, video, and transcript. Here is part of the episode summary:

Tyler sat down with Anna to discuss the most plausible scenario where England could’ve remained a republic in the 17th century, what Robert Boyle learned from Sir William Petty, why some monarchs build palaces and others don’t, how renting from the Landmark Trust compares to Airbnb, how her job changes her views on wealth taxes, why neighborhood architecture has declined, how she’d handle the UK’s housing shortage, why giving back the Koh-i-Noor would cause more problems than it solves, why British houses have so little storage, the hardest part about living in an 800-year-old house, her favorite John Fowles book, why we should do more to preserve the Scottish Enlightenment, and more.

And here is one excerpt:

COWEN: Which are the old buildings that we have too many of in Britain? There’s a lot of Christopher Wren churches. I think there’s over 20.

KEAY: Too many?

COWEN: What if they were 15? They’re not all fantastic.

KEAY: They’re not all fantastic? Tell me one that isn’t fantastic.

COWEN: The Victorians knocked down St. Mildred. I’ve seen pictures of it. I don’t miss it.

KEAY: Well, you don’t miss something that’s not there. I think it’d be pretty hard to convince me that any Christopher Wren church wasn’t worth hanging on to. But your point is right, which is to say that not everything that was ever built is worth retaining. There are things which are clearly of much less interest or were poorly built, which are not serving a purpose anymore in a way that they need to. To me, it’s all about assessing what matters, what we care about.

It’s incredibly important to remember how you have to try and take the long view because if you let things go, you cannot later retrieve them. We look at the decisions that were made in the past about things that we really care about that were demolished — wonderful country houses, we’ve mentioned. It’s fantastic, for example, Euston Station, one of the great stations of the world, built in the middle of the 19th century, demolished in the ’60s, regretted forever since.

So, one of the things you have to be really careful about is to make a distinction between the fashion of the moment and things which we are going to regret, or our children or our grandchildren are going to curse us for having not valued or not thought about, not considered.

Which is why, in this country, we have this thing called the listing system, where there’s a process of identifying buildings which are important, and what’s called listing them — putting them on a list — which means that if you own them, you can’t change them without getting permission, which is a way of ensuring that things which you as an owner or I as an owner might not treat with scorn, that the interest of generations to come are represented in that.

COWEN: Why were so many big mistakes made in the middle part of the 20th century? St. Pancras almost was knocked down, as I’m sure you know. That would have been a huge blunder. There was something about that time that people seem to have become more interested in ugliness. Or what’s your theory? How do you explain the insanity that took all of Britain for, what, 30 years?

KEAY: Well, I think this is such a good question because this is, to me, what the study of history is all about, which is, you have to think about what it was like for that generation. You have to think of what it was like for people in the 1950s and ’60s, who had experienced, either firsthand or very close at hand, not just one but two catastrophic world wars in which numbers had been killed, places had been destroyed. The whole human cost of that time was so colossal, and the idea for that generation that something really fundamental had to change if we were going to be a society that wasn’t going to be killing one another at all time.

This has a real sort of mirror in the 17th century, during the Civil War in the 17th century. There’s a real feeling that something had to be done. Otherwise, God was going to strike down this nation, this errant nation. I think for that generation in the ’50s and ’60s, the sense that we simply have to do things differently because this pattern of life, this pattern of existence, this way we’ve operated as a society has been so destructive.

Although lots of things were done — when it comes to urban planning and so on — that we really regret now, I think you have to be really careful not to diminish the seriousness of intent of those people who were trying to conceive of what that world might be — more egalitarian, more democratic, involving more space, more air, more light, healthier — all these kinds of things.

Definitely recommended, with numerous interesting parts. And I am very happy to recommend Anna’s latest book The Restless Republic: Britain Without a Crown.

*The Two-Parent Privilege*

A new and great book, authored by Melissa S. Kearney of the University of Maryland. The subtitle is How Americans Stopped Getting Married and Started Falling Behind, and here is one excerpt of the summary points:

Two-parent families are beneficial for children.

The class divide in marriage and family structure has exacerbated inequality and class gaps.

Places that have more two-parent families have higher rates of upward mobility.

Not talking about these facts is counterproductive.

The marshaled evidence is convincing, and I will be blogging more about this book. While some stiff competition is coming, this could be the most important economics and policy book of this year. And yes it is remarkable that such a book is so needed, but yes it is. And here is Melissa on Twitter.

Ideas for regulating AI safety

Noting these come from Luke Muehlhauser, and he is not speaking for Open Philanthropy in any official capacity:

- Software export controls. Control the export (to anyone) of “frontier AI models,” i.e. models with highly general capabilities over some threshold, or (more simply) models trained with a compute budget over some threshold (e.g. as much compute as $1 billion can buy today). This will help limit the proliferation of the models which probably pose the greatest risk. Also restrict API access in some ways, as API access can potentially be used to generate an optimized dataset sufficient to train a smaller model to reach performance similar to that of the larger model.

- Require hardware security features on cutting-edge chips. Security features on chips can be leveraged for many useful compute governance purposes, e.g. to verify compliance with export controls and domestic regulations, monitor chip activity without leaking sensitive IP, limit usage (e.g. via interconnect limits), or even intervene in an emergency (e.g. remote shutdown). These functions can be achieved via firmware updates to already-deployed chips, though some features would be more tamper-resistant if implemented on the silicon itself in future chips.

- Track stocks and flows of cutting-edge chips, and license big clusters. Chips over a certain capability threshold (e.g. the one used for the October 2022 export controls) should be tracked, and a license should be required to bring together large masses of them (as required to cost-effectively train frontier models). This would improve government visibility into potentially dangerous clusters of compute. And without this, other aspects of an effective compute governance regime can be rendered moot via the use of undeclared compute.

- Track and require a license to develop frontier AI models. This would improve government visibility into potentially dangerous AI model development, and allow more control over their proliferation. Without this, other policies like the information security requirements below are hard to implement.

- Information security requirements. Require that frontier AI models be subject to extra-stringent information security protections (including cyber, physical, and personnel security), including during model training, to limit unintended proliferation of dangerous models.

- Testing and evaluation requirements. Require that frontier AI models be subject to extra-stringent safety testing and evaluation, including some evaluation by an independent auditor meeting certain criteria. [footnote in the original]

- Fund specific genres of alignment, interpretability, and model evaluation R&D. Note that if the genres are not specified well enough, such funding can effectively widen (rather than shrink) the gap between cutting-edge AI capabilities and available methods for alignment, interpretability, and evaluation. See e.g. here for one possible model.

- Fund defensive information security R&D, again to help limit unintended proliferation of dangerous models. Even the broadest funding strategy would help, but there are many ways to target this funding to the development and deployment pipeline for frontier AI models.

- Create a narrow antitrust safe harbor for AI safety & security collaboration. Frontier-model developers would be more likely to collaborate usefully on AI safety and security work if such collaboration were more clearly allowed under antitrust rules. Careful scoping of the policy would be needed to retain the basic goals of antitrust policy.

- Require certain kinds of AI incident reporting, similar to incident reporting requirements in other industries (e.g. aviation) or to data breach reporting requirements, and similar to some vulnerability disclosure regimes. Many incidents wouldn’t need to be reported publicly, but could be kept confidential within a regulatory body. The goal of this is to allow regulators and perhaps others to track certain kinds of harms and close-calls from AI systems, to keep track of where the dangers are and rapidly evolve mitigation mechanisms.

- Clarify the liability of AI developers for concrete AI harms, especially clear physical or financial harms, including those resulting from negligent security practices. A new framework for AI liability should in particular address the risks from frontier models carrying out actions. The goal of clear liability is to incentivize greater investment in safety, security, etc. by AI developers.

- Create means for rapid shutdown of large compute clusters and training runs. One kind of “off switch” that may be useful in an emergency is a non-networked power cutoff switch for large compute clusters. As far as I know, most datacenters don’t have this. Remote shutdown mechanisms on chips (mentioned above) could also help, though they are vulnerable to interruption by cyberattack. Various additional options could be required for compute clusters and training runs beyond particular thresholds.

I am OK with some of these, provided they are applied liberally — for instance, new editions of the iPhone require regulatory consent, but that hasn’t thwarted progress much. That may or may not be the case for #3 through #6, I don’t know how strict a standard is intended or who exactly is to make the call. Perhaps I do not understand #2, but it strikes me as a proposal for a complete surveillance society, at least as far as computers are concerned — I am opposed! And furthermore it will drive a lot of activity underground, and in the meantime the proposal itself will hurt the EA brand. I hope the country rises up against such ideas, or perhaps more likely that they die stillborn. (And to think they are based on fears that have never even been modeled. And I guess I can’t bring in a computer from Mexico to use?) I am not sure what “restrict API access” means in practice (to whom? to everyone who might be a Chinese spy? and does Luke favor banning all open source? do we really want to drive all that underground?), but probably I am opposed to it. I am opposed to placing liability for a General Purpose Technology on the technology supplier (#11), and I hope to write more on this soon.

Finally, is Luke a closet accelerationist? The status quo does plenty to boost AI progress, often through the military and government R&D and public universities, but there is no talk of eliminating those programs. Why so many regulations but the government subsidies get off scot-free!? How about, while we are at it, banning additional Canadians from coming to the United States? (Canadians are renowned for their AI contributions.) After all, the security of our nation and indeed the world is at stake. Canada is a very nice country, and since 1949 it even contains Newfoundland, so this seems like less of an imposition than monitoring all our computer activity, right? It might be easier yet to shut down all high-skilled immigration. Any takers for that one?