Category: Economics

A Republic, if you can keep it

The conclusion of Justice Gorsuch’s concurrence in the tariff case:

For those who think it important for the Nation to impose more tariffs, I understand that today’s decision will be disappointing. All I can offer them is that most major decisions affecting the rights and responsibilities of the American people (including the duty to pay taxes and tariffs) are funneled through the legislative process for a reason. Yes, legislating can be hard and take time. And, yes, it can be tempting to bypass Congress when some pressing problem arises. But the deliberative nature of the legislative process was the whole point of its design. Through that process, the Nation can tap the combined wisdom of the people’s elected representatives, not just that of one faction or man. There, deliberation tempers impulse, and compromise hammers disagreements into workable solutions. And because laws must earn such broad support to survive the legislative process, they tend to endure, allowing ordinary people to plan their lives in ways they cannot when the rules shift from day to day. In all, the legislative process helps ensure each of us has a stake in the laws that govern us and in the Nation’s future. For some today, the weight of those virtues is apparent. For others, it may not seem so obvious. But if history is any guide, the tables will turn and the day will come when those disappointed by today’s result will appreciate the legislative process for the bulwark of liberty it is.

South Africa facts of the day

With the lights back on and freight beginning to move again, in November South Africa won its first credit upgrade in two decades after S&P Global Ratings lifted the sovereign rating by one notch to double B.

Investor confidence is also up. According to Nedbank, private sector investment announcements tripled last year to more than R382bn ($23.6bn). Since the end of 2023, the South African rand has been the world’s best performing major currency on a spot basis reflecting immediate exchange rates, up nearly 15 per cent against the dollar. Over the past 12 months, the JSE all-share index is up 37 per cent in rand terms.

Growth reached 1.2 per cent in 2025, hardly transformative, but double the rate of the previous year. For the first time in a long time, economists are talking enthusiastically about “green shoots” and forecasting year-on-year expansion.

Here is more from David Pilling, Joseph Cotterill, and Monica Mark at the FT.

I podcast on Spain and Latin America

With Rasheed Griffith and Diego Sanchez de la Cruz. Here is one excerpt:

Rasheed: Tyler, if El Salvador were to become a success story, what would it likely be a success at first? Manufacturing, migratory investment, investment tourism, or something more unusual? Because those typical answers feel like maybe they have missed the boat.

Tyler: I think El Salvador has turned itself into a very safe country, which is great news. I think you and I both saw that when we were there. I think under all scenarios they have a very hard time becoming much richer. So I don’t think it’s manufacturing, through no fault of their own: most of the world is de-industrializing, and manufacturing is not a source of growing employment due to automation. But there are other issues for Central America, such as scale and the cost of electricity. El Salvador is not the best in Latin America on either of those, compared, say, to Northern Mexico. So I don’t see what its relative advantage is. And it’s just a small place.

I checked with ChatGPT: one estimate places about a third of the population as living in the United States. That’s probably the more ambitious one third. So there’s considerable brain drain. I do think in terms of levels they can do much more with tourism. They have an entire Pacific Coast which is quite underdeveloped, and could be developed very fruitfully. Sell condominiums, have people do more surfing. Try to have something a bit more like the next Acapulco, but even there you’re competing against Cancun among other locations, and it will boost their level but it won’t be a permanently higher rate of growth.

And that’s the case with many touristic developments. They don’t self-compound forever and give you many other productivity improvements. So I expect El Salvador to do much better, but I know a lot of people who read Bukele on social media and think it’s about to be the next Singapore or something, and I just don’t know how they’re gonna do that under really any scenario. I do think it will improve and they’ll get more foreign investment and more tourism.

Rasheed: How much is “much better”? That’s doing a lot of work there.

Tyler: When you look at the Pacific Coast and you and I sat right next to the water [it could develop much more]. So that could create quite a few jobs. But in the longer term steady state I think they’ll have a hard time averaging more than 2% growth. So they can attach themselves more closely to the US economy. They use the dollar, and let’s just assume their governance does not go crazy. That’s another risk, right? Bukele or whoever succeeds him could overreach. The checks and balances, the constitutional protections there, seem quite weak. Another possible risk is that, even despite his best efforts, the country becomes dangerous again. You look at Costa Rica, which had been quite safe and did all the right things, and is larger and has many more resources, and it’s now becoming a more dangerous place because it was targeted by external, in some cases Mexican, drug traffickers. And that could happen to El Salvador as well. So even if you think the current campaign is gonna work forever, it doesn’t mean the country stays safe forever. It’s not really in a very safe region. So that’s a side risk which will also keep down foreign investment. I don’t know, I am definitely seeing the upside but I’m not super duper optimistic there.

Plenty of fresh material, with transcript, recommended.

The Great Forgetting, a continuing series

On Thursday, the Center for American Progress, a prominent left-leaning think tank that often cultivates policy ideas later adopted by the Democratic Party, proposed a two-year freeze on the prices of 24 food items, such as strawberries and ground beef.

Grocers would voluntarily agree to capping the cost of food in exchange for paying lower fees on credit card transactions, according to the proposal, which was written by a group led by Jared Bernstein, who chaired the White House Council of Economic Advisers during Joe Biden’s presidency.

That, in effect, would force credit card companies to absorb the cost of subsidizing food purchases, a highly unusual arrangement. A draft of the proposal said the Federal Reserve could force credit card companies to do so via its regulatory oversight, though that provision was removed after questions from The Washington Post.

It is not clear how else the government might persuade credit card companies to foot the bill, nor how many grocers would agree.

Here is more from The Washington Post. Elsewhere in The Great Forgetting, three Supreme Court Justices seem to have forgotten what the Constitution says.

Why don’t American companies hire more in Canada?

In the specific sector I work in (previously law and now tech), I am surprised by how few US companies hire in Canada. The Canadians I know in these fields are typically on par with the Americans, but doing the same work at half the price. This superficially looks like an economic puzzle: with no timezone difference, language barrier, or cultural friction, why would American companies not hire the much cheaper Canadians? I believe the answer brings together everything I’ve touched on in this essay. The reason is legibility. There aren’t enough Canadians with resumes that American hiring algorithms recognize. If an American tech company uses “Previously worked at a company like Amazon” as a filter, a software engineer from RBC, despite being equally talented, does not pass the filter. If Canada wanted to see more of its citizens hired by US companies, the strategy shouldn’t be better education or training. It should be subsidizing large US companies to open offices in Canada, purely to brand candidates as “Amazon Product Managers.” Because once they have the badge, the market will finally see them.

Here is more from Daniel Frank; note the post covers some quite different issues, all related to talent. Via Watt.

India AI Data MCP

The Government of India’s Ministry of Statistics and Programme Implementation has created an impressive Model Context Protocol (MCP) server to connect AIs to Indian datasets. An AI connected to data via an MCP essentially knows the entire codebook and can make use of the data like an expert. Once connected, one can query the data in natural language and quickly create graphs and statistical analyses. I connected Claude to the MCP and created an elegant dashboard with data from India’s Annual Survey of Industries. Check it out.

My excellent Conversation with Joe Studwell

Here is the audio, video, and transcript. The conversation is based around Joe’s new and very good book How Africa Works: Success and Failure in the World’s Last Developmental Frontier. Here is part of the episode summary:

Tyler and Joe explore whether population density actually solves development, which African countries are likely to achieve stable growth, whether Africa has a manufacturing future, why state infrastructure projects decay while farmer-led irrigation thrives, what progress looks like in education and public health, whether charter cities or special economic zones can work, and how permanent Africa’s colonial borders really are. After testing Joe’s optimism about Africa, Tyler shifts back to Asia: what Japan and South Korea will do about depopulation, why industrial policy worked in East Asia but failed in India and Brazil, what went wrong in Thailand, and what Joe will tackle next.

Excerpt:

COWEN: Does Africa have a manufacturing future? Is robotics coming, AI, possibly some reshoring?

STUDWELL: Yes. I believe that Africa does have a manufacturing future.

COWEN: But making what? And at what cost of energy?

STUDWELL: They will start, as everybody does, producing garments, producing textiles, which in certain enclaves is already going on in Madagascar, in Lesotho, in Morocco, and they’ll move on to other things. They’ll start with those things because they are the most labor cost-sensitive products.

Africa is now in a position where, depending on which state you’re looking at, and taking China as a reference point, the cost of labor is now between a half and one-tenth of what it is in China. In China, factory labor is now around $600 a month at its cheapest. In a country like Ethiopia or Madagascar, it’s $60 or $65 a month. So, it’s a 10th of the cost, and that’s already beginning to have a bit of effect, often with Chinese firms moving production to Africa.

So, I think there is a future for manufacturing. It will depend on the extent to which African governments understand that you don’t really move forward fast for very long without manufacturing, that every developed country — apart from a few petro states and financial centers — has gone through a manufacturing phase of development. It depends on the extent to which African governments engage with that, but some, without doubt, will.

The Ethiopians, for instance, have already attempted to do that. What they’re trying to do has been somewhat derailed by the two-year civil war that took place from 2020, but they’re back on it now, and they’re trying to move forward.

The idea that robotics and AI are going to change the story I personally do not buy, principally for two reasons. One is the cost reason, because whenever people talk about what’s happening with robotics, no one ever talks about the cost of robots. In garmenting, for instance, even a basic robot will cost you in excess of $100,000, and you pay the cost upfront, and you’ve then paid that, whether there’s demand for your products or not. Also, in garmenting and in textiles, robots don’t work very well because they can’t work with material very well. They’re much better at working with solid things.

So, you’ve spent $100,000 for a robot when you can go out somewhere like Tana in Madagascar and get another skilled (because they’ve been doing it now for 20 years) garmenting employee for $60 or $65 to make the new order that you just got. And if the order doesn’t come through, you can sack them. You see what I’m saying? There’s a point about the cost of robotics.

COWEN: But think of automation more generally — it’s not that expensive. Most countries are de-industrializing. Even South Africa has been de-industrializing for a while, and China maybe has peaked out at industrialization, measured in terms of employment. It’s hard to trust their numbers. But maybe just everywhere is going to deindustrialize, and that will be very bad for Africa.

STUDWELL: I don’t think so. I think South Africa is deindustrializing because the ANC has followed a hyper-liberal approach to economic policy. I don’t think the ANC has ever really understood economic policy, frankly, so South Africa is an outlier in that respect. There are many other states in Africa, whether Nigeria or Ethiopia, which understand they’ve got to have a manufacturing future and intend to pursue one.

Then, as I was saying, the other point is, what people miss is the flexibility with robotics and AI. There’s very limited flexibility with robotic and automated production. When demand goes up, you can’t just stick in more robots, but when demand goes up in a people-operated factory, where the cost of labor is low, you can stick in more people and produce more.

Just one example: during COVID, when everybody was having home deliveries of supermarket goods, the share price of a UK firm called Ocado, which runs a supermarket but was also developing the software and consulting around building blind warehouses, went through the roof, but now it’s down through the floor.

And only last week, Kroger supermarket in the US said, “We’re closing five of these super-modern blind warehouses.” And the reason, fundamentally, is because they lack the flexibility that human labor brings to the job. So, I’m not saying that robots, automation, and AI are not important. They are important. What I am saying is that they are not going to derail a manufacturing future for a number of African countries that aggressively pursue it.

COWEN: But there’re a lot of developing nations around the world — you could look at India, you could look at Pakistan, even Thailand — where manufacturing has not taken off the way one might have wanted. There’re just major forces operating against it. And in the US, manufacturing employment was once 37 percent of the workforce; now it’s 7 percent to 8 percent.

It just seems like it’s swimming upstream for Africa — which again, has quite expensive energy — to think it will do that well. And again, South Africa had very good technology, pretty high state capacity. I don’t see the alternate world state where a wiser ANC would have made that work.

STUDWELL: Well, oddly enough, before the end of Apartheid, the manufacturing performance of South Africa was really not bad at all, with classic industrial policy, quite high levels of protection, and so forth. I think that demand for manufactured goods will continue to be high around the world, and the labor cost will continue to be a prime determinant of where producers go for low value-added goods. So, I think that the opportunity is there for African countries.

COWEN: But say there’re transportation costs internally, energy costs, political order uncertainty. Where’s the place where people really want to put all these manufacturing firms?

Interesting throughout, recommended.
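Studwell’s robot-versus-worker point can be made concrete with a back-of-the-envelope sketch. It uses only the figures he quotes: a basic garment robot at $100,000, workers in Ethiopia or Madagascar at $60 to $65 a month, and the roughly $600 a month he gives as the cheapest factory labor in his reference comparison (in context, China). The assumptions that one robot replaces exactly one worker, and that interest, maintenance, power, and depreciation can be ignored, are mine, not his.

```python
# Back-of-the-envelope robot-versus-worker comparison using figures from the
# interview. ASSUMPTIONS (not from the source): one robot replaces exactly one
# worker; no interest, maintenance, electricity, or depreciation is counted.

ROBOT_COST_USD = 100_000  # "in excess of $100,000" for a basic garment robot

MONTHLY_WAGES_USD = (
    ("China, cheapest factory labor", 600),
    ("Ethiopia/Madagascar, high end", 65),
    ("Ethiopia/Madagascar, low end", 60),
)


def breakeven_months(robot_cost: float, monthly_wage: float) -> float:
    """Number of months of one worker's wages that the upfront robot cost buys."""
    if monthly_wage <= 0:
        raise ValueError("monthly_wage must be positive")
    return robot_cost / monthly_wage


for label, wage in MONTHLY_WAGES_USD:
    months = breakeven_months(ROBOT_COST_USD, wage)
    print(f"{label} (${wage}/month): {months:,.0f} months, "
          f"about {months / 12:.0f} years of wages")
```

Even on these generous assumptions, at $60 to $65 a month the robot costs more than a century of one worker’s wages, and unlike the worker it cannot be let go when an order falls through.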

The Cassidy Report on the FDA

Senator Bill Cassidy (R-La.) released a new report on how to modernize the FDA. It has some good material.

… FDA’s process for reviewing new products can be an unpredictable “black box.” FDA teams can differ greatly in the extent to which they require testing or impose standards that are not calibrated to the relevant risks. The perceived disconnect between the forward leaning rhetoric and thought leadership of senior FDA officials and cautious reviewer practice creates further unpredictability. This uncertainty dampens investment and increases the time it takes for patients to receive new therapies.

Companies report that they face a “reviewer lottery,” where critical questions hinge on the approach of a small number of individuals at FDA. Some FDA review teams are creative and forward-leaning, helping developers design programs and overcome obstacles to get needed products to patients, without cutting corners. FDA’s Oncology Center of Excellence (OCE), for example, is repeatedly identified as a model for providing predictable yet flexible options for bringing new drugs to cancer patients. OCE is now a dialogue-based regulatory paradigm that has facilitated efforts by academia, industry, the National Institutes of Health (NIH), and others to develop new cancer therapies and launch innovative programs and pilots like Project Orbis, RealTime Oncology Review.

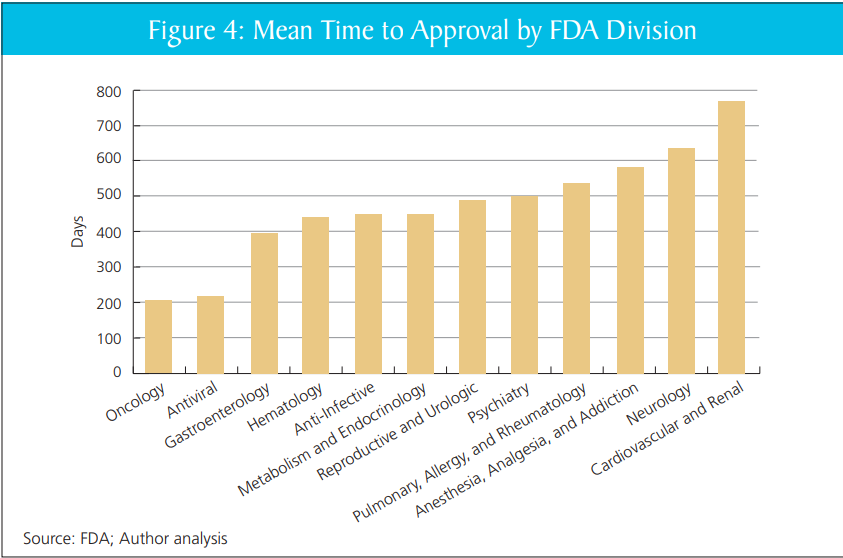

That’s quite right. In my report with DiMasi and Milne, we show that review times vary markedly within the FDA, across divisions, without clear cause. Put aside the big question of FDA or no FDA: scaling the practices of the better-performing divisions would likely yield large gains.

Mike Doherty further asks the AI what Alex Tabarrok would think of the Cassidy report. It replies:

Strongest features: It correctly diagnoses FDA as a bottleneck to innovation, emphasizes “least burdensome” evidence, calls for streamlined pathways (505(b)(2)-style for biologics, biosimilar simplification), and worries about U.S. losing early-phase trials to other countries. This is very much in Tabarrok’s wheelhouse: regulation as a hidden tax on innovation and growth.

Weakest features: It rarely quantifies tradeoffs, almost never confronts the “invisible graveyard” of patients who never see therapies because of delay, and treats reforms as marginal tweaks rather than testing fundamentally different regulatory models (e.g., insurance-based approval, private certification, or sunset/experimentation with parallel regimes).

If you imagine this as a draft memo handed to Tabarrok, he’d likely say: “Good directionally; now add 50% more economics, 50% more quantification, and 100% more willingness to experiment with institutional competition.”

Yeah, pretty good.

Addendum: In other FDA news see also Adam Kroetsch on Will Bayesian Statistics Transform Trials?

Addendum 2: FDA has now agreed to review Moderna’s flu vaccine, which is good, although the course reversal obviously speaks to the unpredictability of the FDA.

A simple test of how immigration really is going

I suggest looking at whether real estate prices in a particular locale have been rising or falling. If immigration is “ruining” a particular city, we would expect homes and other property values in that place to become much cheaper.

Home values have historically served as a strong indicator of the health of a city. Consider Detroit. It was one of the premier American cities in the mid-20th century, but the region lost a lot of its automobile industry to foreign competition, and crime rose precipitously. The city also was poorly managed. The result in real estate markets was a collapse in prices. If anyone asked you to point to quantifiable evidence for the decline in Detroit, it was easy to do so.

Detroit has undergone a renaissance since its nadir. New businesses have opened, crime rates have fallen, and the city feels more lively again. And since that turn of fortune, often dated around the 1990s, Detroit real estate has made a major comeback, putting aside the price collapse of the Great Recession in 2008. Home prices are not a perfect measure of how the city is doing, but they do pick up major and radical trends, both on the downside and on the upside.

The nice thing about market prices is that they show how buyers weigh the benefits of immigration against costs. Say some new immigrants have moved into your community and the quality of the schools has declined somewhat and traffic is modestly worse. At the same time, there are new businesses, the streets feel more lively, and it is easier to get a good local plumber. In the abstract, it is hard to tell which effects might be most important. But individuals, when bidding for homes or deciding to sell, make their own judgments. What happens to the home prices is a reflection of the collective judgments of people with major decisions about their lives on the line.

Of course in most of the Western world, including Malmo, real estate prices are healthy and very often rising. Here is the full Free Press link, by yours truly. The piece of course does cover the usual caveats, such as bubbles and busts, but note NIMBY factors will not alone reverse the basic conclusions.

“You see tech and AI everywhere but in the productivity statistics”

How many times have I heard versions of that claim? Erik Brynjolfsson picks up the telephone in the FT:

While initial reports suggested a year of steady labour expansion in the US, the new figures reveal that total payroll growth was revised downward by approximately 403,000 jobs. Crucially, this downward revision occurred while real GDP remained robust, including a 3.7 per cent growth rate in the fourth quarter. This decoupling — maintaining high output with significantly lower labour input — is the hallmark of productivity growth.

My own updated analysis suggests a US productivity increase of roughly 2.7 per cent for 2025. This is a near doubling from the sluggish 1.4 per cent annual average that characterised the past decade.

It is fine to suggest caution in interpreting such statistics, but they hardly push the other way.
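The “decoupling” argument rests on a simple identity: labor productivity is output per unit of labor input, so its growth rate is roughly output growth minus labor-input growth. A minimal sketch of that identity follows; the growth rates in it are hypothetical placeholders, not Brynjolfsson’s data, and his own estimate may rely on a more detailed method (for example, hours rather than payroll counts).

```python
def labor_productivity_growth(output_growth: float, labor_growth: float) -> float:
    """Exact growth rate of output per unit of labor, with rates as decimals.

    Productivity = Output / Labor, so
    1 + g_productivity = (1 + g_output) / (1 + g_labor).
    """
    return (1 + output_growth) / (1 + labor_growth) - 1


# Hypothetical illustration: identical 3% output growth, different labor input.
# A downward revision to labor input with output unchanged raises measured
# productivity growth one-for-one (approximately).
for labor_growth in (0.02, 0.01, 0.0):
    g = labor_productivity_growth(0.03, labor_growth)
    print(f"labor input {labor_growth:+.0%}: productivity {g:+.2%}")
```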

Minimum Wages for Gig Workers Can’t Work

In 2017, I analyzed the Uber Tipping Equilibrium:

What is the effect of tipping on the take-home pay of Uber drivers? Economic theory offers a clear answer. Tipping has no effect on take home pay. The supply of Uber driver-hours is very elastic. Drivers can easily work more hours when the payment per ride increases and since every person with a decent car is a potential Uber driver it’s also easy for the number of drivers to expand when payments increase. As a good approximation, we can think of the supply of driver-hours as being perfectly elastic at a fixed market wage. What this means is that take home pay must stay constant even when tipping increases.

…If Uber holds fares constant, the higher net wage (tips plus fares) will attract more drivers but as the number of drivers increases their probability of finding a rider will fall. The drivers will earn more when driving but spend less time driving and more time idling. In other words, tipping will increase the “driving wage,” but reduce paid driving-time until the net hourly wage is pushed back down to the market wage.

A paper by Hall, Horton and Knoepfle showed that’s exactly what happened.
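The free-entry logic of the tipping argument can be sketched as a toy model. This is an illustrative simplification, not the model in the Hall, Horton and Knoepfle paper: it assumes rider demand is fixed because fares are held constant, every ride takes the same time, rides are shared evenly among drivers, and drivers enter or exit until hourly earnings equal a fixed market wage (perfectly elastic supply). All parameter values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Equilibrium:
    drivers: float          # number of drivers logged in during the hour
    busy_share: float       # fraction of each driver's hour spent on paid rides
    hourly_earnings: float  # pay per logged-in hour


def free_entry_equilibrium(pay_per_ride: float, rides_per_hour: float,
                           ride_hours: float, market_wage: float) -> Equilibrium:
    """Free-entry equilibrium in a toy ride-hail market (illustrative assumptions).

    Total driver pay per hour is pay_per_ride * rides_per_hour. Drivers keep
    entering until that pot, split evenly, gives each exactly market_wage.
    """
    if min(pay_per_ride, rides_per_hour, ride_hours, market_wage) <= 0:
        raise ValueError("all inputs must be positive")
    drivers = pay_per_ride * rides_per_hour / market_wage
    rides_per_driver = rides_per_hour / drivers
    busy_share = rides_per_driver * ride_hours
    if busy_share > 1:
        raise ValueError("too few drivers to serve demand; the toy model breaks down")
    return Equilibrium(drivers, busy_share, pay_per_ride * rides_per_driver)


# Hypothetical numbers: a tip raises pay per ride from $15 to $18.
for pay in (15.0, 18.0):
    eq = free_entry_equilibrium(pay, rides_per_hour=1000, ride_hours=0.25,
                                market_wage=20.0)
    print(f"pay per ride ${pay:.0f}: {eq.drivers:.0f} drivers, "
          f"busy {eq.busy_share:.0%} of the time, ${eq.hourly_earnings:.2f}/hour")
```

Higher pay per ride draws in more drivers, each spends a smaller share of the hour on paid rides, and hourly earnings end up back at the market wage. Reading pay per ride as pay per task gives the same logic for a per-task pay floor.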

More recently, in 2024, Seattle implemented “PayUp”, a pay package for gig workers such as DoorDash drivers that required a minimum wage based on the time worked and miles travelled for each offer. Note that this is not a minimum wage for all workers but for one type of worker in a large market. For this reason, we can use the same analysis as with Uber tipping. The supply of workers is very elastic and essentially fixed at the market wage for workers of similar skill. Thus, we would expect a zero effect on net pay.

Here is a recent NBER paper by An, Garin and Kovak looking at the effects of the Seattle law:

We find that the minimum pay law raised delivery pay per task….At the same time, the policy led to a reduction in the number of tasks completed by highly attached incumbent drivers (but not an increase in exit from delivery work), completely offsetting increased pay per task and leading to zero effect on monthly earnings. We find evidence that drivers experienced more unpaid idle time and longer distances driven between tasks…Using a simple model of the labor market for platform delivery drivers, we show that our evidence is consistent with free entry of drivers into the delivery market driving down the task-finding rate until expected earnings return to their pre-reform level.

All of this is a general result of the Happy Meal Fallacy.

Malthus had real influence

From a recent paper by Eric Robertson:

Public officials often fail to implement government policy as directed, yet the role of economic ideas in shaping these implementation choices is poorly understood. This paper provides causal evidence that exposure to economic ideas can durably influence bureaucrat behavior. I study British colonial bureaucrats in India, exploiting a natural experiment created by the abrupt death of Thomas Malthus in 1834, replacing his economics instruction at a bureaucrat training college for that of a contemporary critic, Richard Jones. Whereas Malthus regarded economic distress as a natural mechanism for restoring equilibrium by reducing population growth, Jones disagreed with this view. Linking rainfall shocks to district-level fiscal responses, I show that officials trained by Malthus delivered less relief during droughts, providing 0.10-0.25 SD less aid across all major measures compared with officials taught by Jones. The results reveal that exposure to abstract economic ideas can shape real-world policy implementation for decades.

This may be a case where using rainfall shocks in a paper actually makes sense. Via Krzysztof Tyszka-Drozdowski.

Natural and Artificial Ice

Excellent Veritasium video on the 19th century ice industry. Shipping ice from America to India would hardly seem like a wise idea—it’s hard to imagine ever getting a committee to approve such a venture—but entrepreneurs are free to try wacky ideas all the time, and sometimes they pay off, resulting in great riches. That’s the story of the “Ice King,” Frederic Tudor, who lost money for years before figuring out the insulation and logistics needed to make the trade profitable.

What I hadn’t fully appreciated is how the ice trade reshaped shipping, diet, and city design before the invention of mechanical refrigeration. Ice created the cold chain, and the cold chain made it possible to move fresh meat, fish, and produce over long distances. That in turn enabled cities to grow far beyond what local agriculture could support and shifted the American diet from salted and smoked provisions toward fresh food.

The profits of the ice trade encouraged investment in artificial ice which initially was met with resistance—natural ice is created by God!—a classic example of incumbents wrapping their economic interests in moral language, a pattern we see repeated with every disruptive technology from margarine to ridesharing.

Lots of lessons in the video about option value, permissionless innovation, and creative destruction. New technologies destroy old industries and create new ones that no one could have foreseen. The moral panic over artificial ice replacing the natural kind is no doubt familiar.

Hat tip: Naveen Nvn

The economics of corporate espionage

We provide systematic evidence on the economic damages from espionage to US firms and industries. Compiling a comprehensive dataset of publicly disclosed espionage incidents from 1995-2024, we establish that espionage has substantial negative effects on targeted firms. In an event-study design, revenues and R&D expenditures at targeted firms decline by roughly 40% within five years, with effects persisting for up to a decade. These effects do not appear for firms unsuccessfully targeted for espionage, supporting a causal interpretation. These firm-level damages translate into measurable aggregate effects on US industry: exports in targeted sectors decline by 60% over a decade. Given these substantial damages, we investigate whether firms restrict knowledge sharing in response to espionage. Across a wide range of outcomes, we find no evidence of such restrictions. Firms do not reduce their patenting with foreign inventors, and do not discriminate in employment based on perceived espionage risk. Overall, espionage has clear economic harms to targeted firms and US industry, but firms are puzzlingly unresponsive in how they manage innovation.

That is from a new paper by Andrew Kao and Karthik Tadepalli. Via Kris Gulati.

Taxing Beta, Exempting Alpha: A Benchmark-Based Inheritance Regime

This paper proposes a generational benchmark inheritance regime as a structural replacement for the federal estate tax. By distinguishing between systemic market returns (Beta) and active value creation (Alpha), the regime captures the passive growth of capital at generational boundaries while fully exempting idiosyncratic surplus. Using a Pareto tail interpolation (α ≈ 1.163) calibrated to Federal Reserve wealth data, we estimate baseline annual revenue of approximately $295 billion under conservative assumptions. This revenue is sufficient to finance a 2.1 percentage point reduction in the OASDI payroll tax, shifting the fiscal burden from labor to underperforming dynastic capital. Unlike continuous wealth taxes, the regime requires no new valuation machinery, relying exclusively on existing estate and gift tax procedures. We situate the proposal within the Jeffersonian principle of usufruct and the modern literature on optimal inheritance taxation.
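The abstract does not spell out its interpolation, so what follows is only a generic sketch of the textbook two-point Pareto method, not the paper’s calibration. If the number of households with wealth above w falls off as w^(-α), two (threshold, count) points pin down α, and for α > 1 the average wealth of households above a threshold w0 is α/(α - 1) · w0. The thresholds and counts in the usage lines are hypothetical.

```python
import math


def pareto_alpha(w1: float, n1: float, w2: float, n2: float) -> float:
    """Estimate the Pareto tail exponent from two points on the tail.

    n1 and n2 are counts of households with wealth above w1 and w2 (w2 > w1).
    Under a Pareto tail, n(w) = C * w**(-alpha), so
    alpha = ln(n1 / n2) / ln(w2 / w1).
    """
    if not (w2 > w1 > 0 and n1 > n2 > 0):
        raise ValueError("need w2 > w1 > 0 and n1 > n2 > 0")
    return math.log(n1 / n2) / math.log(w2 / w1)


def count_above(w: float, w1: float, n1: float, alpha: float) -> float:
    """Interpolated number of households above w, anchored at (w1, n1)."""
    return n1 * (w / w1) ** (-alpha)


def mean_above(threshold: float, alpha: float) -> float:
    """Average wealth of households above threshold; finite only if alpha > 1."""
    if alpha <= 1:
        raise ValueError("the tail mean is infinite for alpha <= 1")
    return alpha / (alpha - 1) * threshold


# Hypothetical tail points: 1,000,000 households above $1m, 50,000 above $10m.
alpha = pareto_alpha(1e6, 1_000_000, 1e7, 50_000)
print(f"alpha ~ {alpha:.3f}; "
      f"households above $5m ~ {count_above(5e6, 1e6, 1_000_000, alpha):,.0f}; "
      f"mean wealth above $10m ~ ${mean_above(1e7, alpha):,.0f}")
```

With the paper’s α ≈ 1.163, the same formula puts average wealth above any threshold at about 7.1 times the threshold (1.163 / 0.163), which shows how heavily any revenue estimate of this kind leans on the very top of the distribution.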