AI Risks

Two new papers/initiatives indicate severe risks from AI, interestingly in opposite directions. The first is that the most advanced frontier models are now capable of finding and exploiting software vulnerabilities in ways that could be used to crash or control pretty much all the world’s major systems.

Anthropic: We formed Project Glasswing because of capabilities we’ve observed in a new frontier model trained by Anthropic that we believe could reshape cybersecurity. Claude Mythos Preview is a general-purpose, unreleased frontier model that reveals a stark fact: AI models have reached a level of coding capability where they can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

Mythos Preview has already found thousands of high-severity vulnerabilities, including some in every major operating system and web browser. Given the rate of AI progress, it will not be long before such capabilities proliferate, potentially beyond actors who are committed to deploying them safely. The fallout—for economies, public safety, and national security—could be severe. Project Glasswing is an urgent attempt to put these capabilities to work for defensive purposes.

That’s from Anthropic. The irony is that the company that has developed a frontier model capable of infiltrating and undermining more or less any computer system in the world is the one that has been forbidden from working with the US government. It’s as if a private firm developed nuclear weapons and the American government refused to work with them because they were too woke. Okey dokey.

The second paper on AI risks is AI Agent Traps from Google DeepMind. They point out that AI agents on the web are vulnerable to all kinds of attacks from things like text in HTML never read by humans, hidden commands in PDFs, commands encoded in the pixels of images using steganography, and so forth.

Putting this together we have the worrying combination that very powerful AIs are very vulnerable. Will AI solve the problems of AI? Eventually the software will be made secure but weird things happen in arms races and it’s going to be a bumpy ride.

The Public Choice Outreach Conference!

The annual Public Choice Outreach Conference is a crash course in public choice. The conference is designed for undergraduates and graduates in a wide variety of fields. It’s entirely free. Indeed scholarships are available! The conference will be held Friday, June 12 to Sunday, June 14, near Washington, DC in Reston, VA. Lots of great speakers including Tyler, myself, Bryan Caplan, Robin Hanson, Jon Klick, Shruti Rajagopalan and more.

Please apply and encourage your students to apply.

The CA Minimum Wage Increase: Summing Up

Two recent companion papers, Did California’s Fast Food Minimum Wage Reduce Employment? by Clemens, Edwards and Meer and The Effects of California’s $20 Fast Food Minimum Wage on Prices by Clemens, Edwards, Meer and Nguyen, give what I think is a plausible and consistent account of California’s $20 fast food minimum wage.

California’s $20 fast food minimum wage raised wages in the sector by roughly 8 percent relative to the rest of the country but employment fell by 2.3 to 3.9 percent (depending on specification, median ~3.2%), translating to about 18,000 lost jobs. Food away from home (FAFH) prices in California’s four CPI-reporting MSAs rose 3.3–3.6 percent relative to 17 control MSAs. Falsification tests on Food at Home and All Items Less Food and Energy show zero differential movement—this is specific to restaurant prices.

What’s interesting is that the papers are independently estimated but the results fit together. The price paper uses Andreyeva et al.’s demand elasticity of -0.8 to convert the estimated price increases into implied quantity declines: about 3.9–4.1 percent in limited-service and 1.7–1.8 percent in full-service. These align well with the employment declines of 3.2 and 2.1 percent estimated in the first paper.
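
The conversion is simple arithmetic: the implied percentage change in quantity is the demand elasticity times the percentage change in price. Here is a minimal Python sketch, assuming a constant elasticity of -0.8. Purely for illustration it applies that elasticity to the overall FAFH price range quoted above, not to the sector-specific price estimates behind the papers' limited-service and full-service figures. The function name is hypothetical.

```python
def implied_quantity_change(price_change_pct, elasticity=-0.8):
    """Percent change in quantity implied by a percent change in price,
    assuming a constant demand elasticity."""
    return elasticity * price_change_pct

# Overall FAFH price increases quoted in the text (illustrative inputs only).
fafh_price_range = (3.3, 3.6)
implied = [round(implied_quantity_change(p), 2) for p in fafh_price_range]
print(implied)
```

Run the logic in reverse and a quantity decline of about 4 percent in limited-service implies a sector price increase of roughly 5 percent, which is larger than the overall FAFH figure.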

The consistency tells us something about the mechanism. One thing we have learned about the minimum wage in recent years is that the pass-through effect is large and more of the employment decline is driven by pass-through than by labor-capital substitution. In other words, prices rose, quantity demanded fell, and that’s what killed the jobs, not robots replacing workers. Not today, anyway.

In terms of welfare, the bulk of employed workers get an 8% wage increase while a small minority are disemployed. The big transfer was from consumers to workers. California has roughly 39 million residents, all of whom face 3.3–3.6% higher FAFH prices. The transfer is likely regressive: lower-income households spend a larger budget share on fast food specifically. So the policy effectively taxes low-income consumers generally to raise wages for a subset of low-income workers, while eliminating jobs for another subset. Your mileage may vary but I don’t see this as a big win for workers. We thought small increases in the minimum wage were absorbed. Maybe some were, or maybe the effects were just hard to estimate. Either way, you can’t extrapolate from small increases to big ones because the effect is non-linear. Big increases in the minimum wage start to bite.

As usual, when it comes to fast food there is no such thing as a free lunch.

Addendum: Clemens’s JEP paper continues to be the masterclass in how to think through minimum wage issues.

The President(s) Fought the Law and the Law Won

In our textbook, Modern Principles, Tyler and I emphasize that Congress and the President are subject to a higher law, the law of supply and demand. In an excellent column, Jason Furman gives a clear example of how difficult it is to fight the law of inelastic demand:

…Today a given number of autoworkers can make, according to my calculations, three times as many cars in a year as they could 50 years ago.

The problem is that consumers do not want three times as many cars. Even as people get richer, they increase their spending on manufactured goods only modestly, preferring instead to spend more on services like travel, health care and dining out. There are only so many cars a family can own, but that’s not the case for expensive vacations or fancy meals. As a result we have fewer people working in auto factories and more people working in luxury resorts and the like.

These forces — rising productivity but steady demand — explain why the United States was losing manufacturing job share as far back as the 1950s and 1960s, long before trade became a major factor.

How to Make Judges and Referees Pay

A recent viral tweet, quoted by Elon Musk, points out that bartenders can be fined or even imprisoned if they serve alcohol to patrons who later kill someone while under the influence. Judges, in contrast, enjoy absolute or qualified immunity even when they repeatedly release defendants who go on to kill.

I agree that judges should face stronger incentives to make good decisions, but the obvious problem with penalizing judges who release people who later commit crimes is that judges would then have very little incentive to release anyone—and that too is a bad decision. Steven Landsburg solved this problem in his paper A Modest Proposal to Improve Judicial Incentives, published in my book Entrepreneurial Economics.

Landsburg’s solution is elegant: we must also pay judges a bounty when they release a defendant.

Whether judges would release more or fewer defendants than they do today would depend on the size of the cash bounty, which could be adjusted to reflect the wishes of the legislature. The advantage of my proposal is not its effect on the number of defendants who are granted bail but the effect on which defendants are granted bail. Whether we favor releasing 1 percent or 99 percent, we can agree that those 1 percent or 99 percent should not be chosen randomly. We want judges to focus their full attention on the potential costs of their decisions, and personal liability has a way of concentrating the mind.

One might object that a cash bounty will cost too much, but recall that the bounty is balanced by penalties when a released defendant commits a future crime. The bounties and penalties can be calibrated so that on average the program is budget-neutral. The key is to get the incentives right on the margin.
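
To see why the bounty changes how many defendants are released but not which ones, here is a minimal Python sketch. It assumes a purely self-interested judge who knows each defendant's probability of reoffending. All names and numbers are hypothetical and are not taken from Landsburg's paper.

```python
def judge_releases(reoffense_prob, bounty, penalty):
    """Release when the bounty exceeds the expected penalty."""
    return bounty > reoffense_prob * penalty

def released(risks, bounty, penalty):
    """Defendants (identified by their risk) that the judge chooses to release."""
    return [p for p in risks if judge_releases(p, bounty, penalty)]

def expected_net_cost(risks, bounty, penalty):
    """Expected bounties paid minus expected penalties collected."""
    return sum(bounty - p * penalty for p in released(risks, bounty, penalty))

risks = [0.02, 0.05, 0.10, 0.30, 0.60]   # hypothetical reoffense probabilities
penalty = 100_000                        # hypothetical penalty per reoffense

low = released(risks, 4_000, penalty)    # implied risk cutoff of 0.04
high = released(risks, 40_000, penalty)  # implied risk cutoff of 0.40
print(low, high)
```

Raising the bounty moves the cutoff `bounty / penalty`, but at any cutoff the judge releases the lowest-risk defendants first. In this simple version the judge's expected net payment is never negative, so making the program budget-neutral would take an offsetting adjustment elsewhere, for example in base pay. That last step is my assumption, not part of the proposal as described.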

The structure of this problem is quite general. Ben Golub, for example, writes:

There should be a retrospective reputational penalty imposed on referees who vote no on a paper because the paper is too simple technically — if that paper ends up being important. It’s an almost definitional indicator of bad judgment.

Quite right, but a penalty for rejection needs to be balanced with a bonus for acceptance. Get the marginal incentive right and quality will follow!

Grade Caps are Not a Good Solution to Grade Inflation

It’s well known that grade inflation has “degraded” the informational content of grades at many colleges. At Harvard, two-thirds of all undergraduate grades are now A’s—up from about a quarter two decades ago. In response, a Harvard faculty committee has proposed capping A grades at 20 percent of each class (plus a cushion for small courses). That may give professors some cover to resist further inflation, but it doesn’t solve the real problem.

The real problem is not inflation per se. It’s that students are penalized for taking harder courses with stronger peers. A grade cap leaves that distortion intact—and can even amplify it. As Harvard economist Scott Kominers argues:

A grade cap systematically penalizes ambitious students for surrounding themselves with strong classmates. Perverse course-shopping incentives ensue as a result. A student who is prepared for an advanced course but concerned about landing in the bottom 80 percent may choose to drop down preemptively—seeking out a pond where they are a relatively bigger fish. As strong students move into lower-level courses, competition for A grades increases there while harder courses continue to shrink—reducing their A allocation further and driving more students away.

The underlying issue is informational. A grade tries to capture two things—student ability and course difficulty—with a single number. Gans and Kominers show that in general this is impossible: if some students take math and earn B’s while others take political science and earn A’s, there is no way, from grades alone, to tell whether the difference reflects ability or course difficulty.

There is, however, a solution in some cases. Clearly, if every student takes some math and political science courses, informative patterns can emerge. If math students tend to get B’s in math but A’s in political science, while political science students get A’s in their own field but C’s in math, you can begin to separate course difficulty from student ability.

Students don’t all take the same classes. But full overlap isn’t necessary—you just need a connected network. If Alice just takes math courses, Joe takes math and political science courses, and Bob just takes political science courses, then Alice and Bob can be compared through Joe. With enough of these links, the entire system can be stitched together. The more overlap, the more precise the estimates.
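
The connectedness condition can be checked mechanically. The Python sketch below treats students and courses as a network and asks whether two students are linked by a chain of shared courses. It illustrates only the connectivity idea, not the Gans and Kominers estimator, and the function name is hypothetical.

```python
from collections import defaultdict, deque

def comparable(enrollments, a, b):
    """True if students a and b are linked through a chain of shared courses."""
    by_course = defaultdict(set)
    for student, courses in enrollments.items():
        for course in courses:
            by_course[course].add(student)
    # Breadth-first search from a, hopping student -> course -> classmate.
    seen, queue = {a}, deque([a])
    while queue:
        student = queue.popleft()
        if student == b:
            return True
        for course in enrollments[student]:
            for other in by_course[course]:
                if other not in seen:
                    seen.add(other)
                    queue.append(other)
    return False

enrollments = {
    "Alice": {"math"},
    "Joe": {"math", "political science"},
    "Bob": {"political science"},
}
print(comparable(enrollments, "Alice", "Bob"))
```

Remove Joe and the chain breaks: Alice and Bob share no path, and grades alone cannot rank them.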

Valen Johnson proposed a practical method along these lines in 1997. Gans and Kominers embed the same intuition in a much more general framework, showing exactly what can and cannot be inferred, and under what conditions.

The great thing about achievement indexes based on relative comparisons is that they are robust to grade inflation and do not penalize students for taking hard classes or subjects. A political science student who chooses to take a tough math class instead of an easy-A intro to sociology course won’t be penalized because their low math grade will, in effect, be boosted by the difficulty of the course/quality of the students. That’s good for the student and also good for disciplines that have lost students over the years because they held the line on grade inflation.

One final point. Harvard’s cap proposal appears to have been developed with little engagement with researchers who have studied problems like these for decades in the mechanism and market design literature—people like Kominers, Gans, Budish, Roth, Maskin, and Sönmez, some of them at Harvard! Moreover, this isn’t a case of ignoring high-theory for practice. The high-theory of mechanism design has produced real-world systems including kidney exchanges, school choice mechanisms, physician-resident matching, even the assignment of students to courses at Harvard, as well as many other mechanisms. Mechanism design is practical.

Grade inflation is a mechanism design problem—and we know a lot about how to solve it, if we want to solve it.

Social Security Should Be a Forced Savings Program Not a Welfare Program

There is a growing movement to eliminate the wage cap on Social Security taxes while capping benefits. The argument, often from the center-right, is that Social Security is insolvent and that “tough” choices are needed to save it. But this moves the system in exactly the wrong direction.

One of the better features of Social Security is that it has never been purely redistributive. It has also functioned, in part, as a forced-savings program. The Social Security Administration itself emphasizes that benefits depend on earnings history: earn more, retire with more. Why do some people receive large Social Security checks? Because they paid a lot more into the system.

Eliminating the wage cap while capping benefits weakens, and in the limit destroys, that connection. It turns Social Security away from forced saving and toward retirement welfare financed by a broader tax on earnings. That is a bad idea.

The problem is not just that this creates another welfare program. It also worsens marginal incentives. A tax that buys you a claim on future benefits is not the same as a pure tax. Suppose 10 percent of your salary goes into a 401(k). That reduces current consumption, but it is not simply money lost to the state. You receive an asset in return. It is closer to a purchase than to a tax–a reason to work more not a reason to work less.

Social Security is not a personal retirement account, but it does contain that logic. There is a connection between taxes paid and benefits received. To the extent that workers understand that connection, the payroll tax is less distortionary than an ordinary tax of the same size. Part of what workers pay is offset by the expectation of future benefits.

Gut that connection, however, and the tax becomes more distortionary even if total taxes paid and total benefits received stay the same. The averages can remain unchanged while the marginal incentives deteriorate. Once additional taxes no longer generate additional benefits, the system looks much more like a straight tax on work.
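
A back-of-the-envelope Python sketch of the point about marginal incentives. The net marginal tax is the payroll rate minus whatever extra benefits an extra dollar of tax buys. The 12.4 percent rate is the combined employer and employee Social Security payroll tax. The 50-cent benefit link is a hypothetical number chosen only for illustration, and the function name is mine.

```python
def effective_marginal_tax(payroll_rate, benefit_per_dollar_taxed):
    """Net marginal tax rate on wages.

    benefit_per_dollar_taxed is the present value of extra future benefits
    earned per extra dollar of payroll tax paid (0 means no link at all).
    """
    return payroll_rate * (1 - benefit_per_dollar_taxed)

PAYROLL_RATE = 0.124  # combined Social Security payroll tax rate

linked = effective_marginal_tax(PAYROLL_RATE, 0.5)    # hypothetical link
unlinked = effective_marginal_tax(PAYROLL_RATE, 0.0)  # benefits capped
print(round(linked, 3), round(unlinked, 3))
```

Nothing about average taxes or average benefits enters the calculation. Only the marginal link does, which is why cutting it raises the wedge even if the totals are unchanged.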

A much better reform would move in the opposite direction: strengthen the link between contributions and benefits. Make Social Security more like what many people already think it is—an individual account that accumulates benefits over time. The stronger that link, the lower the effective tax wedge.

This would also improve the politics of the system. A welfare program invites zero-sum conflict: my benefit comes at your expense. A claim-based system is less divisive. It ties benefits more clearly to contributions and makes rising prosperity good for everyone. In that kind of system, we can all become richer—including low-wage immigrants—without treating retirement policy as a fight over who gets to pick whose pocket.

Addendum: James Buchanan first made these points here. John Cochrane gets the economics right, of course.

Marginal Garfield

Marginal Garfield generates an original Garfield cartoon every day based on posts from Marginal Revolution! Here is the first strip. You can guess the post. Is there now any reason to come to MR? What a world.

You can also check out Rationalist Garfield which pulls from Less Wrong.

We thank Tim Hwang.

Physician Incomes and the Extreme Shortage of High IQ Workers

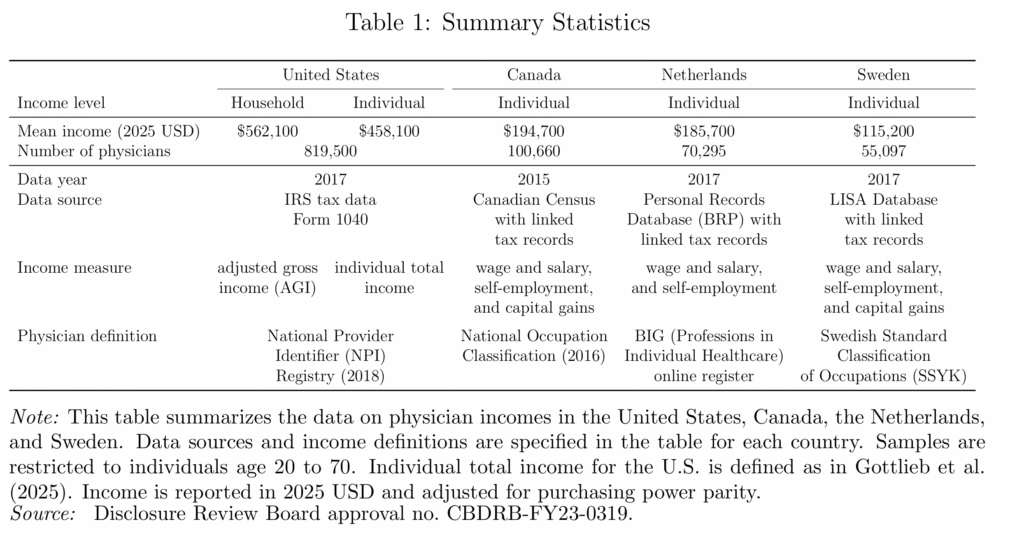

Physician incomes are extraordinarily high in the United States. A new NBER paper finds that U.S. physicians earn roughly two to four times as much as their counterparts in Canada, the Netherlands, and Sweden.

Why? Is it some feature particular to the US health care sector? Probably not. The same paper finds that physicians in the US have about the same relative income ranking as in Canada, the Netherlands, and Sweden. In other words, lots of high-skill workers in the US earn high incomes and physicians don’t look unusual relative to these other high-skill groups.

That is exactly what one would expect in an economy with an extreme shortage of high-IQ, high-skill workers. The US is a uniquely productive economy for high-skill workers which is why the US demand for foreign workers and the foreign demand to immigrate are so strong, especially at the high end. By one estimate, “immigrants account for 32 percent of aggregate U.S. innovation.”

Immigration of high-skill workers, such as through the H-1B and EB-1, EB-2, and EB-3 programs, together with stronger U.S. education, is one way to reduce the shortage of high-skill workers. The alternative is simpler: make the economy less dynamic and less rewarding for talent. Then wages would fall and fewer ambitious people would bother coming. A solution, but only if your preferred cure for scarcity is decline.

Oil versus Ice Cream

When Tyler and I were writing Modern Principles of Economics, we wanted examples that were modern, specific, and grounded in the real world. That has been a bit of a headache, because we have to update them with every new edition. Our biggest competitor uses the ice cream market as its central example and never has to revise. Smart! But for us, the extra work has been worth it.

We chose the oil market as our central example. Oil is always in the news, and it works really well across a wide range of textbook topics: the elasticity of demand and supply; oligopoly and cartels; the shutdown condition; shocks; expectations, speculation and futures markets; and the macroeconomic implications of oil prices, which connect micro to macro.

Yes, keeping the examples current takes more work. But when a student sees that the price of crude has surged past $100 a barrel because Iran closed the Strait of Hormuz—choking off 20% of the world’s oil supply—they have the framework to understand what is happening. Supply shock, inelastic demand, expectations and speculation, the macroeconomic transmission to GDP—it’s all right there in the headlines. Try doing that with the ice cream market.

See the Invisible Hand. Understand Your World. It is not just our slogan. It’s our method.

A Danish Fix for U.S. Mortgage Lock-in

In the Danish mortgage market every mortgage is backed by a corresponding bond. Thus, if a home buyer takes out a 500k mortgage at 3% interest, a bond is issued that pays the lender 3% interest on 500k. I’ve written about this system several times before. It has two distinct advantages.

- The correspondence principle means that mortgage banks don’t bear interest rate risk but instead specialize in evaluating credit risk (the risk that the borrower won’t pay). Deep markets rather than banks take on the interest rate risk. This makes the Danish system very stable.

- Mortgages can be pre-paid by buying the corresponding bond at market rates and extinguishing it. If a Danish borrower takes out a 500k mortgage at 3% interest and then rates rise to 6%, for example, the value of that mortgage falls to about 358k and the borrower can buy the corresponding bond, deliver it to the bank, and, in this way, extinguish the loan.
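
The fall in value in the second point is ordinary bond arithmetic: the fixed payments on the old loan are discounted at the new, higher market rate. A minimal Python sketch, assuming a 30-year, fully amortizing loan with monthly payments, repriced immediately after origination. These terms are my assumptions, since the example above does not state them.

```python
def monthly_payment(principal, annual_rate, years=30):
    """Level monthly payment on a fully amortizing fixed-rate loan."""
    r = annual_rate / 12
    n = years * 12
    return principal * r / (1 - (1 + r) ** -n)

def market_value(payment, market_rate, years_left=30):
    """Present value of the remaining payments at the current market rate."""
    r = market_rate / 12
    n = years_left * 12
    return payment * (1 - (1 + r) ** -n) / r

pay = monthly_payment(500_000, 0.03)   # payment fixed at the 3% contract rate
value = market_value(pay, 0.06)        # same payments valued at 6%
print(round(pay, 2), round(value))
```

Under these assumptions the value comes out in the low 350s, close to but not exactly the figure quoted above, which presumably rests on somewhat different loan terms. Either way, the borrower can retire a 500k debt for far less than par.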

In the US, a mortgage can be pre-paid only at par. As a result, if interest rates rise, home owners don’t want to move because moving would require them to give up a 3% mortgage and replace it with, say, a 6% mortgage. This is called the lock-in effect. Lock-in can be quite severe. Fonseca and Liu find:

Using individual-level credit record data and variation in the timing of mortgage origination, we show that a 1 percentage point decline in the difference between mortgage rates locked in at origination and current rates reduces moving by 9% overall and 16% between 2022 and 2024, and this relationship is asymmetric. Mortgage lock-in also dampens flows in and out of self-employment and the responsiveness to shocks to nearby employment opportunities that require moving, measured as wage growth within a 50- to 150-mile ring and instrumented with a shift-share instrument.

What about in Denmark? The Danes definitely take advantage of the opportunity to buy back. Part of this is due to tax advantages but those are just a transfer. More importantly, Danes don’t get locked in. A new paper by Berger, Jeong, Marx, Olesen, and Tourre compares mobility across Denmark and the US:

We study Danish fixed-rate mortgage contracts, which are identical to those in the United States except that borrowers may repurchase their mortgages at market value. Using Danish administrative data, we show that households actively buy back debt when mortgage prices fall below par and that household mobility is largely insensitive when existing mortgage rates are below prevailing market rates — unlike in the United States, where moving rates fall sharply as rates rise. We develop an equilibrium model that explains these patterns and show that introducing a repurchase-at market option into U.S. mortgages substantially reduces interest-rate-induced lock-in with limited effects on equilibrium mortgage rates.

The last point is especially important because you might wonder whether we are assuming a free lunch. After all, if US borrowers lose when they have to pre-pay at par then lenders surely gain. And if lenders gain on pre-payment then they will be willing to lend at lower rates at mortgage origination. No free lunch, right? The logic is correct but note that the gain to lenders comes mainly from the relatively small set of households that move despite lock-in so the pre-payment bonus to lenders is quite small. Under the authors’ calibrated model, mortgage interest rates in the US would rise by only 18 basis points on average if the US moved to a Danish type system.

In other words, there actually is a free or at least a low-priced lunch because lock-in is bad for homeowners and it doesn’t benefit lenders. As a result, moving to a Danish system would create net benefits.

Understanding Demonic Policies

Matt Yglesias has a good post on the UK’s Triple Lock, which requires that UK pensions rise in line with whichever is highest: wages, inflation, or 2.5 percent. Luis Garicano calls this “the single stupidest policy in the entire Western world” — and I’d be inclined to agree, if only the competition weren’t so fierce.

The triple lock guarantees that pensioner incomes grow at the expense of everything else, and the mechanism bites hardest when the economy is weakest. During the 2009 financial crisis wages fell and inflation declined, for example, yet pensioner incomes rose by 2.5 percent! (Technically this was under a double-lock period; the triple lock came slightly later — as if the lesson from the crisis was that the guarantee hadn’t been generous enough.)
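
The ratchet is easy to see in a few lines of Python. The uprating rule is just a maximum, so pensions match wages in good years and beat them in bad ones. The yearly figures below are hypothetical, not actual UK data.

```python
def triple_lock(wage_growth, inflation, floor=2.5):
    """Annual pension uprating in percent: the highest of the three."""
    return max(wage_growth, inflation, floor)

# Hypothetical (wage growth %, inflation %) pairs for three years.
years = [(3.0, 2.0), (-1.0, 0.5), (1.0, 4.0)]

pension, wages = 100.0, 100.0
for wage_growth, inflation in years:
    pension *= 1 + triple_lock(wage_growth, inflation) / 100
    wages *= 1 + wage_growth / 100

print(round(pension, 1), round(wages, 1))
```

Pensions never do worse than wages in any single year, so the gap between the two indexes can only widen.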

Now, as Yglesias notes, if voters were actually happy with pensioner income growing at the expense of worker income, that would be one thing. But no one seems happy with the result. The same pattern is clear in the United States:

As I wrote in January, there is a pattern in American politics where per capita benefits for elderly people have gotten consistently more generous in the 21st century even as the ratio of retired people to working-age people has risen.

This keeps happening because it’s evidently what the voters want. Making public policy more generous to senior citizens enjoys both broad support among the mass public and it’s something that elites in the two parties find acceptable even if neither side is particularly enthusiastic about it. But what makes it a dark pattern in my view is that voters seem incredibly grumpy about the results.

Nobody’s saying things have been going great in America over the past quarter century.

Instead, the right is obsessed with the idea that mysterious forces of fraud have run off with all the money, while the left has convinced itself that billionaires aren’t paying any taxes.

But it’s not some huge secret why it seems like the government keeps spending and spending without us getting any amazing new public services — it’s transfers to the elderly.

The contradictions of “Elderism” are an example of rational irrationality. Individual voters bear essentially no cost for holding inconsistent political beliefs: wanting generous pensions and robust public services and low taxes is essentially free, since no single vote determines the outcome. The irrationality is individually rational and collectively ruinous. Voters are not necessarily confused about what they want; they simply face no price for wanting incompatible things. Arrow’s impossibility theorem adds another layer: even if each voter held perfectly coherent preferences, there is no reliable procedure for aggregating them into a coherent social choice. The grumpiness Yglesias documents may not reflect hypocrisy so much as the incoherence of demanding that collective choice make sense. Collective choice cannot in general be rationalized by coherent preferences, so it’s perfectly possible for a democracy to simultaneously “choose” generous pensions and “demand” better services for workers, with no mechanism to register the contradiction until the bill arrives.
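
The simplest illustration of the aggregation problem is Condorcet's paradox, which is not Arrow's theorem itself but points in the same direction. In the Python sketch below, three hypothetical voters each hold a perfectly coherent ranking, yet majority rule runs in a circle.

```python
# Each ballot ranks the options from most to least preferred (hypothetical voters).
ballots = [
    ("pensions", "services", "low taxes"),
    ("services", "low taxes", "pensions"),
    ("low taxes", "pensions", "services"),
]

def majority_prefers(x, y, ballots):
    """True if a strict majority of ballots rank x above y."""
    wins = sum(b.index(x) < b.index(y) for b in ballots)
    return wins > len(ballots) / 2

cycle = [
    majority_prefers("pensions", "services", ballots),
    majority_prefers("services", "low taxes", ballots),
    majority_prefers("low taxes", "pensions", ballots),
]
print(cycle)
```

All three comparisons come out true: a majority prefers pensions to services, services to low taxes, and low taxes to pensions. No individual voter is inconsistent, but the collective "preference" is.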

A New Order of Things

Big infrastructure projects in the developing world for things like water and electricity are under pressure. Chinese and US funding is down and these projects often fall apart due to corruption and political incentives to build but not maintain. It is possible to break old institutions and establish new ones, but “there is nothing more difficult to take in hand, more perilous to conduct, or more uncertain in its success, than to take the lead in the introduction of a new order of things.” Connor Tabarrok gives a great example. Ek Son Chan in Cambodia:

In 1993, the Phnom Penh Water Supply Authority was a catastrophe. The city was emerging from decades of war and genocide. Only 20 percent of the city had connections at all, and water flowed for just 10 hours a day. 72 percent of the water was non revenue water. It was lost to leaks or stolen through illegal connections.

Into this mess walked Ek Son Chan, a young Cambodian engineer appointed as Director General. Over the next two decades he executed an incredible institutional turnaround.

Chan replaced corrupt managers with qualified engineers. He got rid of unmetered taps. Every single connection received a meter and was billed. The old system of manual billing was replaced with a computerized system, which cut down on low level employees giving out free water and receiving kickbacks. Bill collection rates went from 48 percent to 99.9 percent. These changes were intensely unpopular, and Chan faced fierce resistance from rent seekers, from freeloading customers to his own employees. He established an incentive system based on bonuses among the workers, introduced an internal discipline system with a penalty for violators, and set up a discipline commission for all levels of the organization to deal with corruption.

He divided the distribution network into pressure zones with flow monitoring. A 24 hour leak detection team walked the streets at night with listening bars to identify underground leaks.

The institutional change dwarfed the infrastructural change, but was absolutely necessary to make the infrastructure investment worthwhile….

This commitment would not go untested. When Chan tried to enforce bill payment on Cambodia’s elite, he sent his team out to install a water meter on the property of a high ranking general who had been freeloading. The general refused the installation of a meter, so the team attempted to disconnect the water. The general and his bodyguards ran them off the property. When Chan heard of this, he decided not to back down, and mobilized his own team to dig up the pipe and install the meter. Always a leader from the front, Chan jumped in the hole to take a shift at digging. When he looked up, his team had fled, and he was facing down the general himself, who was pointing a gun at his head. In Cambodia in the 90s, consequences for such a high ranking official were unlikely. Chan didn’t give up. He mobilized the local armed police and returned with 20 men to stand off against the general, disconnected him from service and left him out to dry. Chan said this about the dispute:

“He had no water. My office was on the second floor and the general came in with his ten bodyguards to look for me. I said, ‘No. You can come here alone, but with an appointment.’ He couldn’t do anything. He had to return. He said, ‘Okay!’ At that time we had a telephone, a very big Motorola. He came in to make an appointment for tomorrow. I said, ‘Okay, tomorrow you come alone.’ So he comes alone, we talk. ‘Okay. I’ll reconnect on two conditions. The first condition is that you have to sign a commitment saying that you will respect the Water Supply Authority and second, you need to pay a penalty for your bad behavior and you must allow us to broadcast the situation to the public, or no way, no water in your house.’ So he agreed.”

….By 2010, coverage in the city went from 25 percent to over 90 percent with 24 hour service. The utility became financially self sustaining and turned a profit. It was listed on the Cambodia Securities Exchange in 2012. Chan won the Ramon Magsaysay Award in 2006.

By separating the utility company from the low-capacity local government, Ek and PPWSA proved that:

- Functional infrastructure relies on institutional quality and mechanism design.

- State capacity need not exist within the state.

Subscribe for more.

Why is the USDA Involved in Housing?!

In yesterday’s post, The 21st Century ROAD to Housing Act, I wrote that Trump’s Executive Order “cuts off institutional home investors from FHA insurance, VA guarantees and USDA backing…”. The USDA is of course the United States Department of Agriculture. In the comments, Hazel Meade writes:

USDA? Wait, what????

Why is the USDA in any way involved in housing financing?

Are we humanly capable of organizing anything in a rational way?

It’s a good question. The answer is a great illustration of the March of Dimes syndrome. The USDA got involved with housing in the late 1940s with the Farmers Home Administration. The original rationale was to support farmers, farm workers and agricultural communities with housing assistance on the theory that housing was needed for farming and the purpose of the USDA was to improve farming. Not great economic reasoning but I’ll let it pass.

Well, U.S. farm productivity roughly tripled between 1948 and the 1990s as family farms became technologically sophisticated big businesses. So was the program ended? Of course not. Over time the program subtly shifted from farmers to “rural communities.” The shift happened over decades, although it was officially recognized in 1994 when the Farmers Home Administration was renamed the Rural Housing Service. Today rural essentially means low population density, which no longer has any strong connection to agriculture.

So that’s the story of how the US Department of Agriculture came to run a roughly $10 billion annual housing program for non-farmers in non-agricultural communities. And how does it do this? By supporting no-money-down direct lending and a 90 percent guarantee to approved private lenders. Lovely.

It’s a small program in the national totals, but an amusing example of the US government robbing Peter to pay Paul and then forgetting why Paul needed the money in the first place.

The 21st Century ROAD to Housing Act

The 21st Century ROAD to Housing Act appears likely to pass the Senate. The bill contains some genuinely good ideas alongside some very popular but bonkers ideas.

Let’s start with the good ideas.

The bill would streamline NEPA review for federally supported housing, primarily by expanding categorical exclusions. Federal environmental review does impose real costs and delays on housing construction, so reducing unnecessary review is a step in the right direction. The gains will probably be modest—most housing regulation occurs at the state and local level—but removing friction is good.

The bill would also deregulate manufactured housing by eliminating the permanent chassis requirement and creating a uniform national construction and safety standard. The United States once built far more factory-produced housing; in the early 1970s, by some accounts a majority of new homes were factory-built (mobile or modular). Long-run productivity growth in housing almost certainly requires greater use of factory construction. Land-use regulation remains the dominant constraint on supply, but enabling scalable manufacturing is still welcome.

Another interesting provision involves Community Development Block Grants (CDBG). The bill allows CDBG funds to be used for building new housing rather than being largely restricted to rehabilitation of existing housing. More federal spending is not automatically appealing, but the bill adds an unusual incentive mechanism.

The bill creates a tournament for CDBG allocations. Localities that exceed the median housing growth improvement rate among eligible CDBG recipients receive bonus funding. Those below the median face a 10 percent reduction. The key feature is that the penalties fund the bonuses, so the system reallocates money rather than expanding spending.

This is a clever design. It creates competition among localities and benchmarks them against peers rather than against a fixed national target. In effect, the program rewards relative improvement rather than absolute performance, a classic tournament structure. (See Modern Principles for an introduction to tournament theory!)
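To make the budget-neutral mechanics concrete, here is a minimal sketch in Python. The median cutoff and the 10 percent penalty come from the description above. Everything else is my assumption for illustration, not the bill's actual formula: the function name, the rule that the penalty pool is split among winners in proportion to their base grants, and the rule that a locality exactly at the median is left unchanged.

```python
from statistics import median


def reallocate(base_grants, improvement_rates, penalty_rate=0.10):
    """Hypothetical budget-neutral tournament over CDBG-style grants.

    base_grants: {locality: dollars}
    improvement_rates: {locality: housing growth improvement rate}
    Assumption: the pooled penalties are shared among above-median
    localities in proportion to their base grants.
    """
    cutoff = median(improvement_rates.values())
    winners = [n for n, r in improvement_rates.items() if r > cutoff]
    losers = [n for n, r in improvement_rates.items() if r < cutoff]

    # With no one above (or below) the median there is nothing to
    # transfer, so leave every grant unchanged (an assumption).
    if not winners or not losers:
        return dict(base_grants)

    # Penalties collected from below-median localities fund the bonuses.
    pool = sum(base_grants[n] * penalty_rate for n in losers)
    winner_base = sum(base_grants[n] for n in winners)

    new_grants = dict(base_grants)
    for n in losers:
        new_grants[n] = base_grants[n] * (1 - penalty_rate)
    for n in winners:
        new_grants[n] = base_grants[n] + pool * base_grants[n] / winner_base
    return new_grants


# Made-up numbers, purely illustrative.
grants = {"A": 100.0, "B": 200.0, "C": 300.0, "D": 400.0}
rates = {"A": 0.01, "B": 0.02, "C": 0.03, "D": 0.04}
result = reallocate(grants, rates)
```

In this toy example A and B fall below the median and each lose 10 percent, C and D split the resulting pool, and total spending stays the same. Note that a locality's payoff depends on how its peers do, not on hitting a fixed target.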

OK, now for the popular but bonkers ideas. Section 901 (“Homes are for People, Not Corporations”) restricts the purchase of new single-family homes by large institutional investors. Elizabeth Warren is a sponsor of the bill but this section was driven almost entirely by President Trump. Trump issued an Executive Order, Stopping Wall Street from Competing With Main Street Home Buyers, that cuts off institutional home investors from FHA insurance, VA guarantees, USDA backing, Fannie/Freddie securitization and so forth. The bill goes further by imposing a seven-year mandatory divestiture rule, forcing institutional investors to convert rental homes to owner-occupied units after seven years.

No one objects to institutional investors owning apartment buildings. But when the same investors own single-family homes, it breaks people’s brains. Consider how strange the logic sounds if applied elsewhere:

…a growing share of apartments, often concentrated in certain communities, have been purchased by large Wall Street investors, crowding out families seeking to buy condominiums.

Apartments are fine, hotels are fine, but somehow a corporation owning a single-family home is un-American. In fact, the US could do with more rental housing of all kinds! Why take the risk of owning when you can rent? Rental housing improves worker mobility. When foreclosures surged after 2008 and traditional buyers disappeared, institutional investors stepped in and absorbed distressed supply, helping stabilize markets. Who plays that role next time?

Institutional investors own only a tiny number of homes, so even if this were a good idea it wouldn’t be effective. But it’s not a good idea, it’s just rage bait driven by Warren/Trump anti-corporate rhetoric.

What does “Homes are for People, Not Corporations” even mean? This is a slogan for the Idiocracy era. “Food is for People, Not Corporations,” so we should ban Perdue Farms and McDonald’s?